What Is the Experience Signal in E-E-A-T? A Practical Guide for SEO

AI Summary

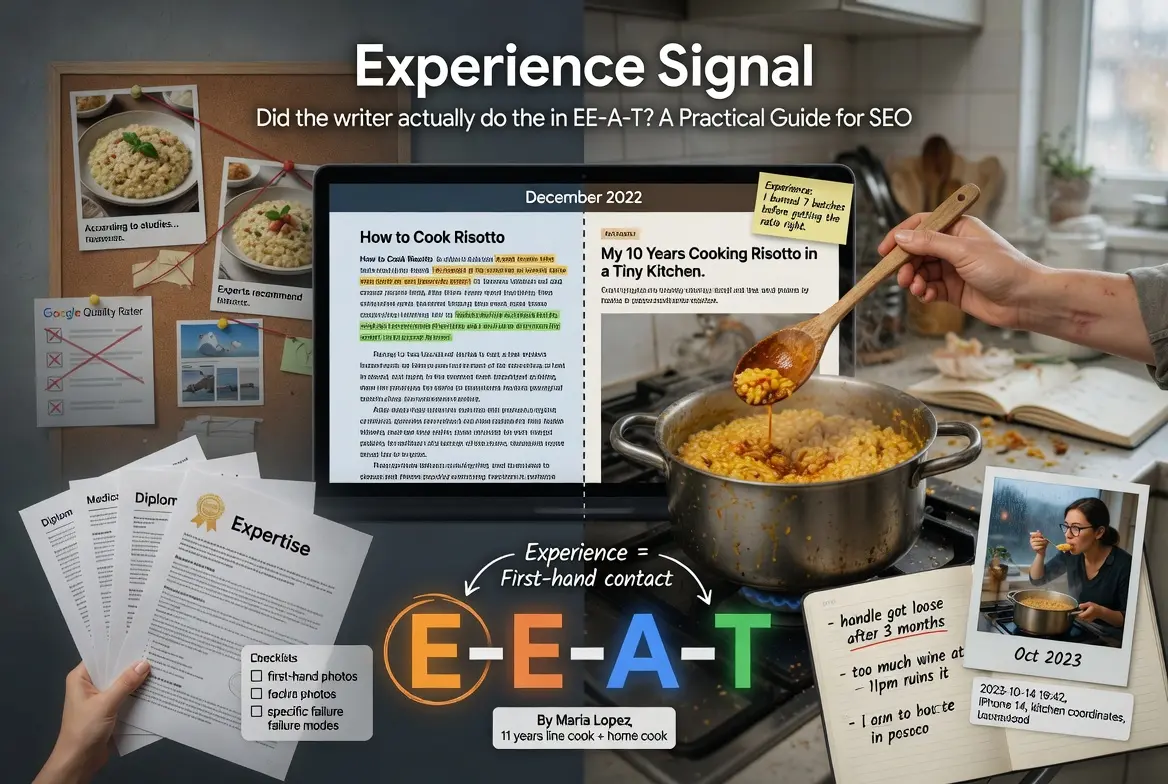

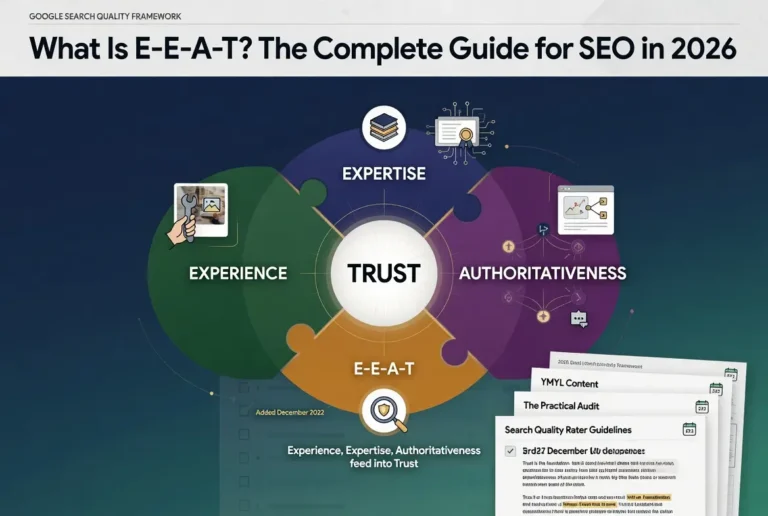

What is the Experience signal in E-E-A-T? Experience is the first letter of E-E-A-T, added to the framework in December 2022. It evaluates whether the writer has personally lived through the topic they are writing about, as distinct from whether they hold credentials in it.

What it is and who it is for: Experience is the first-hand contact dimension of Google’s quality framework. It matters most for content where the difference between someone who has done the thing and someone who has only researched it shapes whether the page is useful.

The rule: Experience is built through first-hand contact, not through credentials or research. A writer can have full Expertise without Experience, and a writer can have full Experience without formal Expertise. The framework treats them as separate signals.

What Experience Means in E-E-A-T

Experience is the first letter of E-E-A-T and the newest addition to the framework. It was added in December 2022 to capture a quality the previous framing missed. The framework before that point recognized credentialed knowledge through Expertise but had no operational way to recognize first-hand contact with a topic as a separate signal.

The operational definition of Experience is whether the writer has personally lived through the topic they are writing about. The signal is observable in content through specific markers: first-person photographs that show the actual product or process being used, anecdotes that include details only someone who had been there would include, hedging that reflects the writer’s actual confidence rather than the confidence the writing position implies, and awareness of the failure modes the writer expects to encounter, which only emerges from having encountered them.

The shorthand version: Experience is the question of whether the writer has done the thing, not just learned about the thing. The dimension is fundamentally about first-hand contact rather than research. A writer who has spent ten years cooking professionally writes about cooking with markers a researcher cannot fake. A writer who has lived with a chronic condition for fifteen years writes about it with markers a doctor without that experience cannot reproduce.

For the broader cluster context, the Pillar guide on E-E-A-T covers how Experience integrates with the other three signals. The sibling articles on Expertise, Authoritativeness, and Trust cover the other three letters.

Why Google Added the Extra E in 2022

The addition of Experience to the framework was deliberate. Google announced the change in December 2022, and the reasoning was direct. The web had filled with credentialed writers reciting research they had not lived through, and the framework needed a way to recognize first-hand contact with the topic as distinct from credentialed knowledge about the topic.

The likely trigger was the rise of AI-generated content and the broader pattern of content written by researchers rather than practitioners. Under the old framing, a board-certified cardiologist who had never seen a patient outside of training was treated the same as a cardiologist with thirty years of clinical practice, and a travel writer who had never visited a destination was treated the same as a writer who had spent six months living there. The 2022 addition closed that gap by separating the two evaluations.

The framing in the updated Quality Rater Guidelines was specific. Raters are instructed to consider “the extent to which the content creator has the necessary first-hand or life experience for the topic.” The word that matters there is “necessary.” The framework recognizes that some topics genuinely require first-hand experience for the content to be reliable, and other topics can be covered competently by researchers. The evaluation adjusts to the topic.

The practical implication for any site is that the topical mix on the site shapes how heavily Experience is weighted. A site covering medical research can survive on Expertise without much Experience because the topic genuinely is research-based. A site covering product reviews fails on Experience if the reviewers have not actually used the products. The framework treats these as different evaluations.

Experience vs Expertise: The Critical Distinction

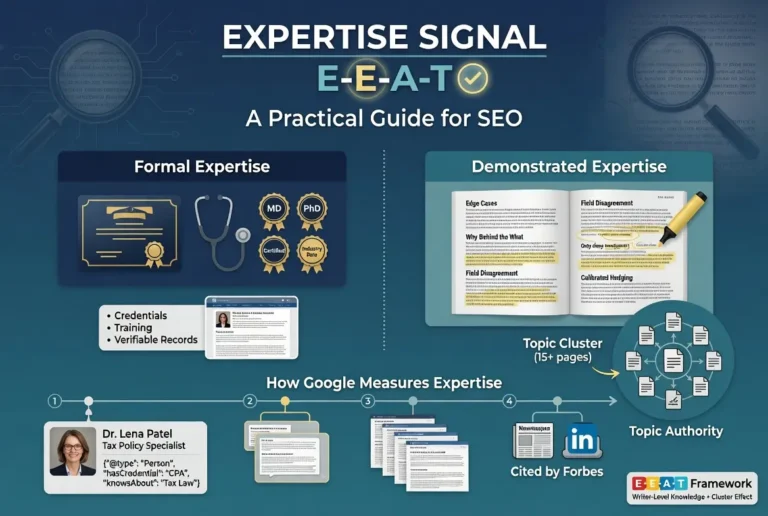

The distinction between Experience and Expertise is the one most discussions of E-E-A-T blur. Expertise is built through training, credentials, and demonstrated knowledge. Experience is built through first-hand contact. The two are related but not interchangeable, and the distinction is operationally important for any site trying to figure out where to invest.

The cardiology example clarifies the gap. A medical student who completes their training and begins writing patient education content has full Expertise from the moment they start. They have the credentials, the framework knowledge, and the demonstrated capacity to discuss the topic accurately. They do not yet have Experience. The Experience develops over the years of clinical practice, where they encounter the patterns that do not appear in textbooks and develop the calibrated confidence that comes from having been wrong and corrected by reality.

The reverse case is also instructive. A patient who has lived with a chronic condition for fifteen years has full Experience with the condition. They know the daily texture of it, the failure modes, the small things that work and the small things that do not. They do not have Expertise in the formal sense. The framework recognizes both as real, and a site that combines patient-Experience writing with credentialed-Expertise medical review produces a stronger profile than either signal alone.

The implication for content strategy is that Experience and Expertise should be developed in parallel rather than treated as substitutes. Sites that lean heavily on Experience without Expertise can be dismissed as anecdotal. Sites that lean heavily on Expertise without Experience can be dismissed as theoretical. The configurations that scale are the ones where both signals are present in proportion to the topic.

How Google Measures Experience

The same caveat that applies to the other three letters applies here. E-E-A-T is not a direct ranking factor. The Quality Rater Guidelines describe a framework used by human evaluators, whose ratings are used to evaluate and refine the algorithms over time rather than to rank individual pages. Google’s systems cannot directly measure Experience the way a human rater can. They measure proxies for Experience.

The proxies break into three categories.

The first is content-level signals: first-person photographs that show the writer interacting with the topic, anecdotes that include details only someone present would include (the time of year, the equipment used, the unexpected thing that happened), and hedging patterns that match real first-hand experience, where the writer is more confident about specific things they encountered and less confident about general claims that go beyond their direct contact.

The second is metadata signals: author bylines that link to verifiable real people with public records of having engaged with the topic, schema markup that surfaces the author’s relationship to the subject, and image EXIF data and other metadata that corroborate that photographs were taken at the time and place the content claims.
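As an illustration of the schema markup point, here is a minimal sketch that builds JSON-LD for an article and its author using Python’s standard library. The `Article` and `Person` types and the `name`, `url`, `sameAs`, and `knowsAbout` properties come from the schema.org vocabulary; the function name, the author, and every URL are hypothetical, and nothing here implies that any particular property is used by Google as an Experience signal.

```python
import json


def build_article_jsonld(headline, author_name, author_url, same_as, knows_about):
    """Return a JSON-LD string describing an article and its author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            # Author page on the publishing site (hypothetical URL).
            "url": author_url,
            # Other public profiles that identify the same person.
            "sameAs": list(same_as),
            # Topics the author has engaged with.
            "knowsAbout": list(knows_about),
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical author and URLs, for illustration only.
markup = build_article_jsonld(
    headline="Six Months With a Cast-Iron Skillet",
    author_name="Jane Example",
    author_url="https://example.com/authors/jane-example",
    same_as=["https://example.org/profiles/jane-example"],
    knows_about=["cast-iron cookware", "home cooking"],
)
print(markup)
```

The resulting string would typically be embedded in the page inside a `<script type="application/ld+json">` element.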

The third is site-level signals: whether the site has a track record of substantive coverage of the topic over time, indicating sustained engagement, and whether the broader pattern of content suggests genuine interest and continued investment in the subject area rather than scattered coverage that suggests superficial research.

The implication for operators is that all three categories matter and reinforce each other. A site that has strong content-level Experience signals but no metadata or site-level signals produces a weaker profile than a site where all three categories align. The configuration that scales is parallel investment across all three.

Demonstrating Experience in Content

The practical question for any operator is how to surface Experience in content that genuinely has it. The work breaks into several specific patterns that the framework recognizes consistently.

First-person photographs are the strongest single signal. A photo of the writer using the actual product, in the actual setting the content describes, is evidence that is hard to fake and that Google’s systems appear increasingly able to identify. Stock photography and generic product shots fail this signal. Original photographs that include incidental details, such as the writer’s hand, the workspace, or the time of day, pass it.

Specific anecdotes are the second strongest signal. A writer who can describe a particular moment of using the product or engaging with the topic, with details that only emerge from having been there, signals first-hand contact more reliably than any general claim about experience could. The anecdote does not have to be central to the article. A single sentence about the time you noticed something specific is often enough.

Calibrated hedging is the third signal. Real Experience produces confidence about the things you have personally encountered and uncertainty about the things you have not. Writing that asserts equally across all topics, or hedges equally across all topics, signals that the writer’s confidence is not calibrated to first-hand contact. Writing that confidently asserts specific details and explicitly hedges on general claims signals genuine Experience.

The pattern of failure modes is the fourth signal. A writer with real Experience in a topic knows where things go wrong because they have watched them go wrong. Including the specific failure modes you have personally encountered, in the specific contexts where they emerge, signals a depth of contact that researchers without Experience usually miss.

For the broader content production discipline that supports Experience signals, the Content discipline covers the operational architecture.

How Experience Fails: Common Patterns

The most common Experience failures are predictable. They show up across new sites, established sites that have grown careless, and sites that have moved to AI-generated content without adjusting the production process to maintain Experience signals.

The first common failure is the stock photography problem. The article describes first-hand experience with a product or process, and the images are stock photos that anyone could license. The mismatch is detectable both by quality raters and by image analysis at the algorithmic level. The fix is straightforward. Take real photos of the actual subject the content describes.

The second common failure is the generic anecdote. The article includes a story about using the product, but the story has no specific details that anchor it to a real moment. “I used this for months and loved it” passes nothing. “I used this for the first three months of my morning routine and noticed the handle wore down faster than the manufacturer described” passes the signal because the detail is specific enough to anchor the claim in first-hand contact.
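An editor could lint drafts for this failure with a rough heuristic. The sketch below counts crude markers of specificity (digits, spelled-out numbers, units of time) and flags an anecdote as generic when it has fewer than two. The word list, the threshold, and the function names are assumptions made for illustration; this is an editorial aid, not a description of how raters or Google’s systems evaluate content.

```python
import re

# Assumed markers of a concrete detail: a digit, a spelled-out number,
# or a unit/time-of-day word. Illustrative only, not exhaustive.
DETAIL_PATTERN = re.compile(
    r"\d"
    r"|\b(?:one|two|three|four|five|six|seven|eight|nine|ten)\b"
    r"|\b(?:day|week|month|year|morning|evening|winter|summer)s?\b",
    re.IGNORECASE,
)


def count_concrete_details(sentence):
    """Count rough markers of specificity in a sentence."""
    return sum(1 for _ in DETAIL_PATTERN.finditer(sentence))


def is_generic_anecdote(sentence, min_details=2):
    """Flag an anecdote that carries fewer than min_details markers."""
    return count_concrete_details(sentence) < min_details


generic = "I used this for months and loved it"
specific = (
    "I used this for the first three months of my morning routine and "
    "noticed the handle wore down faster than the manufacturer described"
)

print(is_generic_anecdote(generic))   # flagged as generic
print(is_generic_anecdote(specific))  # not flagged
```

A check like this only catches missing surface detail. It cannot tell whether a specific-sounding detail is true, so it complements an editor’s judgment and does not replace it.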

The third common failure is the calibration mismatch. The writer asserts confidence on every claim equally, including claims that go far beyond their first-hand contact with the topic. The pattern signals that the confidence is not calibrated to experience because real Experience produces variable confidence. The fix is to hedge explicitly on claims outside the writer’s direct experience and assert specifically on claims within it.

The fourth common failure is AI-generated content without Experience grounding. AI content tends to produce smooth, consistent prose without the texture of first-hand contact. The patterns are increasingly identifiable because they lack the specific details, calibrated hedging, and failure-mode awareness that real Experience produces. The fix is not to avoid AI tools entirely, but to ensure that AI-generated content is paired with real Experience input that grounds the writing in first-hand contact.

The fifth common failure is the topic without genuine Experience. The site publishes content on topics where no one on the team has first-hand contact, and the writing reflects that gap. The fix is operational rather than tactical. Either bring in writers who have the relevant Experience, or focus the content on topics where the team genuinely has first-hand contact.

Building Experience From Zero

The hardest case for Experience is the new site or new writer with no prior track record on the topic. Several Experience signals depend on having actually engaged with the subject before writing about it, and that engagement cannot be shortcut. The question becomes how to build genuine Experience deliberately.

Stage one is the substantive engagement. Before writing about a topic at depth, the operator needs to have actually engaged with it. Used the products. Practiced the techniques. Spent time in the contexts the content describes. The investment is upfront and unglamorous, but without it the Experience signals will not develop because the underlying contact is absent.

Stage two is the documentation discipline. Real Experience generates artifacts as a byproduct of the engagement. Photographs taken during the work. Notes on what surprised you. Records of failure modes encountered. Operators who plan their Experience-building deliberately also plan to capture the artifacts that will surface in the content later.
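One way to make the documentation discipline concrete is a simple log of artifacts captured during the work. The sketch below is a hypothetical schema: the class names, the three `kind` values, and the sample entries are all invented for illustration, and a spreadsheet or notes app would serve the same purpose.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class FieldNote:
    """One artifact captured while engaging with the topic (hypothetical schema)."""
    captured_on: date
    kind: str            # "photo", "surprise", or "failure"
    summary: str
    file_path: str = ""  # optional path to a photo or scan


@dataclass
class ExperienceLog:
    """Running record of artifacts for a single topic."""
    topic: str
    notes: list = field(default_factory=list)

    def add(self, note):
        self.notes.append(note)

    def by_kind(self, kind):
        """Return all notes of one kind, in the order they were added."""
        return [n for n in self.notes if n.kind == kind]


# Sample entries, invented for illustration.
log = ExperienceLog(topic="cast-iron skillet")
log.add(FieldNote(date(2024, 3, 2), "photo",
                  "Skillet after first seasoning", "photos/skillet-01.jpg"))
log.add(FieldNote(date(2024, 3, 9), "failure",
                  "Seasoning flaked after cooking tomato sauce"))
log.add(FieldNote(date(2024, 3, 16), "surprise",
                  "Handle stayed hot far longer than expected"))

for note in log.by_kind("failure"):
    print(note.captured_on.isoformat(), note.summary)
```

When it is time to write, the log can be filtered by kind to pull the photographs, surprises, and failure modes that belong in the article.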

Stage three is the production discipline. Surfacing Experience in writing is its own skill. Writers who have genuine Experience but produce generic content fail the framework regardless of how much first-hand contact they have. The production discipline is about translating the engagement into content markers that the framework recognizes: first-person photographs, specific anecdotes, calibrated hedging, and failure-mode awareness.

Stage four is patience. Experience-based content develops more slowly than research-based content because the engagement that grounds it cannot be rushed. Operators who succeed at Experience over multi-year time horizons are the ones who understood from the beginning that the work could not be skipped and built the content cadence accordingly.

Verdict

Experience is the first letter of E-E-A-T and the newest addition to the framework. It evaluates whether the writer has personally lived through the topic they are writing about, as distinct from whether they hold credentials in it. The signal is built through first-hand contact rather than research, and it is operationally distinct from Expertise even though the two are often blurred in casual discussion.

The proxy signals Google’s systems use include content-level markers like first-person photographs and specific anecdotes, metadata signals like verifiable author bylines, and site-level signals like sustained engagement with topics over time. All three categories matter, and Experience profiles built on only one category are fragile.

The practical discipline for surfacing Experience in content is to use first-person photographs, include specific anecdotes that anchor claims in real moments, calibrate hedging to actual confidence, and surface the failure modes that only emerge from first-hand contact. Sites that hold this discipline produce content the framework recognizes consistently.

For the integration of Experience with the other three letters as a system, the Pillar piece ties them together. The sibling article on Expertise covers the second letter and the distinction between formal and demonstrated knowledge. The article on Authoritativeness covers the third letter and external recognition. The article on Trust covers the foundation Google has named the most important factor.

Frequently Asked Questions

What does Experience mean in E-E-A-T?

Experience refers to whether the writer has personally lived through the topic they are writing about. It is the first letter of E-E-A-T and was added to the framework in December 2022. The signal is built through first-hand contact rather than through credentials or research.

When did Google add Experience to E-E-A-T?

Google announced the addition of Experience to the framework in December 2022, expanding what had been E-A-T (Expertise, Authoritativeness, Trustworthiness) into E-E-A-T. The change was made to recognize first-hand contact with topics as a distinct quality signal separate from credentialed knowledge.

What is the difference between Experience and Expertise?

Experience is built through first-hand contact with a topic. Expertise is built through training, credentials, and demonstrated knowledge. A writer can have full Expertise without Experience, and a writer can have full Experience without formal Expertise. The framework treats them as separate signals because they come from different sources.

Is Experience a direct ranking factor?

No. E-E-A-T as a whole is not a direct ranking factor. It is a framework used by human Quality Raters, whose ratings help Google evaluate and refine its ranking systems over time. The proxy signals Google’s algorithms use to estimate Experience can still influence rankings indirectly.

How do I demonstrate Experience in my content?

The strongest signals are first-person photographs of the writer engaging with the actual subject, specific anecdotes that include details only someone present would include, calibrated hedging that reflects real confidence levels, and surfacing of failure modes that only emerge from first-hand contact.

Can AI-generated content pass the Experience signal?

AI-generated content struggles with Experience signals because it lacks the texture of first-hand contact. The patterns are increasingly identifiable because they lack the specific details, calibrated hedging, and failure-mode awareness that real Experience produces. AI tools can be useful when paired with real Experience input that grounds the writing.

What is the most common Experience mistake?

The stock photography problem. Articles describe first-hand experience with a product or process, but the images are stock photos that anyone could license. The mismatch is detectable both by quality raters and by image analysis. The fix is to take real photos of the actual subject the content describes.

Does every topic require Experience signals?

No. Google’s framework recognizes that some topics genuinely require first-hand experience while others can be covered competently by researchers. The evaluation adjusts to the topic. Medical research content may pass on Expertise alone. Product review content fails without genuine Experience.