What Is the Expertise Signal in E-E-A-T? A Practical Guide for SEO

AI Summary

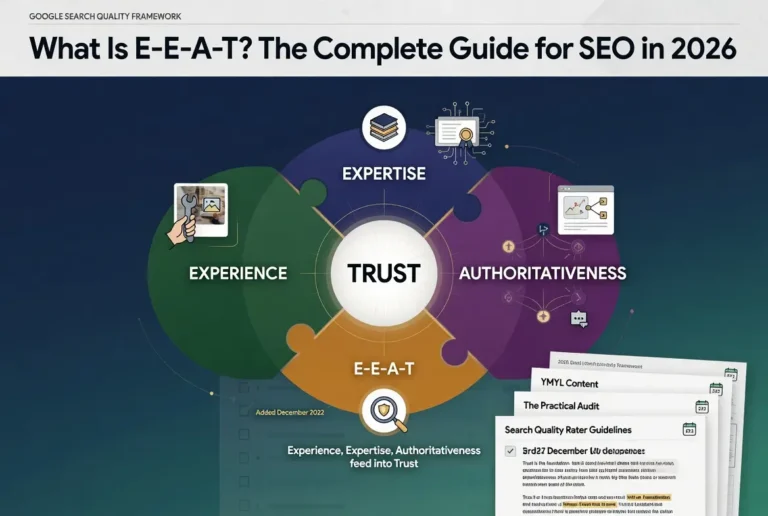

What is the Expertise signal in E-E-A-T? Expertise is the second letter of E-E-A-T. It evaluates whether the writer demonstrates the knowledge required to cover the topic accurately, through credentials, demonstrated knowledge in the writing itself, or both.

What it is and who it is for: Expertise is the knowledge dimension of Google’s quality framework. It matters most for content where the difference between someone who knows the topic deeply and someone who has only researched it shapes whether the page is reliable.

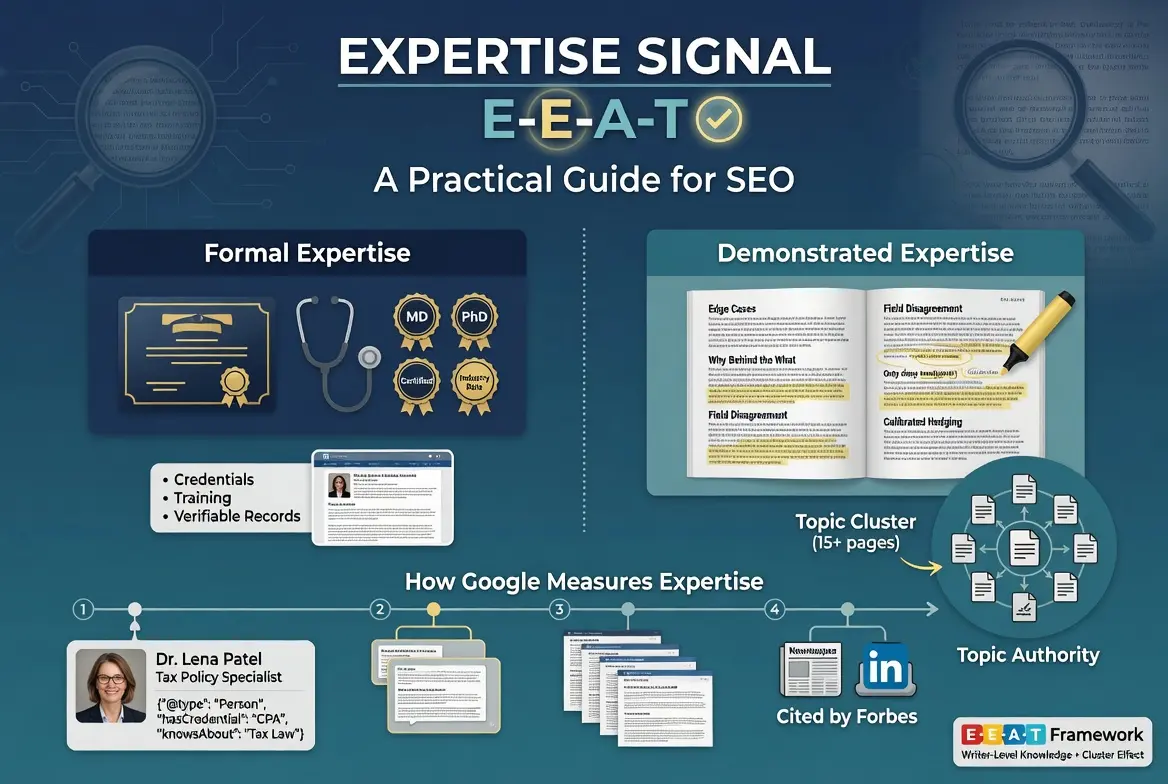

The rule: Expertise can be formal (credentials, training, certifications) or demonstrated (knowledge surfaced through writing that engages with edge cases, the why behind the what, and substantive depth). The framework recognizes both, and the strongest profiles have both in proportion to the topic.

What Expertise Means in E-E-A-T

Expertise is the second letter of E-E-A-T and the one most commonly misunderstood as being purely about formal credentials. Google’s framework recognizes both formal Expertise and demonstrated Expertise, and the framework treats them differently depending on the topic and the writing context.

The operational definition of Expertise is whether the writer demonstrates the knowledge required to cover the topic accurately. The signal lives at the writer level rather than at the site level, and it can be evaluated through credentials, through the writing itself, or through both. The Search Quality Rater Guidelines instruct raters to consider “the extent to which the content creator has the necessary knowledge or skill for the topic.”

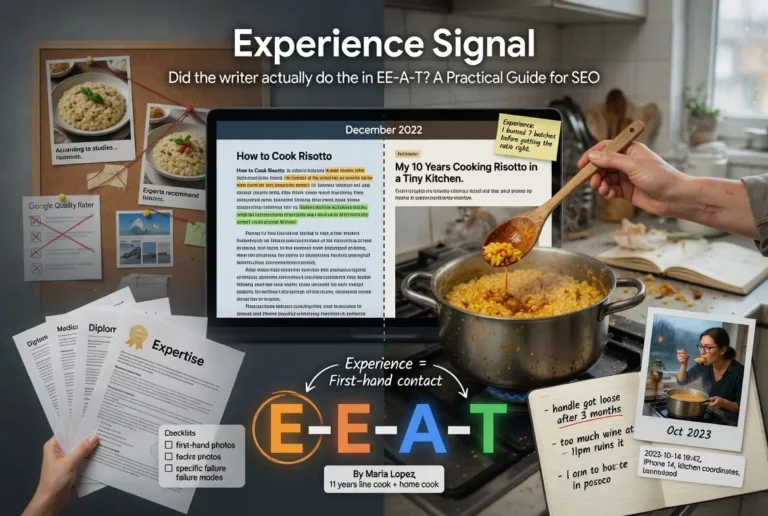

The shorthand version: Expertise is the question of whether the writer knows the topic, not whether they have lived through it. The dimension is fundamentally about knowledge rather than experience. A researcher who has spent years studying a subject without first-hand contact has Expertise. A practitioner who has lived through a subject without formal training has Experience. The strongest profiles combine both, and the framework treats them as separate signals because they come from different sources.

For the broader cluster context, the Pillar guide on E-E-A-T covers how Expertise integrates with the other three signals. The sibling article on Experience covers the distinction between Expertise and first-hand contact, and the articles on Authoritativeness and Trust cover the third and fourth letters.

Formal vs Demonstrated Expertise

The framework recognizes two forms of Expertise, and the distinction between them is operationally important for any site trying to figure out where to invest.

Formal Expertise is the credentials version. It covers medical degrees, professional certifications, academic appointments, and recognized industry positions. The signal is verifiable through external records and is the easiest to evaluate because the credential either exists or it does not. The framework values formal Expertise, particularly for topics where formal training is the recognized path to competence.

Demonstrated Expertise is the writing-itself version. The content shows knowledge through how it handles edge cases, how it explains the why behind the what, how it engages with disagreement in the field, and how it surfaces details that only someone with deep working knowledge would surface. The signal is observable in the content directly and does not require external verification. The framework values demonstrated Expertise even when formal credentials are absent, particularly for topics where the recognized path to competence is not credentialing-based.

The two forms are not interchangeable, but they reinforce each other. A writer with formal credentials who cannot demonstrate the knowledge in their writing has a weaker Expertise profile than the credentials suggest. A writer with no formal credentials who demonstrates the knowledge consistently across substantive content has a real Expertise profile despite the absence of a credential. The strongest configurations have both, and the framework treats them as parallel inputs into the broader Expertise judgment.

The implication for content strategy is that operators should not assume formal credentials alone produce the Expertise signal. The credential opens the door. The writing has to walk through it. Sites that publish credentialed content where the writing fails to demonstrate the knowledge implied by the credentials produce profiles that look strong on paper but fail the framework when evaluated.

Topic Authority and the Cluster Effect

Expertise compounds at the topical level through what Google has described as Topic Authority. The mechanism is that a site demonstrating Expertise across a substantive cluster of related topics builds a stronger Expertise signal than a site demonstrating Expertise on a single page or a scattered handful of topics.

The reasoning is operational. A single page can be written by anyone who researched the topic adequately for one article. A cluster of fifteen substantive pages on related aspects of the same topic, each engaging with edge cases and demonstrating depth, signals sustained engagement that researchers without genuine knowledge struggle to produce.

The cluster effect connects directly to the broader concept of Authoritativeness, which is covered in the sibling article on Authoritativeness. The two signals work together. Expertise builds at the writer level. Topic Authority builds at the site level through accumulated Expertise across content. Authoritativeness builds at the recognition level through external validation of the Expertise the site has demonstrated. Each layer reinforces the others.

The practical implication for cluster-based content strategy is that Expertise investment is most efficient when it concentrates on topics where the writer or site can build durable depth rather than scattered surface coverage. Twenty articles on five different topics produce less Expertise signal than twenty articles on one focused topic, because the cluster effect amplifies the signal in ways scattered coverage cannot.
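The twenty-articles comparison can be made concrete with a rough concentration measure. This is only an illustrative sketch: `topic_concentration` is a hypothetical helper, the topic labels are placeholders, and the number it returns is not a metric Google publishes or is known to use.

```python
from collections import Counter


def topic_concentration(article_topics):
    """Share of articles that fall in the single most-covered topic (0.0 to 1.0)."""
    if not article_topics:
        return 0.0
    counts = Counter(article_topics)
    largest_topic_size = counts.most_common(1)[0][1]
    return largest_topic_size / len(article_topics)


# Twenty articles spread evenly over five topics (placeholder labels).
scattered = ["topic-a", "topic-b", "topic-c", "topic-d", "topic-e"] * 4
# Twenty articles on one focused topic.
focused = ["topic-a"] * 20

print(topic_concentration(scattered))
print(topic_concentration(focused))
```

A site inventory tagged by primary topic is enough input for this kind of check. It only describes how coverage is distributed and says nothing about the depth of each piece.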

For the structural side of how content depth supports Expertise, the Content discipline covers the production standard.

How Google Measures Expertise

The same caveat that applies to the other three letters applies here. E-E-A-T is not a direct ranking factor. The framework lives in the Quality Rater Guidelines and shapes the rater evaluations that Google uses to assess and refine its ranking systems over time. Google’s algorithms cannot directly measure Expertise the way a human rater can. They measure proxies for Expertise.

The proxies break into four categories.

The first is author-level signals. Named bylines that link to verifiable real people. Author bio pages that surface qualifications, training, and relevant experience. Schema markup that makes the author information machine-readable through the Person and Organization types. Cross-references to the author across other authoritative sites, which Google’s systems use to corroborate the claimed expertise.

The second is content-level signals. Engagement with edge cases that researchers without expertise typically miss. Explanation of the why behind the what, indicating understanding of underlying principles rather than surface knowledge. Engagement with disagreement in the field, which signals familiarity with the actual debate rather than the popularized version of it. Calibrated hedging where the writer is more confident about claims within their direct knowledge and less confident about claims at the edges.

The third is site-level signals. Topical depth across a substantive cluster of related content, indicating sustained engagement with the subject area. Consistency of authorship or editorial standard across the cluster, indicating a genuine knowledge base rather than scattered freelance contributions. Update patterns that maintain the cluster over time as the topic evolves.

The fourth is external corroboration signals. Citations from other authoritative sources in the topic area. Mentions in journalism that treat the writer or site as a credible source. Inclusion in industry conversations that recognized voices in the field also participate in. The corroboration signals connect Expertise to Authoritativeness, which is part of why the four letters reinforce each other in the official framework.

The implication for operators is that all four categories matter and they reinforce each other. A site with strong author-level Expertise but no site-level depth produces a weaker profile than a site where all four categories align.

Author Bios and Schema Markup

The author bio is where formal Expertise gets surfaced operationally, and Schema markup is where the bio information becomes machine-readable. Sites that publish substantive content under named bylines with verifiable credential links produce a stronger Expertise signal than sites that publish under generic admin accounts or hide the authorship layer entirely.

The baseline author bio includes several specific elements. The author’s full name, displayed prominently on every article they write. A bio paragraph that surfaces relevant qualifications, training, and experience for the topics they cover. A photograph of the actual person, which is itself a Trust signal that the byline represents a real human. Links to the author’s verifiable presence on platforms appropriate to the topic — academic profiles, professional registries, industry publications, social platforms where they have substantive engagement with the subject area.

The Schema.org Person type is the technical layer that makes the bio information machine-readable. The properties that matter most for Expertise signaling include name, jobTitle, alumniOf, hasCredential, sameAs (linking to authoritative profiles elsewhere on the web), and knowsAbout (specifying the topics the author is qualified to cover). The Credibility discipline covers the operational implementation of these schemas.
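As a minimal sketch of what that markup can look like, the Python below assembles a Person object with the six properties named above and wraps it in a JSON-LD script tag. The helper `build_person_jsonld`, the author, the institution, and every URL are hypothetical placeholders. The nested types chosen for `alumniOf` and `hasCredential` are one reasonable option among several that Schema.org allows.

```python
import json


def build_person_jsonld(name, job_title, alumni_of, credential, same_as, knows_about):
    """Return a Schema.org Person object as a JSON-LD-ready dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "alumniOf": {"@type": "CollegeOrUniversity", "name": alumni_of},
        "hasCredential": {
            "@type": "EducationalOccupationalCredential",
            "name": credential,
        },
        "sameAs": list(same_as),          # profiles elsewhere on the web
        "knowsAbout": list(knows_about),  # topics the author covers
    }


# Hypothetical author: every value below is a placeholder.
author = build_person_jsonld(
    name="Jane Example",
    job_title="Registered Dietitian",
    alumni_of="Example University",
    credential="Registered Dietitian Nutritionist",
    same_as=[
        "https://www.linkedin.com/in/jane-example",
        "https://example.edu/faculty/jane-example",
    ],
    knows_about=["clinical nutrition", "sports nutrition"],
)

# JSON-LD is usually embedded in the page inside a script tag of this type.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(author, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Generating the markup from one function, rather than hand-writing it per page, keeps the property set identical wherever the author appears.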

The implementation pattern that scales is the consistent application of author bylines and schema across every article on the site, not just the flagship pieces. Sites that surface authorship on flagship content but hide it on long-tail content produce inconsistent profiles that quality raters and algorithmic proxies both flag.
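One way to spot that inconsistency is to audit the article markup for pages that lack a named Person author. The sketch below assumes each article's JSON-LD has already been extracted into a Python dict. The function names and the sample URLs are hypothetical.

```python
def has_named_person_author(article):
    """True if the article's JSON-LD has an author of type Person with a non-empty name."""
    authors = article.get("author") or []
    if isinstance(authors, dict):
        authors = [authors]  # a single author may be given as one object
    return any(
        isinstance(a, dict)
        and a.get("@type") == "Person"
        and bool(str(a.get("name", "")).strip())
        for a in authors
    )


def author_markup_gaps(articles):
    """Return the URLs of articles that lack a named Person author."""
    return [
        a.get("url", "<no url>")
        for a in articles
        if not has_named_person_author(a)
    ]


# Placeholder inventory: one flagship page with markup, two long-tail pages without.
articles = [
    {
        "@type": "Article",
        "url": "https://example.com/flagship",
        "author": {"@type": "Person", "name": "Jane Example"},
    },
    {"@type": "Article", "url": "https://example.com/long-tail-1"},
    {
        "@type": "Article",
        "url": "https://example.com/long-tail-2",
        "author": {"@type": "Organization", "name": "Example Media"},
    },
]

gaps = author_markup_gaps(articles)
print(gaps)
```

The check treats an Organization-only author as a gap because the concern here is the writer-level signal. A site that intentionally publishes under an organization byline would want to relax that rule.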

One detail that operators frequently miss is the Person schema’s sameAs property. It is arguably the strongest cross-corroboration signal because it explicitly links the author entity to authoritative profiles on other recognized platforms. Linking the author to their LinkedIn profile, their academic profile, their Wikipedia entry where one exists, and their published books on platforms like Amazon creates an entity graph that Google’s systems can use to verify the claimed Expertise.

How Expertise Fails: Common Patterns

The most common Expertise failures are predictable. They show up across new sites, established sites that have grown careless about the authorship layer, and sites that have moved to AI-generated content without preserving the human Expertise grounding.

The first common failure is the missing or generic byline. The site publishes under “admin,” “editor,” or no byline at all. The Expertise signal at the author level is absent because there is no author the framework can evaluate. The fix is operational. Every substantive article needs a named byline that links to a real bio page.
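A simple screen for this failure is to compare each byline against a list of generic labels. In the sketch below, "admin", "editor", and the empty byline come from the pattern described above. The other entries in `GENERIC_BYLINES` are assumptions added for illustration, and the helper name is hypothetical.

```python
# "admin", "editor", and "" reflect the failure described above;
# the remaining labels are illustrative assumptions.
GENERIC_BYLINES = {"", "admin", "administrator", "editor", "staff", "team"}


def is_generic_byline(byline):
    """True if the byline is missing or matches a generic account label."""
    normalized = (byline or "").strip().lower()
    return normalized in GENERIC_BYLINES


for byline in ["Admin", None, "  editor ", "Jane Example"]:
    print(repr(byline), is_generic_byline(byline))
```

A match only flags the page for review. Whether the named byline links to a real bio page still has to be checked separately.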

The second common failure is the credentialed bio without demonstrated knowledge. The author has impressive credentials in the bio, but the writing itself does not surface the knowledge those credentials imply. Generic explanations of concepts the credential should give the author depth on. Surface coverage of debates the credential should give the author position on. The mismatch is detectable both by quality raters and by content-level analysis.

The third common failure is the topic-credential mismatch. The author has formal credentials, but the credentials are in a different field than the topic being covered. A nutritionist writing about taxation does not produce Expertise signals on tax topics regardless of how strong their nutrition credentials are. The framework evaluates Expertise relative to the topic, not in absolute terms.
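The mismatch can be surfaced mechanically by comparing an article's topics with the author's declared `knowsAbout` values. This is a deliberately crude sketch: it uses exact, case-insensitive string matching, the function name and topic labels are hypothetical, and a real check would need a topic taxonomy rather than literal strings.

```python
def topics_outside_expertise(article_topics, knows_about):
    """Return the article topics that do not appear in the author's knowsAbout list."""
    known = {topic.strip().lower() for topic in knows_about}
    return [
        topic
        for topic in article_topics
        if topic.strip().lower() not in known
    ]


# The nutritionist-on-taxation case from the paragraph above (placeholder labels).
author_knows_about = ["Nutrition", "Dietetics"]

print(topics_outside_expertise(["taxation"], author_knows_about))
print(topics_outside_expertise(["nutrition"], author_knows_about))
```

An empty result means every topic on the article is one the author has declared. It does not show that the writing demonstrates the knowledge.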

The fourth common failure is the AI-generated content without Expertise grounding. AI tools can produce content that mimics surface features of expertise — vocabulary, structure, the appearance of engagement with the topic — without the underlying knowledge that makes the writing reliable. The patterns are increasingly identifiable because AI-generated content struggles with edge cases, fails to engage substantively with disagreement, and produces calibration that does not match real expertise.

The fifth common failure is the topical scatter. The site publishes across too many unrelated topics for any single author or editorial team to genuinely have Expertise across all of them. The pattern signals freelance content production rather than genuine knowledge base, and the cluster effect that amplifies Expertise signals does not apply because there is no cluster.

Building Expertise Surface Area

The hardest case for Expertise is the new site or new writer with no prior public track record on the topic. Expertise signals build over time through accumulated content and accumulated external corroboration, and the trajectory cannot be shortcut. The question becomes how to build the surface area that earns Expertise recognition deliberately.

The work breaks into four stages.

Stage one is the foundation. Decide which topics the writer or site genuinely has Expertise in, and stop publishing in topics outside that scope. The narrowing is the hardest part for new sites because the temptation is to chase volume. Operators who hold the discipline produce Expertise profiles that scale. Operators who scatter across unrelated topics produce profiles that fail the framework regardless of how much content they publish.

Stage two is the cluster construction. Build out a substantive cluster of related content within the chosen topic, with each piece engaging with edge cases and demonstrating depth. The cluster effect amplifies Expertise signals once it reaches enough mass to signal sustained engagement, and the threshold is lower than most operators assume. Ten substantive pieces on a focused topic can outperform fifty scattered pieces on unrelated topics.

Stage three is the corroboration layer. As the cluster matures, build the external corroboration that the framework recognizes. Citations from other authoritative sources in the topic area. Mentions in journalism. Inclusion in industry conversations. Schema markup that links the author entity to authoritative profiles elsewhere on the web. The corroboration signals connect Expertise to Authoritativeness and produce the compound effect that mature Expertise profiles depend on.

Stage four is the maintenance. Expertise erodes when content stops being updated as the topic evolves. The discipline of keeping the cluster current is what separates Expertise profiles that scale from profiles that decay. Operators who treat content as a one-time investment produce decaying Expertise. Operators who treat content as ongoing maintenance produce Expertise that compounds.
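The maintenance discipline can be supported by a periodic staleness report. The sketch below assumes each article record carries a `url` and an ISO-formatted `dateModified` value, as in Article markup. The 365-day threshold, the function name, and the sample records are arbitrary assumptions, and the right interval depends on how quickly the topic changes.

```python
from datetime import date


def stale_articles(articles, today, max_age_days=365):
    """Return URLs of articles whose dateModified is older than max_age_days."""
    stale = []
    for article in articles:
        modified = date.fromisoformat(article["dateModified"])
        if (today - modified).days > max_age_days:
            stale.append(article["url"])
    return stale


# Placeholder cluster inventory.
cluster = [
    {"url": "https://example.com/guide-a", "dateModified": "2024-06-01"},
    {"url": "https://example.com/guide-b", "dateModified": "2023-01-15"},
]

report = stale_articles(cluster, today=date(2025, 1, 1))
print(report)
```

Passing `today` explicitly keeps the report reproducible. In a scheduled job it would typically be `date.today()`.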

Verdict

Expertise is the second letter of E-E-A-T and the dimension that asks whether the writer demonstrates the knowledge required to cover the topic accurately. The framework recognizes both formal Expertise through credentials and demonstrated Expertise through the writing itself, and the strongest profiles have both in proportion to the topic.

The proxy signals Google’s systems use include author-level signals through bylines and schema markup, content-level signals through engagement with edge cases and calibrated hedging, site-level signals through topical depth and cluster construction, and external corroboration through citations and mentions in authoritative coverage. All four reinforce each other, and Expertise built on only one category is fragile.

The cluster effect is the operationally important part for content strategy. Twenty pieces on one focused topic outperform fifty pieces scattered across unrelated topics because the framework amplifies Expertise signals when they cluster around sustained engagement with a subject area. Sites that hold the discipline of topical focus produce Expertise profiles that scale.

The practical sequence for building Expertise from zero is the four-stage progression. Choose the topics where genuine Expertise exists. Build the substantive content cluster. Layer in the external corroboration as the cluster matures. Hold the maintenance discipline as a permanent commitment.

For the integration of Expertise with the other three letters as a system, the Pillar piece ties them together. The sibling articles on Experience, Authoritativeness, and Trust cover the other three signals.

Frequently Asked Questions

What does Expertise mean in E-E-A-T?

Expertise refers to whether the writer demonstrates the knowledge required to cover the topic accurately. It is the second letter of E-E-A-T and can be built through formal credentials, demonstrated knowledge in the writing itself, or both. The framework treats Expertise as a writer-level signal that compounds with site-level Topic Authority over time.

What is the difference between formal and demonstrated Expertise?

Formal Expertise is built through credentials, training, and certifications that are verifiable through external records. Demonstrated Expertise is built through writing that engages with edge cases, explains the why behind the what, and surfaces details only someone with deep knowledge would surface. The framework recognizes both, and the strongest profiles have both in proportion to the topic.

Is Expertise a direct ranking factor?

No. E-E-A-T as a whole is not a direct ranking factor. It is a framework used by human Quality Raters, whose ratings Google uses to assess and refine its ranking systems over time. The proxy signals Google’s algorithms use to estimate Expertise do influence rankings through other mechanisms.

How does Topic Authority relate to Expertise?

Topic Authority is the site-level signal that emerges when a site demonstrates Expertise across a substantive cluster of related content. The cluster effect amplifies Expertise signals because sustained engagement with a focused topic signals knowledge that scattered coverage cannot produce. Twenty pieces on one focused topic outperform fifty pieces across unrelated topics.

What schema markup helps demonstrate Expertise?

The Schema.org Person type is the relevant markup, with properties including name, jobTitle, alumniOf, hasCredential, sameAs (linking to authoritative profiles elsewhere on the web), and knowsAbout (specifying the topics the author is qualified to cover). The sameAs property is particularly important because it links the author entity to authoritative profiles that Google’s systems use for cross-corroboration.

Can a site have Expertise without named bylines?

The Expertise signal at the author level is weaker without named bylines because there is no author the framework can evaluate. Sites that publish under generic accounts or hide authorship produce profiles that fail one of the primary Expertise signals regardless of the underlying knowledge of the writers.

What is the most common Expertise mistake?

The credentialed bio without demonstrated knowledge. Authors have impressive credentials in their bio, but the writing itself does not surface the knowledge those credentials imply. The mismatch is detectable both by quality raters and by content-level analysis. The fix is to ensure the writing engages substantively with the topic at the depth the credentials suggest.

Can AI-generated content pass the Expertise signal?

AI-generated content struggles with Expertise signals because it lacks the texture of genuine knowledge. The patterns are increasingly identifiable. AI content fails on edge cases, struggles with substantive engagement with disagreement in the field, and produces calibration that does not match real expertise. AI tools can be useful when paired with real Expertise input that grounds the writing.