The AI Content Editorial Layer: Turning Drafts Into Publishable Articles

AI Summary

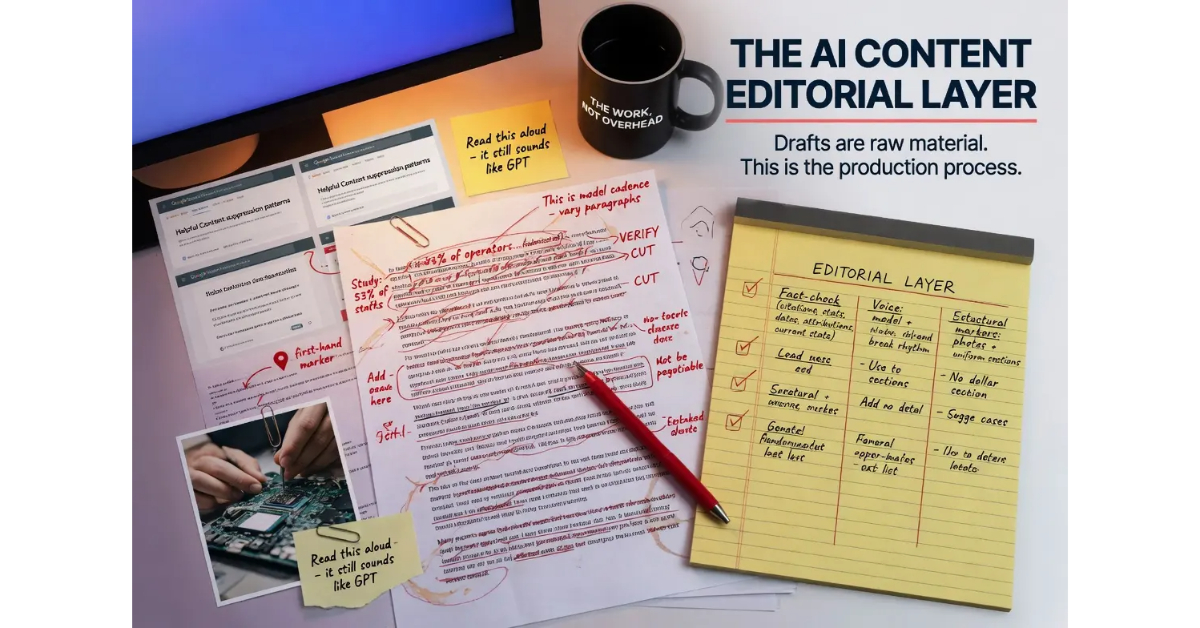

What is the AI content editorial layer? The editorial layer is the operational discipline applied to AI drafts to turn them into publishable content. The layer covers fact-checking, voice calibration, structural editing, and the addition of first-hand experience markers.

What it is and who it is for: The editorial layer matters for any operator using AI for content production. The layer is what separates content that ranks in competitive contexts from content that gets suppressed by the helpful content framework.

The rule: The editorial layer is not optional. Operators who skip the layer publish AI fingerprint output at scale, accumulate inaccuracy across the content base, and trigger the suppression patterns the helpful content framework was designed to produce.

Table of Contents

Why the Editorial Layer Exists

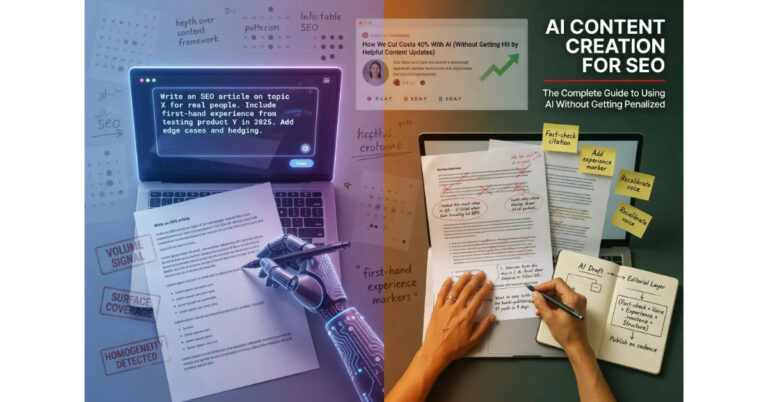

The editorial layer exists because AI drafts are not finished content. The drafts are starting material that requires human judgment to turn into publishable articles, and the judgment cannot be automated because the dimensions the layer addresses are dimensions models cannot evaluate from inside their own output. Operators who treat drafts as finished products produce content that fails on the dimensions that determine ranking outcomes, and the failure is operational rather than philosophical.

The four dimensions the layer covers are fact-checking, voice calibration, structural editing, and the addition of first-hand experience markers. Each dimension addresses a specific category of failure mode that AI drafts exhibit by default, and skipping any of the four produces predictable degradation in the published output. The four dimensions are not independent. They reinforce each other, and the layer works as a system rather than as a checklist of separable tasks.

The honest read on the editorial layer is that it takes substantial time, particularly when operators first start applying it. The time decreases as operators build the muscle for the work, but the layer never reduces to zero time investment. Operators who frame the time investment as overhead miss the framing that actually maps onto the work. The time is not overhead. The time is the work. The drafting is what enables the editorial layer to scale; the editorial layer is what produces ranking content.

The shorthand version: drafts are raw material, and the editorial layer is the production process that turns raw material into product. Operators who skip the production process publish raw material and treat it as product, which is the failure mode most AI content publishing exhibits.

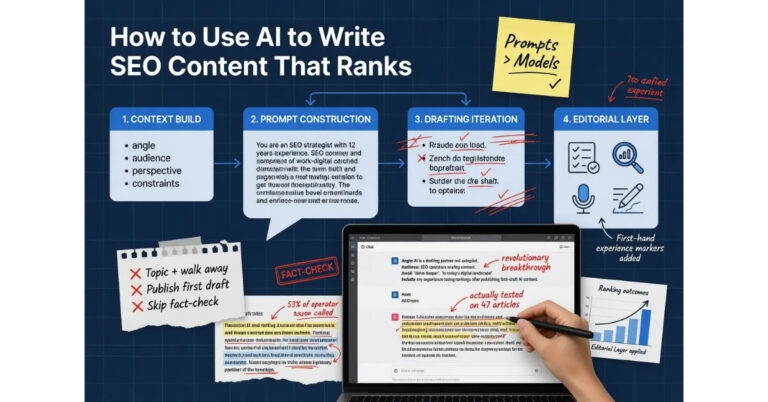

For the broader context on how the editorial layer fits into AI content creation, the Pillar guide on AI Content Creation covers the full framework. The sibling articles on How to Use AI to Write SEO Content, AI Content vs Human Content, and AI Content at Scale cover the other dimensions.

Fact-Checking the Draft

Fact-checking is the dimension where the consequences of skipping the work are most severe. Models confidently generate claims at confidence levels that are uncorrelated with accuracy, and operators who publish AI drafts without verification accumulate inaccuracy across the content base. The inaccuracy degrades the site’s Trust signal over time, and the degradation compounds as more articles get published with the same lack of verification.

The fact-checking workflow that scales has five categories of claims to verify. The first category is citations. Models routinely generate references to studies, papers, and authorities that do not exist or that the model has misattributed. The fabrications read plausible because the model knows what citations should look like, and operators who do not verify accept fabricated citations as real. The verification step is to check every citation against the actual source. If the source does not exist or does not contain the cited claim, the citation gets cut.

The second category is statistics. Models generate specific numbers with confidence even when the actual numbers are different from what the model produced. A claim that “fifty-three percent of operators report” something is fabricated if no actual study reported that number, regardless of how plausible the fifty-three percent figure sounds. The verification step is to check every specific statistic against an actual source. Statistics that cannot be verified get either replaced with verified figures or rewritten as general framing.

The third category is attributions. Models attribute quotes, frameworks, and positions to people who did not say or hold them. The attributions read plausible because the model knows the people exist and writes about adjacent topics. The verification step is to check every attribution against the actual source where the attributed material appeared. Attributions that cannot be verified get cut.

The fourth category is dates. Models generate specific dates for events, product releases, policy changes, and developments, and the dates are sometimes wrong. The verification step is to check every specific date against authoritative sources. Wrong dates get corrected; dates that cannot be verified get either replaced with verified dates or rewritten as general framing.

The fifth category is current state claims. Models trained on data with a knowledge cutoff produce confident statements about the present that may have changed since the cutoff. Google’s policies, platform features, industry developments, product specifications, and many other categories of information change frequently enough that confident model output about current state needs verification against current sources. The verification step is the standard one: check the claim, correct the wrong claims, rewrite the unverifiable claims as general framing.

The fact-checking workflow that scales is to keep a running list of every specific claim the article makes that requires verification, work through the list before publishing, and either verify each claim or modify the article so the claim is no longer present. The discipline takes time, and the time is non-negotiable for content that needs to maintain Trust signal over an extended publishing horizon.

For more on the broader credibility infrastructure that supports the fact-checking discipline, the Credibility discipline covers the operational architecture.

Voice Calibration in Depth

Voice calibration is the dimension that determines whether the published article reads as the operator’s writing or as generic model output. The calibration is not cosmetic, despite operators sometimes dismissing it that way. The voice patterns that mark AI output are exactly the patterns Google’s systems and human readers both use to identify content that was produced rather than written, and the patterns are detectable at the article level and at the site level.

The default model voice has several recognizable features that the calibration breaks. The cadence falls into predictable patterns where every paragraph runs roughly the same length. The vocabulary leans on a small set of transition phrases that appear repeatedly across articles. The sentence structure alternates between short and long in ways that feel smooth on first pass and templated on second. The tone is uniformly even regardless of what the content is about. The rhythm is metronomic where natural writing varies based on what the content needs.

The calibration work breaks each of these patterns. The operator varies paragraph lengths to match how the operator actually writes. Some paragraphs run two sentences. Some run nine. The variation is not arbitrary; it reflects the substance of what each paragraph needs to convey. The operator swaps the model’s default transitions for the specific phrases the operator uses. The substitution is not blanket replacement; it is the natural consequence of reading the draft as if the operator had written it and rewriting the parts that read wrong.

The operator restructures sentences to break the alternating short-long pattern. Sometimes the operator includes deliberate fragments. Sometimes longer constructions that the model would not have generated. The variation produces rhythm that matches how the operator’s writing actually flows rather than how the model’s default flow patterns suggest writing should flow.

The operator adjusts tone where the content calls for stronger or softer treatment than the default even tone provides. Some sections need to be sharper. Some sections need to be more conversational. The default model output produces uniform tone regardless of the content needs, and the calibration restores the tonal variation that mature writing requires.

The technique that makes calibration efficient is reading the draft aloud. Sections that read as the operator’s actual voice can stand. Sections that read as generic AI output need rewriting. The aloud reading catches the cadence problems that silent reading misses, because the rhythmic patterns become audible in ways they are not visible on the page.

The other technique is comparing the draft to a sample of the operator’s actual writing on a similar topic. The comparison surfaces the specific patterns that diverge between the operator’s voice and the model’s default. The patterns identified in the comparison tell the operator what needs to change in the draft, and the changes accumulate into a calibration discipline that scales across multiple articles.

The honest read on voice calibration is that it takes substantial time the first few articles an operator calibrates, and the time decreases as the operator builds the muscle for the work. Operators who put the time in early build a calibration discipline that scales. Operators who skip the work produce content that signals AI fingerprint to anyone who reads more than two articles from the site.

Adding First-Hand Experience Markers

First-hand experience markers are the dimension models cannot produce because models do not have first-hand experience with the topics they write about. The markers are exactly the dimension the helpful content framework evaluates as Experience, and content that lacks them fails the framework regardless of how substantive the topic coverage is at the surface level.

The categories of markers operators add to AI drafts break across several patterns. The first category is photographs and visual evidence. A product review benefits from photographs of the product being used. A how-to guide benefits from images of the process in progress. A case study benefits from screenshots of the actual data. The visual evidence cannot be fabricated by AI because the operator has the photographs and the model does not, and the presence of authentic visual evidence is a strong signal of first-hand contact with the topic.

The second category is anecdotes that include details only someone present would include. The vehicle the operator was driving when they noticed the pattern. The time of day when the install went sideways. The specific phrase a coworker said that crystallized the insight. These details cannot be generated by models because the details are not on the public record. They exist only in the operator’s memory of actual events, and their presence in the writing signals to readers and to algorithmic detection that the writing emerged from genuine experience.

The third category is hedging that reflects the operator’s actual confidence level rather than the confidence the writing position implies. AI drafts default to confident phrasing across all claims because the model treats every claim as similarly credible. Operators have different confidence levels on different claims, and authentic hedging surfaces those differences. “I’m pretty sure this is right but I have not tested it on the newer firmware” reads differently from confident assertion, and the difference is the marker of someone whose knowledge is calibrated against actual experience.

The fourth category is engagement with edge cases the model would not have surfaced. Models cover topics at the surface level the model’s training data emphasizes, which means the model misses the unusual situations that operators with field experience encounter. The edge cases the operator adds in the editorial layer are often the most valuable content in the article because they are the parts readers cannot find in the generic coverage that fills the SERP.

The fifth category is changed positions with specifics. Operators who have spent years working with a topic have held positions that they later revised based on new evidence or experience. The revision history is a marker of genuine engagement with the topic over time, and AI drafts cannot generate revision history because the model has no positions to revise. Adding “I used to think X, then I saw Y, now I think Z” surfaces the kind of intellectual history that mature expertise produces.

The sixth category is self-implication when the topic permits. If the topic involves a systemic problem, including a moment where the operator was part of the problem rather than always being on the right side of it produces credibility that hagiographic positioning cannot. The self-implication has to be guilt-framed (behavior-focused, names the act, moves forward) rather than shame-framed (self-focused, lingers). Shame reads as impression management. Guilt reads as accountability.

The implementation pattern is to identify sections of the draft where experience markers would naturally fit and add them in the editorial layer. The markers should distribute across the article rather than concentrating in one section, and the markers should match the actual substance of the operator’s experience rather than being fabricated to satisfy the requirement. Fabricated experience markers fail worse than missing markers because they introduce a specific kind of inauthenticity that detection tools and attentive readers both catch.

Structural Editing

Structural editing is the dimension that addresses how the article is organized rather than what the article says. Models produce drafts that follow predictable structural patterns, and the patterns are detectable at the site level when many articles exhibit the same structure. The structural editing breaks the patterns and produces articles that read as deliberately organized rather than as templated output.

The first structural pattern that needs editing is the generic opening framing. Models default to opening framings like “In today’s world” or “When it comes to” or “Many people wonder about” that signal generic content from the first sentence. The editorial fix is to replace generic openings with specific ones that establish the article’s actual angle from the start. The replacement is usually a cut and rewrite rather than a tweak, because the generic opening typically does not contain anything worth keeping.

The second structural pattern is the listicle-when-prose-would-serve-better tendency. Models default to bulleted lists for content that would read better as prose, particularly when the content has logical connections between items that bullets break. The editorial fix is to convert lists into prose in the sections where prose serves better, while keeping lists in the sections where the content is genuinely enumerative.

The third structural pattern is the conclusion that summarizes what was already said rather than offering synthesis. Models default to summary conclusions because summary is a structural pattern the training data shows frequently. The editorial fix is to either rewrite the conclusion to offer synthesis or to cut the conclusion entirely and end on the article’s last substantive point. Endings that summarize add no value beyond the work of reading them.

The fourth structural pattern is the section length uniformity. Models produce sections of roughly equal length because the training data biases toward balanced structure. The editorial fix is to vary section length to match what each section actually requires. Some sections need two paragraphs. Some sections need eight. The variation reflects investment rather than balance, and the variation is itself a signal that the article was deliberately organized rather than templated.

The fifth structural pattern is the smooth transition between every section. Models default to transitions that connect every section logically and explicitly. The editorial fix is to allow some transitions to be abrupt, associative, or connected through experience rather than logic. Mature writing transitions associatively at least some of the time, and consistent logical flow is one of the patterns detection tools use to identify model output.

The sixth structural pattern is the predictable paragraph architecture within sections. Models default to [statement, explanation, personal note] or similar predictable structures that repeat across sections. The editorial fix is to vary the paragraph architecture, including sometimes opening with anecdote, sometimes ending on technical point, sometimes dropping experience mid-explanation. The variation is what mature writing actually does, and the predictable architecture is one of the patterns detection tools and human readers both notice.

The implementation pattern for structural editing is to read the draft as a critic and identify which structural patterns it exhibits. Then make targeted changes that break the patterns while preserving the substantive content. The changes are usually substantial. Operators who treat structural editing as light revision miss the depth of the work that the dimension actually requires.

Workflow Integration at Scale

The editorial layer integrates into the broader content workflow as the stage that follows drafting and precedes publishing. The integration matters operationally because the layer cannot be skipped without consequences and cannot be applied superficially without producing the same consequences as skipping it. The workflow integration is what makes the layer sustainable across multiple articles.

The first integration pattern is to treat the editorial layer as a non-negotiable stage in the publishing workflow. Articles do not move from drafted to published without passing through the editorial stage. The stage gate prevents the temptation to skip the layer when time pressure is high or when the draft seems good enough on first read. The stage gate is what scales discipline across multiple articles.

The second integration pattern is to allocate appropriate time for the layer. Operators who allocate fifteen minutes for editorial review on a three-thousand-word article have allocated insufficient time. The fact-checking alone usually takes longer than fifteen minutes for a substantive long-form piece. The realistic time allocation is closer to the time that would be required to write the article from scratch without AI assistance, possibly slightly less for operators who have built calibration muscle.

The third integration pattern is to maintain a running checklist of editorial layer dimensions to ensure none get skipped. The checklist covers fact-checking by claim category, voice calibration by reading aloud and comparison technique, experience marker integration by category, and structural editing by pattern. Operators who use the checklist apply the layer consistently. Operators who rely on memory skip dimensions when fatigue or time pressure increases, which is exactly when consistency matters most.

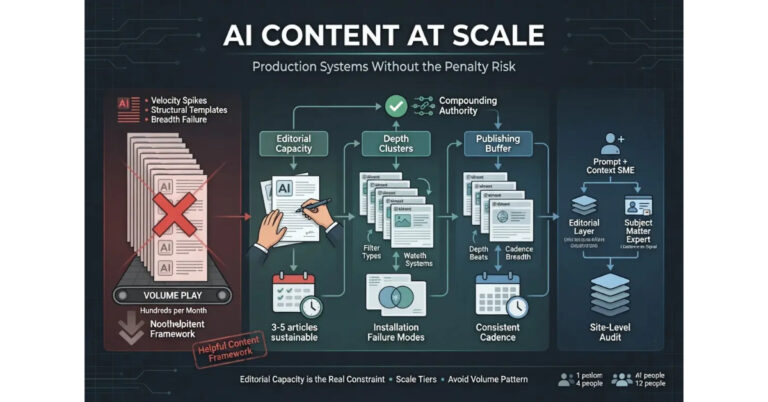

The fourth integration pattern is to scale editorial capacity alongside drafting capacity. Teams that scale AI drafting without scaling editorial capacity end up with content that exhibits AI fingerprints across the site, and the fingerprints aggregate into a site-wide signal that triggers suppression. The scaling is constrained by editorial capacity, and operators who scale AI drafting beyond their editorial capacity have built a system that produces failure mode content at higher volume.

The fifth integration pattern is the periodic site-level audit of published content. The audit samples articles across the site and reads them as a reader would, identifying which editorial layer dimensions are present in the aggregate signal and which are weak or missing. The audit catches calibration drift that develops over time and surfaces patterns that compound across multiple articles. The audit is part of the broader content maintenance work that healthy operations incorporate into their ongoing process.

For the deeper operational treatment of how the editorial layer scales without sliding into the volume play that fails the framework, the tier article on AI Content at Scale covers the full guide.

Common Editorial Layer Mistakes

The most common mistakes operators make with the editorial layer are predictable. They show up across new operators learning the work, established operators who have grown careless about the discipline, and team operations that have not built workflow integration around the layer.

The first common mistake is the surface read. Operators read the draft once, do light copyediting, and publish. The surface read catches obvious typos and grammar issues but misses the dimensions the editorial layer is designed to address. The fix is to apply the four-dimension framework deliberately rather than relying on a single read-through to surface issues.

The second common mistake is the fact-checking skip. Operators trust the model’s confidence as a proxy for accuracy and publish unverified claims. The skip is particularly common on articles where the operator has expertise in the topic and assumes the model will produce accurate claims because the operator could have produced accurate claims. The assumption is wrong. The fix is to verify every specific claim regardless of how confident the writing sounds.

The third common mistake is the cosmetic voice edit. Operators recognize that the draft sounds AI-produced and make light surface edits without addressing the deeper rhythm and structure patterns that produce the AI fingerprint. The edits change individual words without changing the underlying patterns, which leaves the fingerprint in place while creating the illusion that calibration happened. The fix is to apply the depth voice calibration covered earlier in this article.

The fourth common mistake is the missing experience markers. Operators publish articles that lack first-hand experience markers because adding them takes effort and the operator did not allocate time for the addition. The articles read as competent third-person knowledge of the topic and signal that the writing did not emerge from genuine experience. The fix is the deliberate addition of markers in the editorial layer rather than treating them as optional.

The fifth common mistake is the structural neglect. Operators publish articles with the model’s default structural patterns intact because addressing structure requires substantial rewriting. The published articles exhibit the structural homogeneity that aggregates into a site-wide signal of templated production. The fix is the deliberate structural editing covered earlier in this article.

The sixth common mistake is the time-pressure skip. Operators apply the editorial layer consistently when time allows and skip it under pressure. The inconsistency means the site exhibits articles with varying levels of editorial polish, and the variance itself is a signal that production is uneven. The fix is to treat the layer as non-negotiable rather than as variable based on available time, which usually means scaling drafting volume down to match editorial capacity rather than scaling editorial down to match drafting volume.

The seventh common mistake is the assumption that the next article will fix the pattern. Operators publish a substandard article assuming they will apply the editorial layer more carefully on the next one. The published article remains in the content base and contributes to site-wide signals indefinitely. The fix is to treat each published article as permanently part of the site’s signal profile rather than as a temporary state that can be improved later.

Verdict

The editorial layer is the operational discipline applied to AI drafts to turn them into publishable content. The layer covers four dimensions: fact-checking, voice calibration, structural editing, and the addition of first-hand experience markers. All four dimensions are necessary. Skipping any of them produces predictable degradation in the published output.

Fact-checking covers five categories of claims that AI drafts confidently generate at confidence levels uncorrelated with accuracy. Citations, statistics, attributions, dates, and current state claims all require verification before publishing. The discipline takes time, and the time is non-negotiable for content that needs to maintain Trust signal over an extended publishing horizon.

Voice calibration breaks the default model patterns that mark AI output. The patterns include cadence uniformity, transition phrase repetition, alternating sentence structure, uniform tone, and metronomic rhythm. The calibration produces variation that matches how the operator actually writes rather than how the model defaults. The technique that makes calibration efficient is reading drafts aloud and comparing to samples of the operator’s actual writing.

Experience markers are the dimension models cannot produce because models do not have first-hand experience. Photographs, anecdotes with details only someone present would include, calibrated hedging, edge case engagement, changed positions with specifics, and self-implication when the topic permits all need to be added in the editorial layer. The markers cannot be fabricated; they have to come from the operator’s actual contact with the subject.

Structural editing addresses how articles are organized. Generic opening framings, listicle-when-prose-would-serve patterns, summary conclusions, section length uniformity, smooth transitions everywhere, and predictable paragraph architecture all need targeted edits to break the templated output that models produce by default.

The workflow integration is what makes the layer sustainable across multiple articles. The layer functions as a non-negotiable stage gate, with appropriate time allocation, a running checklist, editorial capacity scaled alongside drafting capacity, and periodic site-level audits to catch drift. Operators who integrate the layer into their workflow scale sustainably. Operators who treat the layer as variable based on available time produce inconsistent content that signals uneven production.

For the broader framework that ties this layer together with the rest of the AI content creation discipline, the Pillar guide covers the full system. The sibling article on How to Use AI to Write SEO Content covers the workflow that produces drafts the editorial layer can work with. The article on AI Content vs Human Content covers the framework that explains why the editorial layer is what determines outcomes regardless of production method. The article on AI Content at Scale covers the production architecture that lets the editorial layer scale across larger operations without sliding into the volume play that fails the framework.

Frequently Asked Questions

What is the AI content editorial layer?

The editorial layer is the operational discipline applied to AI drafts to turn them into publishable content. It covers four dimensions: fact-checking, voice calibration, structural editing, and the addition of first-hand experience markers. The layer is what separates AI content that ranks from AI content that gets suppressed by the helpful content framework.

How long does the editorial layer take?

The realistic time allocation is closer to the time that would be required to write the article from scratch without AI assistance, possibly slightly less for operators who have built calibration muscle. Operators who allocate fifteen minutes for editorial review on a substantive long-form piece have allocated insufficient time. Fact-checking alone usually exceeds that.

What does fact-checking AI drafts involve?

Verification of five categories of claims: citations (do the cited sources exist and contain the cited claims), statistics (are the specific numbers accurate), attributions (did the named people actually say or hold the attributed positions), dates (are the specific dates correct), and current state claims (is the information about present circumstances still accurate given the model’s knowledge cutoff).

How do I calibrate AI voice to my own writing?

Read drafts aloud to catch the cadence problems silent reading misses. Compare drafts to samples of your actual writing on similar topics to identify divergent patterns. Vary paragraph lengths, swap default model transitions for your specific phrases, restructure sentences to break alternating short-long patterns, adjust tone to match content needs, and break the metronomic rhythm that marks default model voice.

What are first-hand experience markers?

Markers that signal genuine contact with the topic. Photographs of products being used, anecdotes with details only someone present would include, calibrated hedging that matches actual confidence levels, edge case engagement, changed positions with specifics about what triggered the change, and self-implication when the topic permits. The markers cannot be fabricated and have to come from the operator’s actual experience.

Can the editorial layer be skipped on simple articles?

The layer can be applied with less depth on shorter or simpler content, but the core dimensions cannot be skipped entirely. Even short articles need fact-checking, voice that does not signal AI fingerprint, and at least minimal experience marker presence. The depth scales with article length, but the presence of all four dimensions is required.

What happens if I skip the editorial layer?

The published content exhibits AI fingerprint patterns, accumulates inaccuracy from unverified claims, lacks the experience markers that the helpful content framework evaluates, and contributes to site-wide signals that trigger algorithmic suppression. The consequences aggregate over time as more unedited articles get published, and recovery requires either substantial editorial work on existing content or rebuilding the affected sections of the site.

Should AI editorial work be done by the same person who writes the prompt?

The same person bringing both pieces produces the most consistent results because the operator’s perspective stays consistent across the workflow. Teams can separate the roles when editorial capacity is the constraint, but the editor needs access to the operator’s actual perspective and experience for the layer to add the markers and calibration the layer requires.