AI Content vs Human Content: What Google Actually Cares About

AI Summary

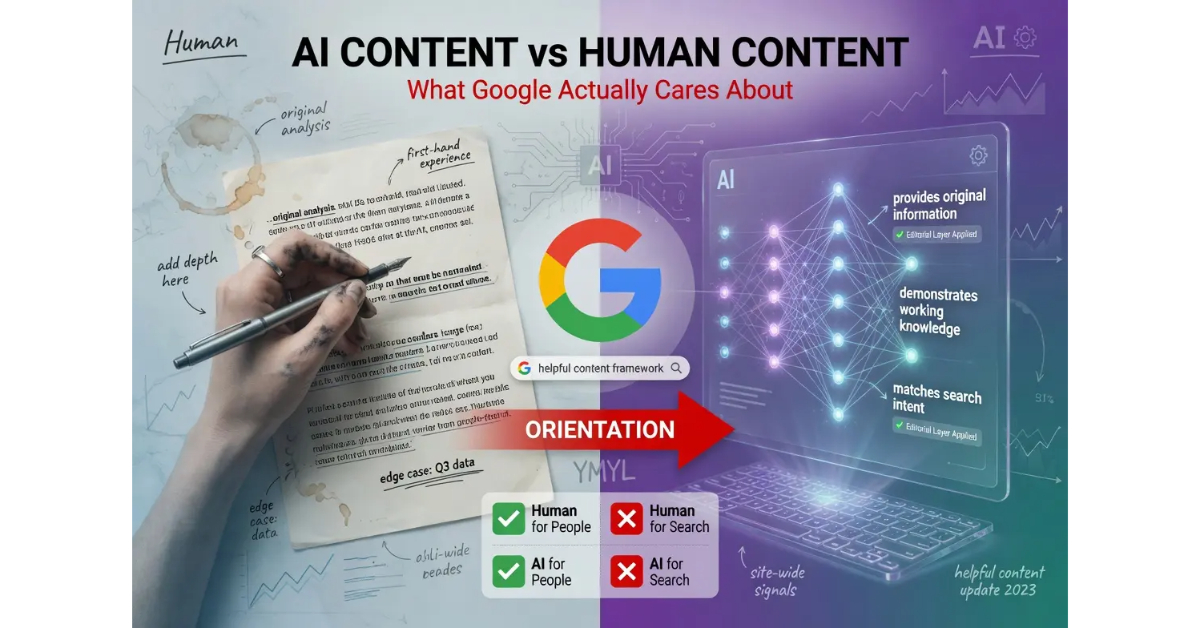

What does Google actually care about with AI content? Google does not evaluate content based on whether it was produced by AI or by humans. Google evaluates whether the content was created primarily for people or primarily for search engines, and the production method is irrelevant to that evaluation except insofar as it affects the underlying orientation.

What it is and who it is for: The AI versus human framing matters because the framing operators use shapes the configuration they produce. Operators who think the question is “did AI write it” optimize for hiding AI involvement. Operators who think the question is “does it serve readers” optimize for the dimension Google actually evaluates.

The rule: The AI versus human binary is a category error. The actual dimension is the orientation of the production process, which produces helpful content or unhelpful content regardless of who or what did the writing.

Table of Contents

Google’s Actual Position on AI Content

Google’s position on AI content has been remarkably consistent since the question first emerged in 2022, and the consistency has been ignored by most of the SEO industry because the consistent position is not as entertaining as the speculation. The position lives in Google’s February 2023 guidance and has been reinforced in every helpful content update since.

The guidance states directly that the appropriate use of AI for content production is not against Google’s guidelines, that automation has long been used to generate helpful content like sports scores and weather forecasts, and that the company’s focus has always been on rewarding high-quality content regardless of how it was produced. The framing is unambiguous. The production method is not the dimension being evaluated.

The dimension that is being evaluated is whether the content was created to help people or whether it was created primarily to manipulate search rankings. This is the same dimension the helpful content framework has evaluated since the framework was introduced in 2022, before the AI question became as prominent as it is now. The framework predates the AI debate and applies to AI without modification.

The shorthand version: Google does not penalize AI content for being AI-produced. Google penalizes content that was produced for the wrong reason, and AI-produced content fails that test more often than human-produced content because the production model rewards volume over substance unless operators build discipline against that pull.

For the broader context on how this position fits into AI content creation strategy, the Pillar guide on AI Content Creation covers the full framework. The sibling articles on How to Use AI to Write SEO Content, the AI Content Editorial Layer, and AI Content at Scale cover the other dimensions.

Why the AI vs Human Frame Is Wrong

The AI versus human framing is a category error because it asks the wrong question. The framing assumes the relevant variable is the species of the producer, when the relevant variable is the orientation of the production process. The two dimensions can vary independently, which means asking whether content is AI or human is asking the wrong question regardless of which answer it returns.

Consider the four configurations the actual variables produce. Configuration one is human content created for people. The configuration that has produced ranking content for as long as ranking has existed. Configuration two is human content created for search engines. Thin affiliate sites, exact-match domain pages, keyword-stuffed listicles, and the entire cottage industry of human-produced content that exists to rank rather than to serve readers. Configuration three is AI content created for people. AI-assisted drafts that operators bring substantive perspective to and apply the editorial layer to. Configuration four is AI content created for search engines. The volume play, mass-produced thin coverage, content that exists because AI made volume cheap.

The AI versus human framing collapses these four configurations into two, which loses the dimension that actually predicts ranking outcomes. Configurations one and three perform comparably because both are oriented toward serving readers. Configurations two and four perform comparably because both are oriented toward manipulating rankings. The orientation matters. The production method does not, except insofar as production method tends to correlate with orientation in ways that are visible in the data.

The reason production method correlates with orientation is straightforward. AI lowers the cost of producing volume, which makes the volume play more attractive economically. The lowered cost does not make the volume play work better against the helpful content framework, but it does make more operators try it. The result is that AI content as a population skews toward the volume play more than human content does, which produces correlation in the empirical data without producing causation in the framework.

Operators who understand the actual variables can produce AI content in configuration three, where the orientation is correct and the production method is irrelevant. Operators who think the variable is the production method optimize for hiding AI involvement, which addresses a dimension that is not actually being evaluated. The optimization is wasted effort. The orientation is what needs the operator’s attention.

The Helpful Content Framework

The helpful content framework is the operational definition of orientation that Google’s systems use to evaluate content. The framework asks a series of questions about the content and the site, and the answers determine whether the content registers as helpful or as something produced primarily for search engines. The questions break across several categories.

The first category is about the audience. Does the content provide original information or analysis. Does it provide substantial value compared to other pages in search results. Does it offer insight beyond the obvious. Does it make a point or take a position rather than aggregating other sources without adding to them. Sites that produce thin coverage of topics other sources cover better fail this category regardless of production method.

The second category is about expertise. Is the content produced by someone who actually has knowledge about the topic. Does the writing demonstrate working knowledge through engagement with edge cases, depth of explanation, and surfacing of details that only someone with experience would surface. Sites that produce surface-level coverage of topics they do not actually understand fail this category regardless of production method.

The third category is about user experience. Does the content match the search intent. Does it deliver what the page promises. Does the page lead readers to a satisfactory experience or does it leave them looking for a better source. Sites that produce content optimized for arrival from search rather than for user satisfaction fail this category regardless of production method.

The fourth category is about trustworthiness. Does the site provide clear authorship. Does the content cite reliable sources. Does the broader site infrastructure include the transparency, accuracy, and security signals that mark legitimate publishers. Sites that hide authorship, cite unreliable sources, or operate with thin transparency infrastructure fail this category regardless of production method.

The framework operates at the page level and aggregates at the site level. A site that consistently produces helpful content across its publishing develops site-wide signals that benefit even individual pages that may not perfectly match every dimension. A site that consistently produces unhelpful content develops site-wide signals that suppress even individual pages that may be substantively better than the aggregate. The aggregate signal is part of why the framework operates the way it does.

What the Empirical Data Shows

The empirical data on AI content performance is messier than the AI versus human framing suggests, and the mess is informative once it is parsed correctly. Operators sometimes cite data showing AI content underperforms, and the data is real, but the explanation is not what the surface presentation suggests.

The first finding in the data is that AI content underperforms on average. This is true. The average article in the AI content population performs worse than the average article in the human content population, and the gap is substantial across most measurement frames.

The second finding is that the underperformance is concentrated at the volume end of the AI content population. AI content produced at high volume per operator, on broad topical surfaces, with thin editorial layers, performs much worse than the AI content average. The concentration explains most of the aggregate underperformance.

The third finding is that AI content produced with editorial discipline performs comparably to human content produced with the same discipline. When the studies control for orientation rather than production method, the gap between AI and human content closes substantially or disappears entirely. This is the finding that maps onto the framework Google’s systems actually use.

The fourth finding is that human content also underperforms when produced without orientation discipline. Thin affiliate sites with human-produced content perform comparably to thin affiliate sites with AI-produced content. The dimension that distinguishes performers from non-performers is the orientation, which is the dimension the framework was designed to evaluate.

The implication for operators is that the data does not support the conclusion the AI versus human framing suggests. The data supports the conclusion that orientation matters and production method does not, which is exactly what Google’s published guidance has been saying since 2023. The empirical findings and the official guidance are aligned. The misreading of the data comes from operators who want the AI versus human binary to be the answer because the binary is easier to act on than the orientation question.

When Human Content Fails the Framework

Human content is not automatically helpful content. The assumption that human production produces ranking outcomes is empirically wrong, and naming the failure modes directly clarifies why production method is downstream of orientation.

The first pattern is the thin affiliate site. Human writers produce reviews, comparisons, and recommendations on products they have not actually tested, drawing from manufacturer marketing copy and competitor reviews to assemble content that exists primarily to rank for commercial intent queries. The content reads as human-produced because it is, but the orientation is wrong, and the framework treats it accordingly.

The second pattern is the SEO-first content shop. Agencies produce articles for clients on tight margins, with writers paid per piece and incentivized to produce volume rather than depth. The articles read as human-produced and follow the structural patterns the agency has determined produces rankings, but the orientation is the search engine rather than the reader, and the framework treats them accordingly.

The third pattern is the keyword-stuffed listicle. Human writers produce articles structured around target keywords, with subheadings, anchor links, and surrounding text optimized for ranking signals rather than for reader value. The pattern produces content that ranks for short periods before the framework catches up, and the framework catches up because the orientation is detectable regardless of who produced the writing.

The fourth pattern is the rewritten aggregation. Human writers take content from multiple sources and rewrite it to avoid duplicate content detection, producing articles that exist to capture search intent without adding original insight. The rewriting is human work, but the orientation is to extract value from the work others have done rather than to contribute new value, and the framework treats it accordingly.

The fifth pattern is the credentialed-but-empty bylines. Sites with named human authors who have credentials in the topic area but who produce content that does not actually demonstrate the knowledge the credentials suggest. The credential signal is real, but the content does not deliver on the signal’s promise, and the framework increasingly catches the gap between credentialed bylines and substantive content.

The pattern across all five is that human production does not save content from failing the framework when the orientation is wrong. The framework evaluates the orientation, and orientation failures appear regardless of who produced the writing.

When AI Content Succeeds at the Framework

The reverse case is also worth treating directly. AI content can and does succeed at the helpful content framework when the orientation is correct, and the patterns that produce success are predictable.

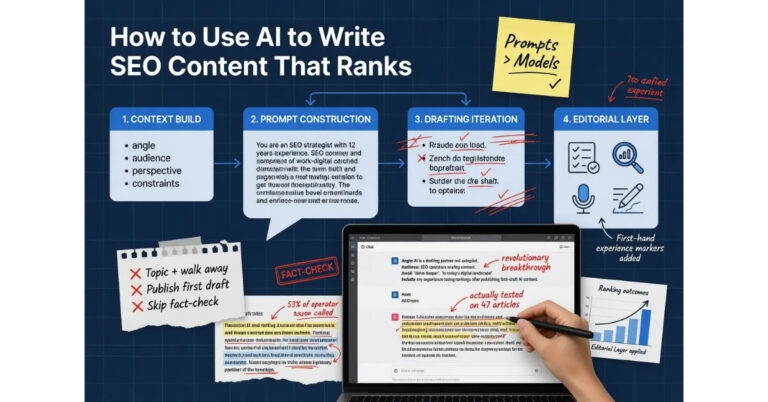

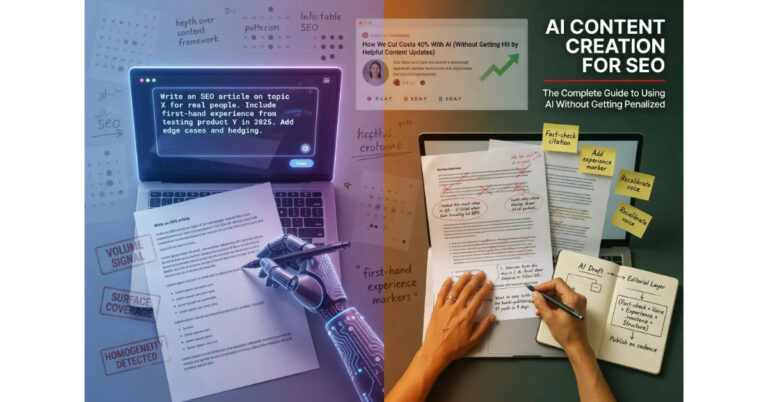

The first pattern is AI as drafting partner inside operator-led production. The operator brings substantive perspective, expertise, and editorial judgment. The AI produces drafts that the operator shapes into content that reflects the operator’s actual position. The output is substantively the operator’s work, with AI handling the drafting workload that the operator would otherwise carry. The orientation is correct because the operator drives the orientation, and the AI involvement is invisible to the framework because the framework does not evaluate production method.

The second pattern is AI for substantive long-form on focused topical clusters. Operators who use AI to produce deep treatment of specific subjects, with the editorial layer adding the operator’s experience markers and substantive depth, produce content that builds topic authority over time. The compounding effect of focused depth produces site-wide signals that benefit all the related content, including content the operator produces without AI assistance.

The third pattern is AI for topics where the operator has genuine expertise. Operators who use AI to draft content on subjects they actually know produce drafts that the operator can immediately recognize as accurate or inaccurate, voice-correct or voice-divergent. The expertise the operator brings to the editorial layer is what turns AI drafts into substantive content, and the expertise is the variable that makes the configuration work.

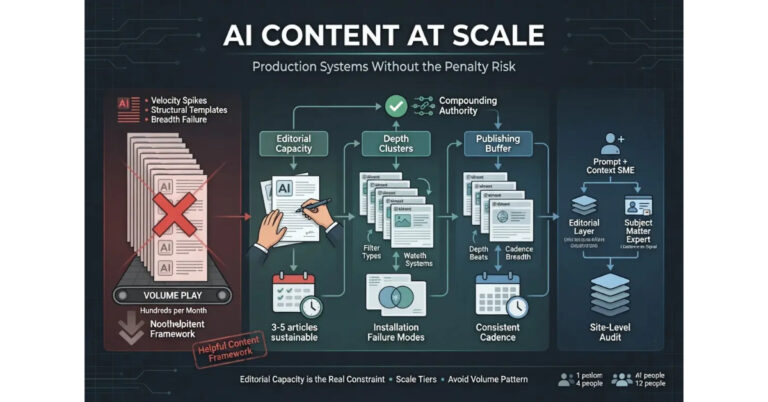

The fourth pattern is AI for production support inside teams with editorial capacity. Larger operations that use AI to scale draft production while maintaining editorial capacity at the human level produce content at higher throughput than pure-human production while maintaining the orientation the framework rewards. The editorial capacity is the constraint, and operations that scale editorial capacity alongside AI drafting produce sustainable scale.

The fifth pattern is AI for repetitive content categories where substantive variation is limited. Product descriptions, listing pages, certain types of news summaries, and similar content categories where the substance is largely structural can use AI more aggressively because the editorial layer requirements are lower. The framework still applies, but the bar for substantive depth on these categories is lower than on long-form analytical content.

The pattern across all five is that AI involvement is compatible with the orientation the framework evaluates. The orientation is what determines outcomes, and AI sits inside the production process without changing the orientation question.

The Detection Question Most Operators Ask

The question operators most often ask about AI content is whether Google can detect it. The question is asked frequently enough to deserve a direct answer, even though the answer matters less than the operators asking the question often realize.

The honest answer is that Google’s systems can identify patterns associated with mass-produced AI content, particularly when those patterns aggregate at the site level. The detection is not focused on individual articles in isolation. The systems look at the aggregate signals across a site, including publishing velocity, structural homogeneity, voice consistency, surface coverage versus depth, presence or absence of first-hand experience markers, and the overall fit between the site’s content and the audience the content claims to serve.

The patterns that mark mass-produced AI content are detectable because they are statistically distinct from how editorial operations actually work. Mature publishing sites do not produce hundreds of articles in compressed time windows. Mature publishing sites do not exhibit identical structural patterns across all their articles. Mature publishing sites do not consistently lack first-hand experience markers across their entire content base. The presence of these patterns at the site level is the signal the systems use, not the AI involvement on individual pieces.

The implication is that the detection question is the wrong question. The question that matters is whether the site exhibits the patterns that mark mass-produced AI content, regardless of whether AI was actually used. A site that produces volume, exhibits structural homogeneity, lacks experience markers, and operates at the surface coverage level fails the framework whether or not AI did the writing. A site that operates with editorial discipline, varied structure, experience markers, and substantive depth passes the framework whether or not AI assisted the production.

The detection question reveals what operators are actually asking. Operators who ask whether AI is detectable are usually asking whether they can take shortcuts that the framework specifically targets. The honest answer to that question is that the shortcuts are detectable because the shortcut patterns are detectable, not because AI involvement is detectable. The shortcuts are what the framework evaluates, and the shortcuts produce signals at the site level that the systems identify regardless of production method.

The operators who do not need to worry about detection are the ones who are not taking the shortcuts. The framework is not trying to catch AI involvement. The framework is trying to catch the orientation that produces unhelpful content, and operators who have the orientation right are not in the framework’s target set regardless of how they produce their content.

Verdict

The AI content versus human content debate gets the frame wrong. Google does not evaluate content based on the species of the producer. Google evaluates whether the content was created primarily for people or primarily for search engines, and the production method is irrelevant to that evaluation except insofar as it correlates with orientation in the empirical data.

The four configurations the actual variables produce are human-for-people, human-for-search, AI-for-people, and AI-for-search. The first and third perform comparably because both have correct orientation. The second and fourth perform comparably because both have wrong orientation. Operators who understand this can produce content in either of the two performant configurations. Operators who think the question is AI versus human optimize for the wrong dimension.

The empirical data on AI content underperformance is real but misread. The underperformance concentrates at the volume end of the AI population, where orientation is wrong rather than where production method is the issue. When studies control for orientation, the AI versus human gap closes or disappears, which is what Google’s published guidance has been saying since 2023. The data and the guidance agree.

The detection question reveals what operators are actually asking. The framework is not trying to catch AI involvement; it is trying to catch the orientation that produces unhelpful content. Operators who have orientation right are not in the framework’s target set regardless of production method.

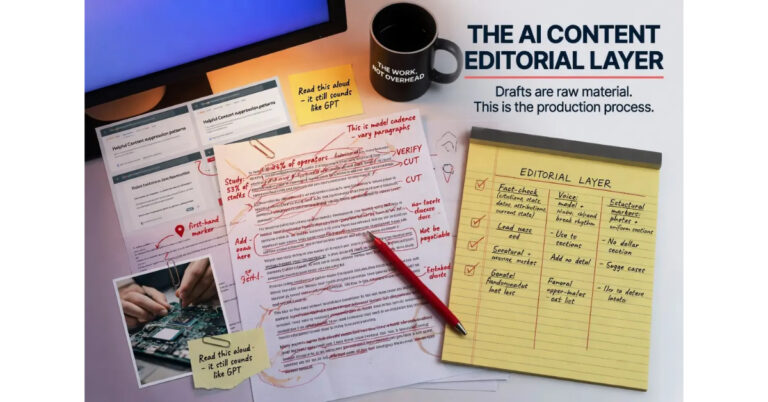

For the broader framework that ties this analysis together with the rest of the AI content creation discipline, the Pillar guide covers the full system. The sibling article on How to Use AI to Write SEO Content covers the workflow that produces ranking content. The article on the AI Content Editorial Layer covers the operational discipline that separates the performant configurations from the non-performant ones. The article on AI Content at Scale covers production systems that scale without sliding into the volume play that fails the framework.

Frequently Asked Questions

Does Google rank AI content lower than human content?

No. Google evaluates content based on whether it was created primarily for people or primarily for search engines, not based on production method. AI content with correct orientation performs comparably to human content with the same orientation. AI content with wrong orientation performs comparably to human content with wrong orientation. The orientation is what matters.

Why does AI content seem to underperform on average?

AI content underperforms on average because the AI content population skews toward the volume play that the helpful content framework specifically targets. The lowered cost of AI production has made the volume play more attractive economically, which produces correlation between AI involvement and wrong orientation in the empirical data without producing causation in the framework itself.

Can human-written content fail the helpful content framework?

Yes. Thin affiliate sites, SEO-first content shops, keyword-stuffed listicles, rewritten aggregation, and credentialed-but-empty bylines all fail the framework regardless of human authorship. The framework evaluates orientation, and orientation failures appear regardless of production method.

Does Google detect AI content?

Google’s systems identify patterns associated with mass-produced AI content, particularly at the site level. The detection focuses on aggregate signals like publishing velocity, structural homogeneity, voice consistency, surface coverage, and missing experience markers. Individual AI articles inside disciplined editorial workflows do not typically register as problematic on these signals.

What is the helpful content framework?

The helpful content framework is Google’s evaluation system that asks whether content provides original value, demonstrates expertise, matches search intent, and operates inside trustworthy site infrastructure. The framework applies to all content regardless of production method and evaluates orientation rather than authorship.

Should I disclose AI involvement in my content?

Google does not require disclosure of AI involvement. Other contexts may have separate disclosure requirements for other reasons, but those requirements are not driven by Google’s framework. The decision to disclose is independent of the question of whether the content will rank, which depends on orientation rather than disclosure.

Can AI content rank in YMYL niches?

YMYL niches require elevated Trust signals across all dimensions, and the bar for substantive depth and demonstrated expertise is higher than in general topics. AI content can rank in YMYL niches when the editorial layer is substantial enough to meet the elevated bar, which typically requires operators with genuine expertise in the topic and editorial workflows that surface that expertise in the published content.

What is the right way to think about AI versus human content?

The question is the wrong frame. The variables that matter are orientation (created for people or for search engines) and substantive quality (demonstrates expertise or surface coverage), not production method. Operators who understand this can produce content in either AI-for-people or human-for-people configurations and achieve comparable ranking outcomes.