How to Use AI to Write SEO Content That Ranks

AI Summary

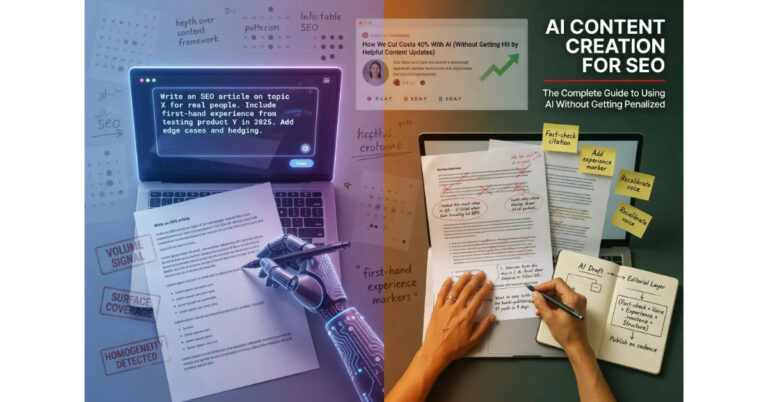

What is the AI workflow for SEO content? The workflow uses AI as a drafting partner inside a disciplined editorial process, with the operator providing substantive context up front and applying the editorial layer that turns drafts into publishable articles.

What it is and who it is for: The workflow matters for any operator using AI to produce content at meaningful volume without triggering the suppression patterns that mark mass-produced AI output. The same workflow applies whether the operator is producing one article per week or scaling to a team-level operation.

The rule: Prompts matter more than models. The biggest performance gap in AI content is not between which model the operator uses but between operators who give the model substantive context and operators who give it a topic and walk away.

Table of Contents

The Workflow at a Glance

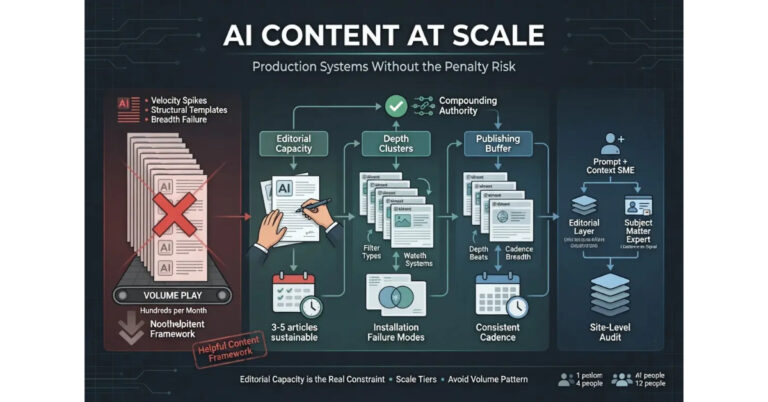

The AI content workflow that produces ranking outcomes is more disciplined than most operators expect when they first start using AI for content production. The discipline is exactly what separates the workflow from the autopilot approach that produces failure mode content. The workflow has four stages, and skipping any of them produces predictable degradation in the output.

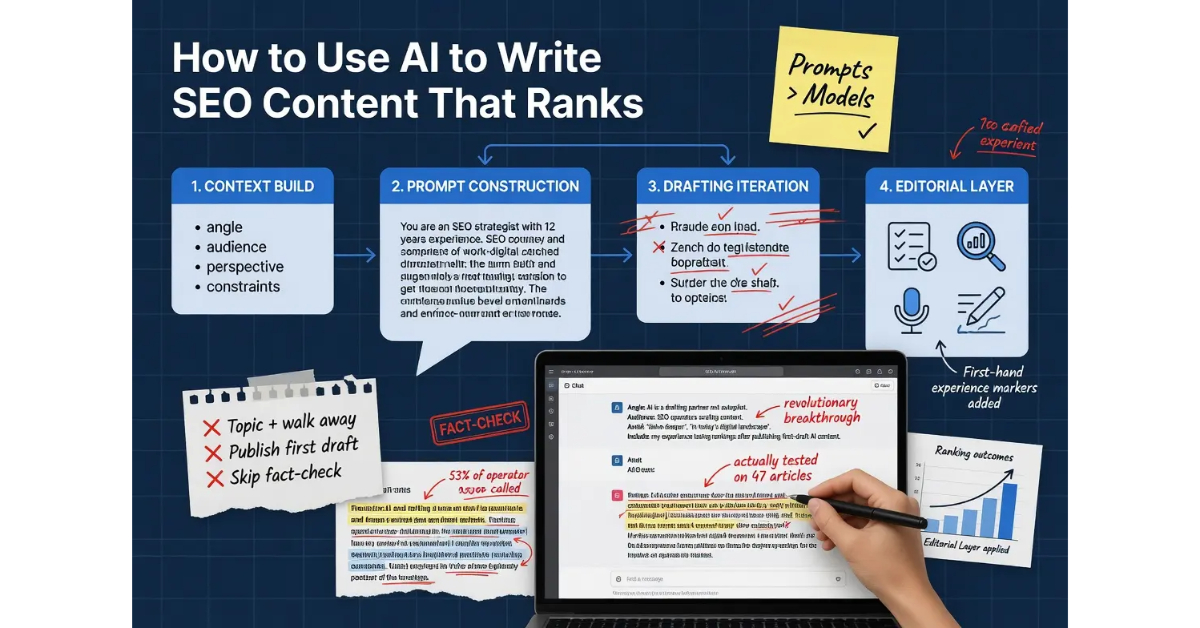

Stage one is the context build. The operator assembles the substantive material the article needs to draw on, including the angle the piece is taking, the audience the content serves, the constraints the writing has to honor, and the operator’s actual perspective on the topic. The context build happens before any prompting, and the depth of the context build determines the ceiling on what the rest of the workflow can produce.

Stage two is the prompt construction. The operator writes the prompt that conveys the context to the model along with the specific request for what the model should produce. The prompt is not a topic. It is a structured brief that gives the model enough information to produce a draft that reflects the operator’s perspective rather than a generic treatment of the topic.

Stage three is the drafting iteration. The model produces a draft. The operator reviews the draft. The operator iterates on sections that did not come out right, either by editing directly or by prompting again with more specific guidance. The iteration is the work most operators skip, and the skip is what produces the AI-fingerprint output that fails to rank.

Stage four is the editorial layer. The operator applies fact-checking, voice calibration, structural editing, and the addition of first-hand experience markers. The editorial layer is covered in detail in the tier article on the AI Content Editorial Layer, and the layer is non-negotiable for content that needs to rank in competitive contexts.

The shorthand version: AI is one tool inside a four-stage workflow. The other three stages are where the work that determines ranking outcomes actually happens.

For the broader context on how this workflow fits into AI content creation strategy, the Pillar guide on AI Content Creation covers the full framework. The sibling articles on AI Content vs Human Content, the AI Content Editorial Layer, and AI Content at Scale cover the other dimensions.

Prompt Structure: The Context Layer

The prompt structure is the single most consequential variable in AI content production, and the variable most operators underinvest in. Operators who treat the prompt as a topic statement get topic-treatment output. Operators who treat the prompt as a structured brief get drafts that reflect the substantive context the brief provided.

The structured brief includes several components that work together. The first component is the angle. What is the actual position the piece is taking on the topic. Most articles on most topics take roughly the same position because most writers default to the consensus position, and the result is content that reads interchangeably with every other article in the SERP. A prompt that specifies the angle deliberately produces drafts that take a position the operator can stand behind rather than the default consensus the model would otherwise generate.

The second component is the audience. Who is the article actually for. A piece on link building reads differently when written for SEO operators with five years of experience versus founders evaluating whether to hire an agency. The audience determines the assumed knowledge, the appropriate vocabulary, the depth of explanation that serves the reader, and the structural choices that match how the audience actually reads. Prompts that specify the audience produce drafts calibrated to that audience.

The third component is the operator’s perspective. What does the operator actually think about the topic, including the specific takes that distinguish their view from the generic field. The model cannot know the operator’s perspective unless the operator surfaces it in the prompt, and prompts that include the perspective produce drafts that reflect it. Prompts that omit the perspective produce drafts that reflect the model’s default treatment, which is the consensus view.

The fourth component is the constraints. What patterns should the writing avoid. Specific words the operator never uses. Structural moves that read as generic. Phrasings that mark the writing as AI-produced. Operators who specify the constraints up front produce drafts that already conform to the operator’s voice rather than requiring extensive rewriting in the editorial layer.

The fifth component is the structural request. What does the operator actually want the model to produce. A long-form pillar article. A short tactical piece. A specific section of a larger article that the operator will assemble. The structural request shapes how the model approaches the draft, and unclear requests produce drafts that combine the worst features of multiple structures.

The honest read on prompt structure is that operators who spend ten minutes writing a substantive prompt produce dramatically better drafts than operators who spend thirty seconds typing a topic. The time investment in the prompt pays back across the rest of the workflow because better drafts require less editorial work to bring to publishable.

The Drafting Stage

The drafting stage is where the model produces output based on the prompt, and where most operators make the second consequential mistake of assuming the first draft is the finished product. The first draft is the starting point. Operators who treat it as anything else produce content that reads as AI-produced because it is.

The pattern that works is to read the first draft as a critic rather than as a publisher. The reading identifies sections where the model defaulted to generic framing, sections where the prompt did not give the model enough context to produce substantive output, sections where the model fabricated claims that need verification, and sections where the structure does not match what the article needs to do.

The iteration that follows breaks across two patterns. The first pattern is direct editing. The operator rewrites passages that need different framing, restructures sections that came out wrong, and cuts material that does not serve the article. Direct editing is faster than re-prompting for sections where the operator knows exactly what should be there, and it produces output that reflects the operator’s voice from the start.

The second pattern is targeted re-prompting. The operator returns to the model with specific guidance about what should change, often with the existing section pasted in for reference. Targeted re-prompting works for sections where the operator wants the model to take another pass with new context, particularly for sections that require substantive elaboration the operator does not have time to write directly.

The mistake to avoid in the drafting stage is asking the model to “improve” or “rewrite” an existing draft without specific guidance. The model cannot improve text without knowing what direction the improvement should go, and generic improvement requests produce changes that move the text in arbitrary directions. The improvement might land in the right place, or it might move further from what the article needs. Specific guidance produces specific changes; generic guidance produces unpredictable ones.

Where the Editorial Layer Lives

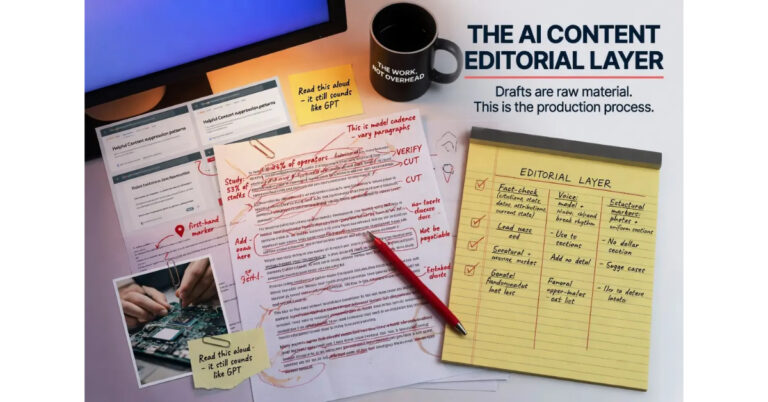

The editorial layer is the discipline that turns drafts into publishable content, and the layer where the operator’s actual judgment determines the article’s quality. The layer has four dimensions that all need to be applied for content that ranks in competitive contexts.

The first dimension is fact-checking, which is covered in detail later in this article. Models confidently generate claims at confidence levels that are uncorrelated with accuracy, and operators who skip fact-checking publish inaccuracy at scale.

The second dimension is voice calibration, which is covered in the next section. Models default to recognizable patterns that mark the output as AI-produced, and the calibration breaks those patterns to match the operator’s actual writing.

The third dimension is structural editing. The operator restructures the draft to match how the operator would have organized the piece if writing without AI assistance. This usually involves cuts of generic introductions, reorganization of sections that came out in suboptimal order, and the addition of structural moves the model would not have generated.

The fourth dimension is the addition of first-hand experience markers. Models cannot produce authentic experience markers because models do not have first-hand experience with the topics they write about. The operator adds these markers from their actual contact with the subject. The markers include photographs of products being tested, anecdotes that include details only someone present would include, hedging that reflects the operator’s actual confidence rather than the confidence the writing position implies, and engagement with edge cases the model would not have surfaced.

The deeper operational treatment of all four dimensions lives in the tier article on the AI Content Editorial Layer. This section covers the layer at the workflow level. The editorial article covers the layer at the operational level.

Voice Calibration

Voice calibration is the dimension of the editorial layer where most AI content fails most visibly, and the dimension operators who do not understand it tend to dismiss as cosmetic. The calibration is not cosmetic. The voice patterns that mark AI output are exactly the patterns Google’s systems and human readers both use to identify content that was produced rather than written.

The default model voice has several recognizable features. The cadence falls into predictable patterns where every paragraph is roughly the same length. The vocabulary leans on a small set of transition phrases that appear repeatedly. The sentence structure alternates between short and long in ways that read smooth on first pass and templated on second. The tone is uniformly even regardless of what the content is about. The rhythm is metronomic where natural writing varies.

The calibration work breaks these patterns. The operator varies paragraph lengths in ways that match how the operator actually writes. The operator swaps default transitions for the specific phrases the operator uses. The operator restructures sentences to break the alternating pattern, including occasional fragments and occasional longer constructions that the model would not have generated. The operator adjusts tone where the content calls for stronger or softer treatment than the default even tone provides. The operator breaks the metronomic rhythm by making deliberate stylistic choices that produce variation.

The way to calibrate voice efficiently is to read the draft aloud. Sections that read as the operator’s actual voice can stand. Sections that read as generic AI output need rewriting. The aloud reading catches the cadence problems that silent reading misses, because the rhythmic patterns become audible in ways they are not visible on the page.

The other technique is to compare the draft to a sample of the operator’s actual writing on a similar topic. The comparison surfaces the specific patterns that diverge between the operator’s voice and the model’s default, and the patterns identified in the comparison tell the operator what needs to change in the draft.

The honest read on voice calibration is that it takes substantial time the first few times an operator does it, and the time decreases as the operator builds the muscle for the work. Operators who put the time in early build a calibration discipline that scales. Operators who skip the work produce content that signals AI fingerprint to anyone who reads more than two articles from the site.

For more on the broader content production discipline that supports voice calibration at scale, the Content discipline covers the operational architecture.

Fact-Checking Discipline

Fact-checking is the dimension of the editorial layer where the consequences of skipping the work are most severe and the work itself is least negotiable. Models confidently generate claims that range from accurate to fabricated, and the confidence in the writing is uncorrelated with the accuracy of the underlying facts. Operators who publish AI drafts without fact-checking accumulate inaccuracy across their content base, and the inaccuracy degrades the site’s Trust signal over time.

The categories of model errors break across several patterns. The first category is the fabricated citation. Models will reference studies, papers, statistics, and authorities that do not exist or that the model has misattributed. The fabrication reads plausible because the model knows what citations should look like, and operators who do not verify accept the citations as real. The fix is to verify every citation against the actual source before publishing. If the source does not exist, the citation gets cut.

The second category is the wrong number. Models generate specific statistics with confidence even when the actual numbers are different from what the model produced. A claim that “fifty-three percent of operators report” something is fabricated if no actual study reported that number. Operators who treat the model’s specific numbers as accurate publish wrong numbers. The fix is to verify every specific statistic against an actual source, or to remove the specificity and replace it with general framing the operator can support.

The third category is the wrong attribution. Models will attribute quotes, frameworks, or positions to people who did not say or hold them. The attribution reads plausible because the model knows the person exists and writes about adjacent topics, but the specific attribution is wrong. The fix is to verify every attribution against the actual source where the attributed material appeared.

The fourth category is the wrong date. Models confidently generate specific dates for events, product releases, policy changes, and developments, and the dates are sometimes wrong. The fix is to verify every specific date against authoritative sources.

The fifth category is the outdated information. Models trained on data with a knowledge cutoff produce confident statements about current state that may have changed since the cutoff. The fix is to verify time-sensitive claims against current sources, particularly for topics like Google’s policies, platform features, and industry developments where the state changes frequently.

The fact-checking workflow that scales is to keep a running list of every specific claim the article makes that requires verification, work through the list before publishing, and either verify the claim or cut it. The discipline is not negotiable for content that needs to maintain Trust signal over time.

What Not to Do

The patterns that produce failure mode AI content are predictable, and naming them directly is the most efficient way to help operators avoid them. The patterns break across several categories that compound when they appear together.

The first pattern is the topic-statement prompt. Operators who type a topic and walk away get topic-treatment output. The fix is the structured prompt covered earlier in this article.

The second pattern is the publish-the-first-draft workflow. Operators who skip iteration and editorial layer publish AI fingerprint at scale. The fix is the four-stage workflow covered throughout this article.

The third pattern is the fact-checking skip. Operators who trust the model’s confidence as a proxy for accuracy publish inaccuracy. The fix is the fact-checking discipline covered in the previous section.

The fourth pattern is the volume optimization. Operators who use AI to maximize article count rather than maximize quality per article produce content patterns that match what the helpful content guidance specifically targets. The fix is the depth approach covered in the tier article on AI Content at Scale.

The fifth pattern is the voice neglect. Operators who publish in the model’s default voice signal AI fingerprint to readers and to algorithmic detection. The fix is the voice calibration discipline covered earlier in this article.

The sixth pattern is the structural homogeneity. Operators who use the same prompt template across many articles produce content that exhibits the same structural patterns across the site. The fix is to vary prompt structure deliberately and to apply structural editing in the editorial layer.

The seventh pattern is the credentialing gap. Operators who publish AI-assisted content under generic byline accounts without surfaced author bios signal that the production layer is not connected to any human expertise. The fix is to surface real authorship for content the operator stands behind.

Verdict

The AI workflow for SEO content has four stages: context build, prompt construction, drafting iteration, and editorial layer. All four stages are necessary, and skipping any of them produces predictable degradation in the output. Operators who treat AI as autopilot get autopilot results. Operators who treat AI as one tool inside a disciplined workflow produce content that performs comparably to human content with the same discipline.

Prompts matter more than models. The biggest performance gap in AI content production is not between which model the operator uses but between operators who give the model substantive context and operators who give it a topic statement. Time invested in the prompt pays back across the rest of the workflow because better drafts require less editorial work.

The editorial layer has four dimensions: fact-checking, voice calibration, structural editing, and the addition of first-hand experience markers. All four dimensions are non-negotiable for content that needs to rank in competitive contexts. The deeper operational treatment of the editorial layer lives in the sibling article that focuses on it specifically.

The failure modes that produce AI fingerprint output are predictable. Topic-statement prompts. Publish-the-first-draft workflows. Fact-checking skips. Volume optimization. Voice neglect. Structural homogeneity. Credentialing gaps. Operators who avoid these patterns produce content that ranks. Operators who exhibit them produce content that signals what it actually is to anyone who reads more than two articles from the site.

For the broader framework that ties this workflow together with the rest of the AI content creation discipline, the Pillar guide covers the full system. The sibling article on AI Content vs Human Content covers what Google actually evaluates and why the AI versus human binary is the wrong frame. The article on the AI Content Editorial Layer covers the operational depth of the editorial discipline. The article on AI Content at Scale covers production systems that work without triggering the suppression patterns this article’s workflow is designed to avoid.

Frequently Asked Questions

What is the best AI model for writing SEO content?

Model choice matters less than prompt structure. The biggest performance gap in AI content is between operators who give the model substantive context and operators who give it a topic statement, not between which specific model the operator uses. Most current frontier models produce drafts of comparable quality when given comparable prompts.

How long should an AI prompt be?

Long enough to convey the angle, the audience, the operator’s perspective, the constraints, and the structural request. For long-form content, prompts typically run several hundred words because the substantive context required to produce a good draft cannot fit in a topic statement. The time investment in the prompt pays back in reduced editorial work later.

Should I edit AI drafts or re-prompt?

Both. Direct editing works for sections where the operator knows exactly what should be there. Targeted re-prompting works for sections where the operator wants the model to take another pass with new context. Generic “improve this” prompts do not work because the model cannot improve text without knowing what direction the improvement should go.

How long does the AI content workflow take?

The workflow takes roughly the same time as writing without AI for operators who do the editorial layer correctly. The drafting time decreases. The editorial time increases proportionally. Operators who skip the editorial layer save time on individual articles and lose the savings to suppression that affects the entire site.

Can AI write a complete article without human editing?

Technically yes, in the sense that AI can produce text that reads like a complete article. The output rarely ranks in competitive contexts because it lacks the substantive depth, voice authenticity, and first-hand experience markers that competitive ranking requires. Pure AI output works for low-competition contexts where the bar is low. It does not work where the bar is meaningful.

How do I avoid AI content sounding like AI?

The voice calibration discipline. Read drafts aloud to catch cadence problems. Compare drafts to samples of the operator’s actual writing to identify divergent patterns. Vary paragraph lengths, swap default transitions, restructure sentences to break alternating patterns, and break the metronomic rhythm that marks default model voice.

How important is fact-checking AI drafts?

Non-negotiable. Models confidently generate fabricated citations, wrong numbers, wrong attributions, wrong dates, and outdated information. The confidence in the writing is uncorrelated with accuracy. Operators who skip fact-checking publish inaccuracy at scale and degrade the site’s Trust signal over time.

Can I use AI for SEO content if I am not an expert in the topic?

The honest answer is that AI does not solve the expertise gap. The operator brings the expertise that gets surfaced in the prompt and added in the editorial layer. Operators without expertise produce content that lacks substantive depth regardless of AI involvement, because the depth comes from the operator rather than from the model. AI is a productivity tool for operators who already have the expertise, not a substitute for the expertise.