How Google Ranks Search Results: Signals, Calculation, and Position

AI Summary

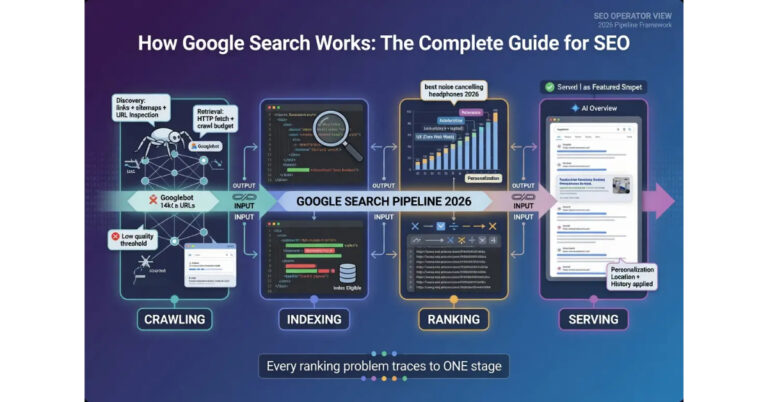

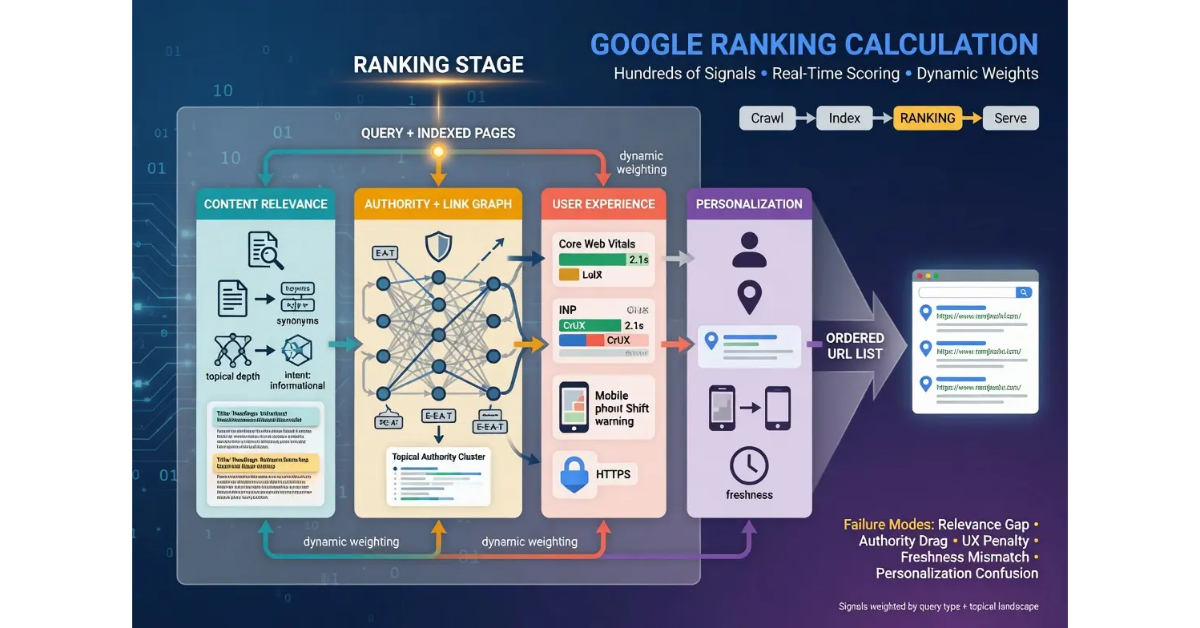

What is the ranking stage? Ranking is the real-time calculation that scores indexed pages against a user’s query and produces an ordered list of URLs to display. The calculation runs fresh for every query, drawing on hundreds of signals weighted dynamically based on query type, topical landscape, and ongoing algorithm updates. The output of the ranking stage is what the serving stage transforms into the visible search results page.

What it is and who it is for: The ranking stage matters for any operator who has indexed pages that need to compete for search visibility. The stage is the third of four in the Google Search pipeline, and pages that fail at ranking will not appear in search results regardless of how strong their crawl and indexing signals were upstream.

The rule: Ranking signals break across content relevance, authority, user experience, and personalization. Operators who optimize for the wrong signal category produce no movement. Operators who match optimization to the actual ranking gap move positions reliably.

Content Relevance Signals

Content relevance is the first major signal category that drives ranking. The evaluation measures how well an indexed page’s content matches the user’s query, and the calculation goes beyond simple keyword matching into semantic understanding of what the query is actually asking and which content best answers it. Operators who think of relevance as keyword density miss the dimensions that actually drive the relevance score.

The system considers term frequency at the surface level. Pages that mention the query terms more frequently in meaningful positions tend to score higher than pages that mention them less. The mechanism is not pure keyword counting; the system weights mentions in titles, headings, and opening paragraphs more heavily than mentions buried in late-page content. Operators who structure pages with clear topical signals in the high-weight positions produce stronger relevance scoring than operators who bury the topic in walls of text.
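The position weighting described above can be sketched as a toy scoring function. This is only an illustration of the idea, not Google's formula: the field names and the weights in `POSITION_WEIGHTS` are hypothetical, chosen to show that one mention in a title can outweigh several mentions in body text.

```python
import re
from collections import Counter

# Hypothetical weights. The only assumption taken from the text is the
# ordering: title, headings, and opening paragraphs count more than body.
POSITION_WEIGHTS = {"title": 3.0, "headings": 2.0, "opening": 1.5, "body": 1.0}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def weighted_term_score(page: dict[str, str], query: str) -> float:
    """Sum query-term occurrences per field, scaled by the field's weight."""
    terms = set(tokenize(query))
    score = 0.0
    for field, weight in POSITION_WEIGHTS.items():
        counts = Counter(tokenize(page.get(field, "")))
        score += weight * sum(counts[term] for term in terms)
    return score


page_a = {"title": "Ranking signals", "body": "An overview of the topic."}
page_b = {"title": "Overview", "body": "ranking ranking"}
print(weighted_term_score(page_a, "ranking"))  # one title mention
print(weighted_term_score(page_b, "ranking"))  # two body mentions
```

With these illustrative weights, the single title mention on `page_a` scores higher than the two body mentions on `page_b`, which is the structural point the paragraph makes.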

Semantic relevance operates above the term frequency layer. The system understands that synonyms, related concepts, and topical neighbors all contribute to relevance even when the exact query terms do not appear. A page about “AI memory architecture” can rank for queries about “persistent context for LLMs” because the semantic relationship between the concepts is recognized. The semantic layer is what makes thin keyword-stuffed content underperform substantive content even when the keyword-stuffed page has higher term frequency.

Topical coverage is the third dimension. Pages that comprehensively address the topic with substantive depth score higher than pages that touch the topic shallowly. The system evaluates what users searching the query would expect to find and whether the page delivers it. The evaluation is not about word count for its own sake. It is about whether the page meaningfully answers the question, addresses related sub-questions, and provides the depth that someone searching the topic would actually need.

Query intent classification happens at the relevance layer. The system categorizes queries into intent types: informational queries seeking knowledge, navigational queries seeking a specific site, transactional queries seeking to complete an action, and commercial investigation queries seeking to evaluate options. Each intent type favors different content patterns. Informational queries favor comprehensive explanatory content. Transactional queries favor pages with clear action paths. Commercial investigation queries favor comparison content with evaluation frameworks. Operators who match content type to query intent produce stronger relevance signals than operators who produce generic content regardless of intent.
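As a rough illustration of the four intent types, the sketch below guesses intent from cue words in the query. The cue lists and the `guess_intent` name are hypothetical, and a keyword lookup is far simpler than whatever classification Google actually performs; the sketch is useful mainly for tagging an operator's own target queries before deciding what content type to build.

```python
# Hypothetical cue words per intent type; checked in this order.
INTENT_CUES = {
    "transactional": ("buy", "order", "download", "subscribe"),
    "commercial investigation": ("best", "vs", "review", "compare"),
    "navigational": ("login", "homepage", "website"),
}


def guess_intent(query: str) -> str:
    """Return the first intent whose cue word appears in the query.

    Queries with no cue word fall back to "informational".
    """
    words = query.lower().split()
    for intent, cues in INTENT_CUES.items():
        if any(cue in words for cue in cues):
            return intent
    return "informational"


for q in ("buy running shoes", "best crm vs spreadsheet", "how does ranking work"):
    print(q, "->", guess_intent(q))
```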

The relationship between the page’s content and other indexed content on the same topic is itself a signal. The system considers whether the page adds something the existing index does not have, whether it duplicates content already well-covered, and whether the perspective offered is differentiated enough to warrant ranking position. Pages that say what every other indexed page already says have a harder time ranking than pages that bring substantive original perspective to the topic.

The honest read on content relevance is that the layer rewards substantive depth and original perspective in ways that thin or derivative content cannot match. Operators who produce content that comprehensively addresses topics with authentic expertise produce stronger relevance signals than operators who produce content that paraphrases what is already in the index. This difference is what produces the gap between volume-focused and depth-focused content operations over multi-year horizons.

For more on the upstream stages that deliver content to ranking, the tier articles on How Google Crawls the Web and How Google Indexes Pages cover the crawl and indexing functions. The pillar guide on How Google Search Works covers the four-stage pipeline. The sibling article on How Google Renders the SERP covers the downstream stage.

Authority Signals and the Link Graph

Authority signals form the second major category in ranking calculation. The signals measure the credibility of a page and the domain it sits on, drawing on the link graph, the publishing domain’s history, the topical authority the domain has accumulated, and the credibility markers present on the page itself. Authority is what allows otherwise comparable pages to outrank each other based on which one Google considers more trustworthy.

The link graph contribution is what most operators think of as authority, but it is one input among several rather than the dominant factor most operators assume. Google evaluates the inbound link profile of a page and a domain, considering both the quantity and quality of links. High-quality links from authoritative sources carry more weight than larger volumes of links from low-quality sources. The link graph signal also considers the topical relevance of the linking source. Links from sites topically aligned with the page’s subject contribute more than links from topically unrelated sites of comparable authority.

Domain authority and topical authority operate as related but distinct signals. Domain authority is the broad credibility signal that applies to the domain across all topics. Topical authority is the specific credibility signal for the domain’s expertise in particular subject areas. A high domain authority site without topical authority for a specific query may underperform a lower domain authority site with strong topical authority on that exact topic. The interaction is one of the dimensions that makes ranking calculation more nuanced than the simple “high DA wins” model some operators carry.

Topical authority builds through cumulative content production within a topical cluster. Sites that produce substantive depth on focused topical areas accumulate authority that lets specific pages on those topics rank in competitive landscapes where less-specialized higher-authority domains struggle. The mechanism is what makes topical clustering and content depth strategies effective ranking interventions even on smaller domains.

Page-level authority signals include the credibility of the specific page beyond its domain context. Author identification, credential signals, citation patterns, and references to authoritative sources all contribute. Pages with named authors who have established credentials in the topic area carry stronger authority signals than pages published under generic admin accounts. The author authority signal is part of what produces the documented benefit of E-E-A-T discipline in ranking outcomes.

Domain history matters at scale. Sites with consistent quality production over multi-year horizons accumulate authority that recently launched sites cannot match regardless of content quality. The signal is what makes new domains face uphill battles in competitive topical areas even when the content is excellent. The compounding effect favors operators who commit to topical focus over years rather than rotating between topics opportunistically.

Authority signals interact with the other ranking signals dynamically. A page with strong content relevance but weak authority may still rank in less competitive contexts where authority is not the binding constraint. A page with strong authority but weak relevance may rank for general queries but lose to more relevant pages on specific queries. The ranking calculation weights the signals based on the query type and competitive landscape, which means operators have to identify which signal is the binding constraint for their target queries before designing interventions.
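One way to make the "binding constraint" idea concrete is to compare a page against the strongest competitor on each signal category and pick the largest gap. The sketch below assumes the operator has already estimated a 0-to-1 score per category from their own audits; those scores, the category names, and the `binding_constraint` function are all hypothetical, since Google exposes no such numbers.

```python
def binding_constraint(
    page: dict[str, float], competitors: list[dict[str, float]]
) -> str:
    """Return the signal category where the page trails the best competitor most."""
    gaps = {}
    for signal, own_score in page.items():
        best_rival = max(rival[signal] for rival in competitors)
        gaps[signal] = best_rival - own_score
    return max(gaps, key=gaps.get)


# Illustrative, operator-estimated scores (not values reported by Google).
page = {"relevance": 0.8, "authority": 0.3, "user_experience": 0.7}
rivals = [
    {"relevance": 0.85, "authority": 0.9, "user_experience": 0.75},
    {"relevance": 0.7, "authority": 0.8, "user_experience": 0.6},
]
print(binding_constraint(page, rivals))
```

In this example the page is close to its rivals on relevance and user experience but far behind on authority, so authority is the category to work on first.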

User Experience and Core Web Vitals

User experience signals form the third major category in ranking calculation. The signals measure how usable the page is for searchers who land on it, including page speed, mobile usability, layout stability, intrusive interstitial behavior, and the broader Core Web Vitals framework. The signals do not directly determine ranking on their own but contribute to the overall score and can act as tiebreakers when other signals are roughly equivalent.

Core Web Vitals is the structured framework Google uses to measure user experience at the technical level. The framework includes Largest Contentful Paint (LCP) measuring how quickly the main content becomes visible, Interaction to Next Paint (INP) measuring how quickly the page responds to user interactions, and Cumulative Layout Shift (CLS) measuring how stable the layout is during loading. Each metric has good, needs-improvement, and poor thresholds, and a page passes only when its 75th-percentile value across real user experiences falls in the good range for each metric.
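The 75th-percentile assessment can be sketched in a few lines. The thresholds below are the published Core Web Vitals boundaries (LCP 2.5 s and 4.0 s, INP 200 ms and 500 ms, CLS 0.1 and 0.25). The nearest-rank percentile method and the function names are assumptions made for illustration; CrUX's own aggregation may differ in detail.

```python
import math

# (good upper bound, needs-improvement upper bound) per metric.
THRESHOLDS = {
    "lcp_ms": (2500, 4000),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def p75(samples: list[float]) -> float:
    """75th percentile using the nearest-rank method (an assumed method)."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric: str, samples: list[float]) -> str:
    """Classify a metric's p75 value as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


def passes_core_web_vitals(field_data: dict[str, list[float]]) -> bool:
    """A page passes only if every supplied metric rates good at p75."""
    return all(rate(metric, samples) == "good" for metric, samples in field_data.items())


field_data = {
    "lcp_ms": [1800, 2100, 2400, 3900],
    "inp_ms": [90, 120, 180, 650],
    "cls": [0.02, 0.05, 0.08, 0.30],
}
print({metric: rate(metric, samples) for metric, samples in field_data.items()})
print(passes_core_web_vitals(field_data))
```

Note that the slowest quarter of visits does not fail the page here: each metric's worst sample is outside the good range, but the 75th-percentile value is not.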

The Core Web Vitals data Google uses comes from the Chrome User Experience Report (CrUX), which aggregates real user metrics from actual page visits rather than lab-simulated tests. The distinction matters because pages can perform well in lab tests but fail CrUX measurements when real-world conditions like network variability and device diversity produce different results. Operators who optimize purely against lab tools without checking CrUX data sometimes fail to move the actual ranking signal.

Mobile usability is a separate but related signal. Since the mobile-first indexing transition, Google evaluates the mobile version of pages as the primary version for ranking. Pages that render well on desktop but produce usability issues on mobile face ranking penalties even when the desktop experience is strong. The signal includes touch target sizing, viewport configuration, font readability at mobile sizes, and content reflow behavior on small screens.

Page speed beyond Core Web Vitals contributes through general performance signals. Time to First Byte (TTFB), total page weight, render-blocking resource counts, and overall load completion time all affect the user experience evaluation. The signals interact with crawl budget at the upstream stage because faster pages allow Googlebot to crawl more efficiently, but they also affect ranking directly through the user experience layer.

Intrusive interstitials and layout disruptions degrade the user experience signal. Pages that show full-screen ads on load, force email signups before content access, or push the main content far below the fold with promotional content produce signals that reduce ranking position. The mechanism is what produces the documented ranking penalty for overly aggressive monetization patterns.

HTTPS implementation is a baseline user experience signal. Sites that serve content over unencrypted HTTP have been at a ranking disadvantage for years, and the signal has strengthened over time. It is now better characterized as a baseline requirement than as a positive ranking factor: sites without HTTPS are disadvantaged, rather than sites with HTTPS being rewarded.

The honest read on user experience signals is that they are necessary but not sufficient for competitive ranking. Pages with poor Core Web Vitals lose ranking position even when other signals are strong. Pages with excellent Core Web Vitals do not automatically rank well; they just remove a barrier to ranking. Operators who treat user experience as a sufficient ranking strategy by itself are usually disappointed. Operators who treat it as a foundation that supports the other ranking signals see the benefit compound across the full signal stack.

Personalization and Query Context

Personalization is the layer that adjusts the ranking calculation based on the specific searcher and the specific query context. The layer is applied on top of the otherwise generic ranking calculation to produce the actual ordered results an individual user sees. Operators who do not understand personalization sometimes mistake personalized ranking variations for genuine ranking changes, which leads to inconsistent diagnostic conclusions.

Location signals are the dominant personalization input. The system considers the searcher’s geographic location and adjusts results based on geographic relevance to the query. Local intent queries like “coffee shop” or “plumber” receive heavy location personalization that surfaces nearby businesses regardless of broader rankings. Even non-local queries receive subtler location adjustments based on regional search patterns, language variants, and content relevance to the searcher’s region.

Search history can adjust results based on previously visited domains, topics the searcher has shown interest in, and patterns from the searcher’s broader query history. The mechanism is most visible when the same query produces noticeably different results for two users with different search histories. Personalization based on history is more conservative than location personalization and produces smaller adjustments rather than entirely different result sets.

Device type affects the calculation when results vary in usefulness across desktop and mobile contexts. Mobile searches tend to favor results with strong mobile experiences, results from local businesses for location-relevant queries, and results that work well in mobile-first interaction patterns. Desktop searches have different surface signals and different result preferences, especially for queries where desktop tools or detailed comparison content is more useful than mobile-friendly summaries.

Query context includes the broader signal patterns the system reads from query construction. Long-tail queries with specific phrasing produce different ranking weights than short-tail queries with general phrasing. Question-format queries trigger different ranking patterns than statement-format queries. Queries with explicit modifiers like “near me” or “in 2026” or “for beginners” produce specific ranking adjustments that match the modifier intent.

The temporal dimension affects ranking through freshness signals. Some queries favor recent content because the topic is time-sensitive. News queries, current event queries, and rapidly evolving topical areas weight freshness heavily. Other queries favor evergreen content because the topic is stable and recent surface-level coverage may be less authoritative than established comprehensive references. The system classifies queries by their freshness sensitivity and weights ranking signals accordingly.
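The interaction between freshness sensitivity and content age can be sketched with a simple decay model. Everything here is hypothetical: the exponential half-life, the 0-to-1 `sensitivity` value, and the blending formula are illustrative choices, not a description of how Google computes freshness.

```python
def freshness_factor(age_days: float, half_life_days: float = 30.0) -> float:
    """Exponential decay: 1.0 for brand-new content, 0.5 after one half-life."""
    return 0.5 ** (age_days / half_life_days)


def blended_score(base_score: float, age_days: float, sensitivity: float) -> float:
    """Blend a base score with freshness.

    sensitivity = 0.0 ignores age entirely (evergreen query);
    sensitivity = 1.0 scales the score fully by the freshness factor.
    """
    weight = (1.0 - sensitivity) + sensitivity * freshness_factor(age_days)
    return base_score * weight


# An older, stronger page versus a newer, weaker page.
old_page = {"base": 0.9, "age_days": 365}
new_page = {"base": 0.7, "age_days": 1}

for label, sensitivity in (("evergreen query", 0.0), ("news query", 0.9)):
    old = blended_score(old_page["base"], old_page["age_days"], sensitivity)
    new = blended_score(new_page["base"], new_page["age_days"], sensitivity)
    print(label, "-> older page wins" if old > new else "-> newer page wins")
```

Under these assumptions the older page wins the evergreen query on its stronger base score, while the newer page wins the freshness-sensitive query, mirroring the two cases described above.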

The personalization layer means that two searchers entering the same query at the same moment can see different ranked results, which is why ranking position checks should use neutral browsers, location-spoofed sessions, or specialized rank-tracking tools rather than personalized searches. Operators who check rankings through their own browsers without controlling for personalization often see their own pages ranking higher than they actually rank for typical users, which produces overly optimistic ranking conclusions.

Where Ranking Actually Fails

Ranking failures break across recognizable patterns, and operators who can identify the specific failure mode can apply the targeted intervention. The diagnosis matters because pages that are not ranking well often look superficially similar across different failure types, but the fixes differ depending on which signal is actually weak.

The first failure pattern is insufficient relevance signals. The page is indexed but does not score high enough on the relevance dimension for its target queries. The pattern shows up as pages that rank for tangentially related queries but not the queries the operator wants. The root causes include content that does not address the target query directly, missing semantic depth, and structural issues that bury the topical signal in low-weight page positions. The fix is content-side: rewrite the page to address the target query more directly, add semantic depth around the topic, and surface the topical signal in high-weight positions like the title, headings, and opening paragraphs.

The second failure pattern is insufficient authority signals. The page has strong relevance but gets outranked by higher-authority competitors. The pattern shows up as pages that rank in the 6-15 range for their target queries while higher-authority pages dominate the top 5. The root causes include weak link profile, low topical authority for the specific subject, and absence of credibility markers like author identification. The fix is authority-side: build links from topically relevant authoritative sources, develop topical depth across the cluster, and add the author and credibility infrastructure that the page lacks.

The third failure pattern is the topical authority gap. The domain ranks well for some topics but cannot break through on others despite strong individual page signals. The pattern shows up as inconsistent ranking performance across the site, with some clusters performing well and others flatlining. The root causes include shallow content coverage in the underperforming clusters, mixed topical signals that confuse Google about what the domain is actually authoritative on, and competitive topical landscapes where established competitors have years of accumulated authority. The fix is topical focus: invest in cluster depth on the underperforming topics, prune content that dilutes topical signals, and accept that breaking into established topical landscapes takes sustained commitment.

The fourth failure pattern is user experience drag. The page has strong relevance and authority but fails to rank because user experience signals are weak. The pattern shows up as pages that should rank well based on content and authority signals but consistently underperform expectations. The root causes include poor Core Web Vitals scores, mobile usability issues, intrusive interstitials, or slow server response times. The fix is technical: address the specific Core Web Vitals metrics that fail, audit mobile experience through mobile-specific testing, remove interstitial patterns, and optimize server performance.

The fifth failure pattern is the personalization confusion. The page actually ranks well for typical users but the operator’s personalized search results show different positions. The pattern shows up when operators check rankings from their own browsers and see results that do not match what their target audience sees. The root cause is failing to control for personalization signals during ranking checks. The fix is to use neutral ranking tools, location-spoofed sessions, or incognito browsing with specific location settings to get representative ranking data.

The sixth failure pattern is the freshness mismatch. The page is comprehensive and authoritative but the query type favors recent content that the page does not provide. The pattern shows up on time-sensitive queries where the page ranks below newer content despite stronger authority signals. The fix is content freshness: update the page with recent information, refresh the publication date when substantial updates are made, and ensure the page reflects the current state of the topic rather than the state at original publication.

The honest read on ranking failures is that the diagnosis determines the intervention. Operators who confuse relevance failures with authority failures spend effort on the wrong layer. Operators who confuse user experience drag with content quality issues produce no improvement because they are improving a different signal than the one that is actually weak. Search Console performance reports, combined with careful query analysis, are the diagnostic instruments that enable precision.
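As a starting point for that diagnosis, the sketch below filters query rows for the 6-15 position band that the second failure pattern describes. It assumes the rows were exported from a Search Console performance report into dictionaries with `query`, `position`, and `impressions` keys; those key names and the `authority_candidates` function are hypothetical, and a position in that band is only a hint that authority may be the weak signal, not proof.

```python
def authority_candidates(
    rows: list[dict], low: float = 6.0, high: float = 15.0
) -> list[dict]:
    """Return rows whose average position falls in [low, high],
    ordered by impressions so the highest-opportunity queries come first."""
    in_band = [row for row in rows if low <= row["position"] <= high]
    return sorted(in_band, key=lambda row: row["impressions"], reverse=True)


# Illustrative rows, not real data.
rows = [
    {"query": "example query a", "position": 3.2, "impressions": 500},
    {"query": "example query b", "position": 8.4, "impressions": 120},
    {"query": "example query c", "position": 12.0, "impressions": 900},
    {"query": "example query d", "position": 40.1, "impressions": 50},
]
for row in authority_candidates(rows):
    print(row["query"], row["position"], row["impressions"])
```

Queries that land in this band with strong on-page relevance are the ones worth comparing against the top-5 competitors' link profiles and credibility markers.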

Operator Leverage Points at the Ranking Stage

The leverage points for operators at the ranking stage break across the major signal categories: content relevance, authority, user experience, and personalization. Each category has interventions that act on it directly and other interventions that affect it indirectly through related signals.

Content relevance leverage points include topical depth investment, semantic coverage expansion, query intent matching, and structural optimization that surfaces topical signals in high-weight positions. Topical depth produces the comprehensive coverage that the relevance evaluation rewards. Semantic coverage expansion produces the related-concept signal that lifts pages above thin keyword-targeted content. Query intent matching aligns content type with the queries the page targets. Structural optimization places the topical signal where the system weights it most heavily.

Authority leverage points include link acquisition through editorial placement, topical authority development through cluster depth, credibility infrastructure including author identification and citation patterns, and domain history that compounds over multi-year horizons. Authority interventions operate on a longer timescale than relevance interventions. Content relevance changes can move ranking within weeks. Authority changes produce ranking effects that take months to fully manifest as the signals propagate through the system.

User experience leverage points include Core Web Vitals optimization, mobile usability improvements, interstitial removal, and server performance tuning. The interventions are technical and produce measurable improvements in CrUX data within weeks of implementation. The ranking effect of the user experience improvements is usually a tiebreaker rather than a primary mover, which means operators should treat user experience as foundational support for the other signals rather than as a primary ranking strategy.

Personalization is not directly a leverage point because personalization signals come from the user side rather than the operator side. The operator’s leverage is to ensure ranking checks control for personalization so the diagnostic data accurately reflects how the page ranks for typical users. Operators who design content to perform across personalization variations rather than optimizing for their own personalized view produce more representative ranking outcomes.

The diagnostic discipline ties the leverage points together. Operators who can identify which signal category is the binding constraint for their target queries apply the right intervention the first time. Operators who cannot diagnose specifically often spend months on generic SEO improvements that do not address the actual weak signal. The Search Console performance reports, the ranking distribution analysis across queries, and the competitive landscape analysis are the diagnostic instruments that enable precision.

For more on the operational disciplines that support ranking-stage work, the Content discipline covers the content-side architecture that produces relevance and topical authority signals, and the Credibility discipline covers the authority-side architecture.

Verdict

The ranking stage is the calculation layer of the Google Search pipeline. The function scores indexed pages against queries in real time, drawing on hundreds of signals weighted dynamically based on query type and topical landscape. The output is an ordered list of URLs that the serving stage transforms into the visible search results page. Operators who understand the signal categories can match interventions to the actual ranking gap. Operators who treat ranking as one undifferentiated activity often optimize for the wrong signal.

Content relevance is the first major signal category. The evaluation measures how well the page’s content matches the query, considering term frequency, semantic relevance, topical coverage, query intent alignment, and the page’s relationship to other indexed content on the same topic. Pages that comprehensively address topics with substantive depth and original perspective produce stronger relevance signals than pages that paraphrase what is already in the index.

Authority signals form the second category. The signals draw on the link graph, domain authority, topical authority, page-level credibility markers, and domain history. The link graph contribution is one input among several rather than the dominant factor, and topical authority can let smaller domains outrank higher-authority competitors on specific topics where the smaller domain has accumulated specialized credibility. Authority changes propagate over months, not weeks.

User experience signals form the third category. Core Web Vitals, mobile usability, interstitial behavior, and HTTPS implementation all contribute. The signals are necessary but not sufficient for competitive ranking; pages with poor user experience lose position even when other signals are strong, but pages with excellent user experience need the other signals to actually rank well.

Personalization adjusts the calculation for individual searchers based on location, search history, device type, query context, and temporal dimension. The layer means that two searchers entering the same query simultaneously can see different ranked results. Operators need to control for personalization during ranking checks to get representative diagnostic data.

The failure patterns at the ranking stage are diagnosable. Insufficient relevance, insufficient authority, topical authority gaps, user experience drag, personalization confusion, and freshness mismatch each have specific signatures and specific fixes. Operators who diagnose specifically apply the right intervention the first time. Operators who do not often spend months on generic SEO improvements that cannot move ranking because the changes target the wrong signal category.

The leverage points include topical depth investment, link acquisition through editorial placement, Core Web Vitals optimization, and the diagnostic discipline that ties them together. The interventions operate on different timescales: relevance changes can move ranking in weeks, authority changes take months, and user experience changes produce measurable effects within weeks but act as tiebreakers rather than primary movers.

For the broader pipeline framework, the pillar guide on How Google Search Works covers all four stages. The sibling articles on How Google Crawls the Web, How Google Indexes Pages, and How Google Renders the SERP cover the stages that surround the ranking calculation in the pipeline.

Frequently Asked Questions

What are the most important Google ranking signals?

Ranking signals break across four major categories: content relevance (how well the page matches the query), authority signals (link graph, domain authority, topical authority, and credibility markers), user experience (Core Web Vitals, mobile usability, interstitial behavior), and personalization (location, search history, device type, query context). The signals do not contribute equally; their weighting shifts based on query type, topical landscape, and ongoing algorithm updates. The binding constraint for any specific page-query combination is what determines which intervention will actually move ranking position.

How long does it take to see ranking improvements after making changes?

The timescale depends on what was changed. Content relevance changes can move ranking within weeks as Google reprocesses the page and re-evaluates it against queries. Authority changes from new backlinks take months to fully manifest as the link graph signals propagate. User experience changes produce measurable effects within weeks once the Core Web Vitals data updates in CrUX. Topical authority development is a multi-year investment that compounds slowly.

Why is my page indexed but not ranking?

Indexed but not ranking means the page is in the index but losing the ranking calculation against competitors. The diagnosis depends on which signal is the binding constraint. Pages with weak content relevance need content-side work to address the target query more directly. Pages with weak authority need link acquisition and topical authority development. Pages with user experience drag need technical improvements to Core Web Vitals and mobile usability. The Search Console performance report combined with competitive analysis identifies which signal category is actually weak.

Does domain authority matter more than topical authority?

No. Domain authority is the broad credibility signal, but topical authority is the specific credibility signal for a domain’s expertise in particular subject areas. A high domain authority site without topical authority for a specific query may underperform a lower domain authority site with strong topical authority on that exact topic. The interaction is what makes topical clustering and content depth strategies effective ranking interventions even on smaller domains.

How does Google determine query intent?

The system classifies queries into intent types: informational queries seeking knowledge, navigational queries seeking a specific site, transactional queries seeking to complete an action, and commercial investigation queries seeking to evaluate options. Each intent type favors different content patterns. The classification draws on the query construction, the historical behavior of users searching the query, and the content patterns that produce the best engagement outcomes for the query. Operators who match content type to query intent produce stronger relevance signals than operators who produce generic content regardless of intent.

Are Core Web Vitals a major ranking factor?

Core Web Vitals contribute to ranking but as supporting signals rather than dominant factors. Pages with poor Core Web Vitals lose ranking position even when other signals are strong. Pages with excellent Core Web Vitals do not automatically rank well; they remove a barrier to ranking but need the other signals to actually compete. Treating Core Web Vitals as a sufficient ranking strategy by itself produces disappointment. Treating it as foundational support for the other ranking signals produces compounding effects.

Why do my rankings look different from what tracking tools show?

Rankings are personalized based on location, search history, device type, and other contextual signals. The same query can produce different ranked results for different users. Operators who check rankings through their own browsers see personalized results that include their own browsing history, which often inflates apparent ranking position. Neutral ranking tools, location-spoofed sessions, or incognito browsing with controlled location settings produce more representative ranking data than personalized searches.

Can I rank without backlinks?

For low-competition queries on topics where the publishing domain has accumulated topical authority, ranking without significant backlinks is possible. Long-tail queries, niche topics, and specialty areas often rank based on content relevance and topical authority signals more than link graph signals. Competitive queries in established topical areas almost always require backlink support because the existing competitors have authority signals that pure content quality cannot match.

How does freshness affect ranking?

Freshness affects ranking based on the query’s freshness sensitivity. News queries, current event queries, and rapidly evolving topical areas weight freshness heavily, with recent content often outranking older content even when authority signals favor the older pages. Evergreen queries on stable topics weight freshness lightly, with established comprehensive references often outranking newer surface-level coverage. The system classifies queries by freshness sensitivity and adjusts ranking weights accordingly.