How Google Search Works: The Complete Guide for SEO in 2026

AI Summary

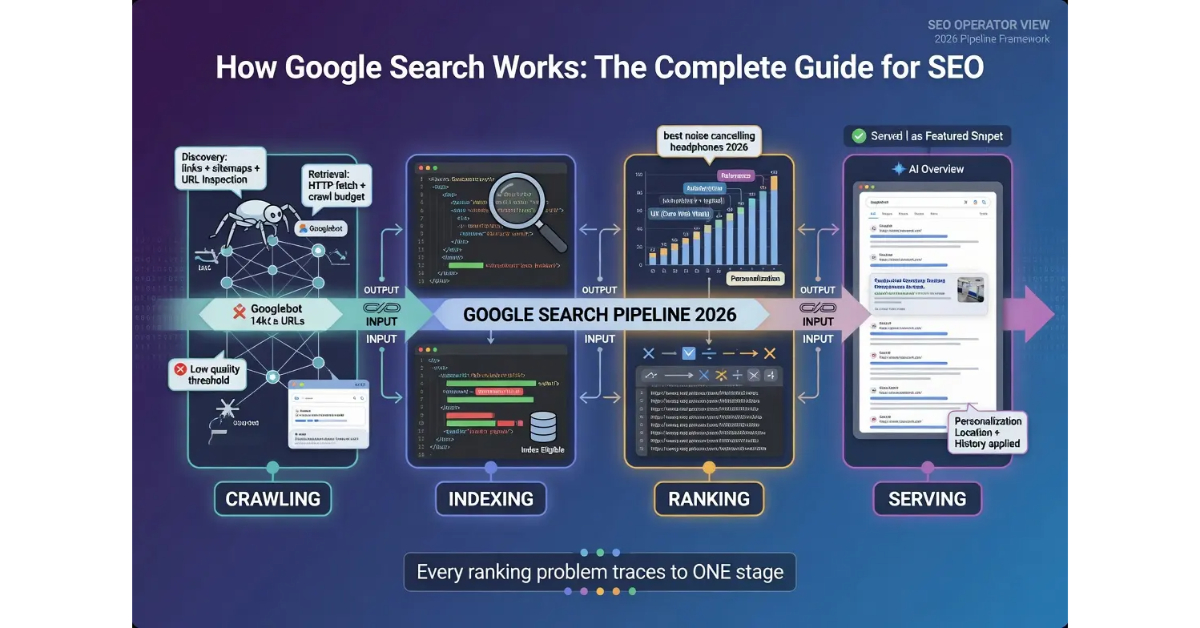

What is the Google Search pipeline? Google Search runs on a four-stage pipeline: crawling, indexing, ranking, and serving. Each stage is a separate system with separate functions, separate failure modes, and separate intervention points for SEO operators. Understanding the pipeline is the difference between SEO that produces results and SEO that produces theories.

What it is and who it is for: The pipeline framework matters for any operator who needs to diagnose why pages are not ranking, decide where to invest SEO effort, or understand how technical and content interventions actually flow through Google’s systems. The four-stage model replaces the vague “Google ranks pages” mental model with a precise system that can be measured, debugged, and optimized.

The rule: Every page that ranks in Google has passed through all four stages. Every ranking problem traces to one of the four stages. Operators who diagnose at the wrong stage waste effort on interventions that cannot fix the actual failure point.

The Four-Stage Pipeline

Google Search is not one system. It is four systems connected in sequence, and each system performs a specific function that the next system depends on. The four stages are crawling, indexing, ranking, and serving. Every page that appears in Google search results has passed through all four stages. Every page that does not appear has failed at one of the four stages, and the stage at which the failure occurred determines what intervention can fix it.

The crawling stage is the discovery layer. Googlebot finds URLs through links, sitemaps, and direct submission, then fetches the content of each URL it finds. The crawler does not evaluate content quality, does not decide rankings, and does not make decisions about what users will eventually see. The crawler’s job is to find URLs and retrieve their content, then hand the content off to the indexing system.

The indexing stage is the evaluation layer. The indexing system analyzes the crawled content, parses the HTML, processes structured data, evaluates quality signals, and decides whether the page belongs in the index. Indexed pages are stored in Google’s database and become eligible to rank for queries. Pages that fail evaluation are crawled but not indexed, which is a distinct outcome from not being crawled at all.

The ranking stage is the calculation layer. When a user submits a query, Google’s ranking systems calculate which indexed pages best answer the query and in what order they should appear. The ranking calculation runs in real time for every query, drawing on hundreds of signals to score the relevance and authority of each potentially relevant page. The output of the ranking stage is an ordered list of URLs that the next stage will render.

The serving stage is the rendering layer. The ranked list of URLs gets transformed into the actual search results page that users see. The serving layer makes decisions about which result formats to use, which features to display, which content elements to extract from each page, and how to apply personalization signals. Two users searching the same query at the same moment can see different served results based on personalization applied at this final stage.

The four stages run as a sequential pipeline because each stage depends on the output of the previous stage. A page that is not crawled cannot be indexed. A page that is not indexed cannot be ranked. A page that is not ranked cannot be served. The dependency chain means that a failure at any earlier stage prevents any later stage from running for that page, and operators who try to fix a stage that is not the actual failure point will not see improvement regardless of how much effort they invest.

The shorthand version: Google Search is a four-stage sequential pipeline where each stage performs a distinct function. SEO interventions operate at specific stages, and operators who match interventions to stages produce results that operators who treat the system as one undifferentiated whole cannot reliably produce.

The Crawling Stage

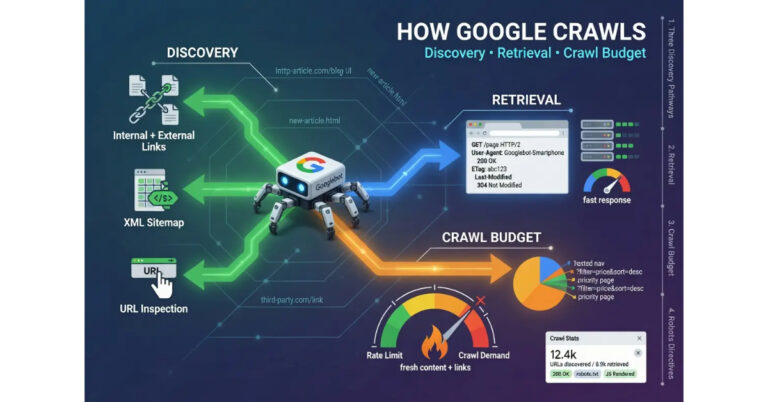

The crawling stage is where Googlebot discovers URLs and fetches their content. The stage has two distinct functions: discovery and retrieval. Discovery is how Google finds URLs to crawl. Retrieval is how Google fetches the content of URLs once they have been discovered. Both functions matter for SEO, and operators who think of crawling as one undifferentiated activity miss the leverage points that exist at each function.

Discovery happens through three primary pathways. The first pathway is internal and external linking. Googlebot follows links from pages it already knows about to find new URLs. A new article published on an established site gets discovered when Googlebot crawls a page that links to the new article. The second pathway is sitemap submission. Operators who submit XML sitemaps through Google Search Console give Googlebot a direct list of URLs to crawl, which accelerates discovery for new content that may not yet have inbound links. The third pathway is direct submission through the URL Inspection tool, which lets an operator request a crawl of a specific URL. The request queues the URL for crawling but does not guarantee an immediate crawl or eventual indexing.

The honest read on discovery is that linking remains the most reliable mechanism for sustained discovery on an established site. Sitemaps work, but Google treats them as hints rather than instructions. Direct submission works for individual URLs but does not scale. Internal linking from existing indexed content is what produces the consistent crawl patterns that let new content get discovered without manual intervention for every page.
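The sitemap pathway can be illustrated with a minimal sketch that builds an XML sitemap using only the Python standard library. The function name `build_sitemap` and the example URLs are assumptions made for illustration; the element names and namespace follow the public sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build a sitemap from (url, lastmod) pairs.

    lastmod is a 'YYYY-MM-DD' string, or None to omit the element.
    Returns the document as UTF-8 encoded bytes.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    # Prepend the XML declaration by hand so the output is the same
    # across Python versions.
    declaration = b'<?xml version="1.0" encoding="UTF-8"?>\n'
    return declaration + ET.tostring(urlset, encoding="utf-8")


if __name__ == "__main__":
    # Hypothetical URLs, for illustration only.
    sitemap = build_sitemap([
        ("https://example.com/guide", "2026-01-15"),
        ("https://example.com/new-article", None),
    ])
    print(sitemap.decode("utf-8"))
```

Because sitemaps are treated as hints, a file like this complements internal linking rather than replacing it.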

Retrieval is the actual fetch operation. Googlebot makes an HTTP request to the URL, receives a response, and processes whatever the server returns. The retrieval function depends on server availability, response codes, content delivery speed, and the presence or absence of directives that tell Googlebot how to handle the URL. A site with intermittent server availability will have inconsistent retrieval even when discovery is working perfectly.

The crawl budget concept becomes relevant at the retrieval function. Google allocates a finite amount of crawl resources to each site based on the site’s authority, the freshness of its content, and the technical efficiency of its server responses. Sites with high crawl budget get crawled deeply and frequently. Sites with low crawl budget get crawled shallowly and infrequently. The budget is not visible directly, but it shows up in the Crawl Stats report in Google Search Console as the volume of crawl requests Google makes against the site over time.

The crawling stage produces specific outputs that the next stage depends on. Each successful crawl delivers the page content, the response headers, and any directives that affect how the indexing system should treat the page. These directives include the robots meta tag, X-Robots-Tag headers, canonical link references, and hreflang annotations. They are retrieved during the crawl but take effect at indexing. Operators who use these directives correctly shape what happens at the next stage. Operators who use them incorrectly create indexing failures that are invisible at the crawl stage but block pages from progressing through the pipeline.
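A directive audit of the kind described here can be sketched in a few lines. The example below is a simplified illustration, not Google's logic: the function names are hypothetical, `headers` is assumed to be a plain dict of response headers, and user-agent-scoped header values such as `googlebot: noindex` are deliberately not handled.

```python
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for part in attr.get("content", "").split(","):
                if part.strip():
                    self.directives.add(part.strip().lower())


def indexing_directives(html, headers):
    """Return the set of robots directives found in the HTML and the headers."""
    parser = _RobotsMetaParser()
    parser.feed(html)
    found = set(parser.directives)
    for name, value in headers.items():
        # Simplification: treats the header value as a comma-separated list
        # and ignores user-agent prefixes.
        if name.lower() == "x-robots-tag":
            for part in value.split(","):
                if part.strip():
                    found.add(part.strip().lower())
    return found


def blocks_indexing(html, headers):
    """True if a 'noindex' (or 'none') directive is present in either place."""
    found = indexing_directives(html, headers)
    return "noindex" in found or "none" in found


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    print(blocks_indexing(page, {}))  # the meta tag carries noindex
```

Checking both the HTML and the headers matters because a page can return clean markup while a server-level X-Robots-Tag header still excludes it.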

For more on the operational discipline that supports crawl optimization, the tier article on How Google Crawls the Web covers the deeper mechanics. The sibling articles on How Google Indexes Pages, How Google Ranks Search Results, and How Google Renders the SERP cover the other stages.

The Indexing Stage

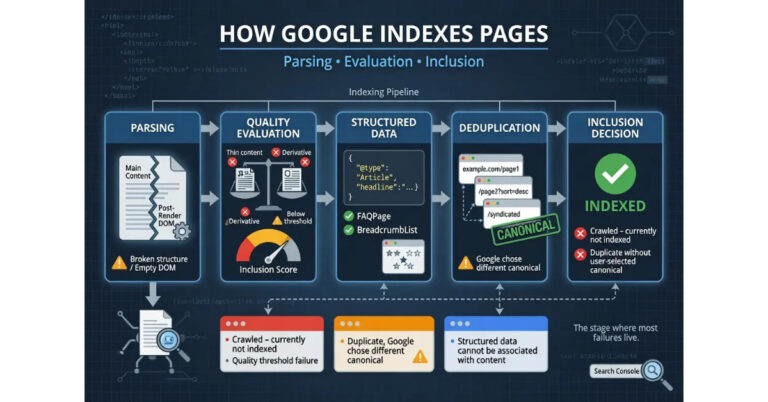

The indexing stage is where Google decides whether a crawled page belongs in the search index. The stage has multiple distinct functions that operate in sequence: parsing the crawled content, evaluating quality signals, processing structured data, deduplicating against existing indexed content, and making the final decision about inclusion. Each function can produce a different failure mode, and operators who treat indexing as a single binary outcome miss the precision required to diagnose indexing problems accurately.

Parsing is the first function. The indexing system takes the raw HTML delivered by the crawler and extracts the meaningful content from it. The parser identifies the main content area, the navigation elements, the boilerplate sections, and the structured data markup. The parser handles JavaScript-rendered content through a separate rendering layer that executes the page’s JavaScript and produces the post-render DOM. Pages that depend on JavaScript for primary content delivery go through this rendering layer, which adds latency and can produce parsing differences from server-rendered content.

Quality evaluation is the function where most indexing failures actually occur. The indexing system evaluates the parsed content against signals including content uniqueness, depth of coverage, technical health, internal linking patterns, and the perceived value of adding the page to the index relative to similar content already there. Pages that score above the threshold get indexed. Pages that score below the threshold get the “Crawled – currently not indexed” status that operators see in Google Search Console. The threshold is not fixed; it shifts based on the topical landscape, the volume of similar content already indexed, and the authority of the publishing domain.

Structured data processing happens in parallel with quality evaluation. The indexing system reads the JSON-LD, microdata, and RDFa markup on the page and uses it to enrich the indexed record with structured information about entities, relationships, and content types. Pages with valid structured data become eligible for rich result formats at the serving stage. Pages with broken or absent structured data still get indexed but do not gain the rich result eligibility.
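As an illustration of the JSON-LD markup the indexing system reads, the sketch below generates a schema.org `Article` block. The function name, the choice of properties, and the sample values are assumptions for the example; which properties a given rich result type requires is defined in Google's structured data documentation, not here.

```python
import json


def article_jsonld(headline, author_name, date_published, url):
    """Return a <script> tag containing schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value can never close the script element early;
    # "<\/" is still valid JSON.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    print(article_jsonld(
        "How Google Search Works",
        "Jane Doe",
        "2026-01-15",
        "https://example.com/guide",
    ))
```

Generating the markup from the same data that renders the page helps keep the structured data consistent with the visible content.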

Deduplication is the function that decides what to do when multiple URLs contain substantially similar content. The indexing system identifies the canonical version among duplicates and indexes that version while excluding the others. Canonical decisions consider explicit canonical link annotations, internal linking patterns, redirect chains, and content similarity calculations. Operators who manage canonicalization correctly preserve the index slot for their preferred URL. Operators who neglect it cede that slot to whichever URL the system selects.
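One way to audit the explicit canonical annotation is to extract it from the page and compare it with the URL being checked. The sketch below is a minimal, assumption-laden version: the function names are hypothetical, it inspects only `<link rel="canonical">` in the HTML, and it says nothing about which URL Google will ultimately select.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class _CanonicalParser(HTMLParser):
    """Collects href values from every <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = {key: (value or "") for key, value in attrs}
        if "canonical" in attr.get("rel", "").lower().split() and attr.get("href"):
            self.hrefs.append(attr["href"])


def declared_canonical(html, page_url):
    """Return the absolute canonical URL, or None if missing or conflicting."""
    parser = _CanonicalParser()
    parser.feed(html)
    if len(parser.hrefs) != 1:
        return None  # zero or multiple canonical tags: nothing unambiguous to report
    return urljoin(page_url, parser.hrefs[0])


def is_self_canonical(html, page_url):
    """True when the page declares itself as the canonical version."""
    return declared_canonical(html, page_url) == page_url


if __name__ == "__main__":
    page = '<head><link rel="canonical" href="/guide"></head>'
    # A parameterized URL that points back to the clean URL as canonical.
    print(declared_canonical(page, "https://example.com/guide?utm=1"))
```

A page whose declared canonical differs from its own URL is asking to be deduplicated in favor of the other URL, which is worth confirming is intentional.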

The final decision combines the outputs of all the previous functions into a single binary outcome: index or do not index. Indexed pages enter the database and become eligible for ranking. Non-indexed pages are stored as crawled but excluded, with the exclusion reason logged in Google Search Console for operator review. The exclusion reasons fall into recognizable categories: duplicate content, low quality signals, technical directives blocking indexing, and the catch-all “Crawled – currently not indexed” status that indicates the page reached evaluation but did not meet the threshold for inclusion.

The honest read on indexing is that it is the stage where many SEO problems actually live, but operators often try to fix them at the wrong stage. A page that is not ranking might be indexed but ranking poorly, which is a ranking-stage problem. A page that is not ranking might be indexed but excluded from the served results for a specific query, which is a serving-stage problem. A page that is not ranking might not be indexed at all, which is an indexing-stage problem. Each diagnosis points to a different intervention, and confusing them produces wasted effort.

For the deeper mechanics of the indexing decision, the tier article on How Google Indexes Pages covers the operational discipline.

The Ranking Stage

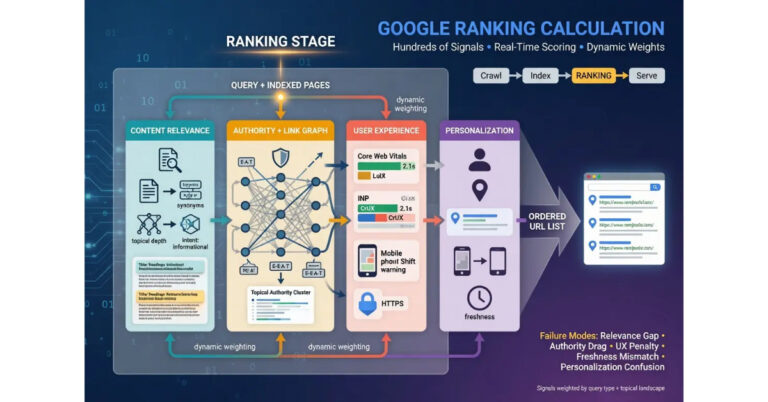

The ranking stage is where Google calculates which indexed pages best answer a given query and in what order they should appear. The calculation runs in real time for every query, not in advance. Each search Google receives triggers a fresh ranking calculation against the indexed pages that match the query terms, with hundreds of signals contributing to the final score for each candidate page. The output is an ordered list of URLs that the serving stage will render into the actual results page.

The signals that drive ranking break across several categories. Content relevance signals measure how well the indexed page’s content matches the query. Authority signals measure the credibility of the page and the domain it sits on. User experience signals measure how usable the page is for searchers who land on it. Personalization signals adjust the calculation based on the searcher’s location, search history, and other contextual factors. The signals do not contribute equally; their weighting shifts based on the query type, the topical landscape, and ongoing algorithm updates.

Content relevance is calculated through analysis of the indexed page’s content against the query terms. The system considers term frequency, semantic relevance, topical coverage, and the relationship between the page’s content and other indexed content on the same topic. Pages that comprehensively cover a topic with substantive depth tend to score higher than pages that touch the topic shallowly. The relevance calculation is not just keyword matching; it incorporates the system’s understanding of what the query is actually asking and which content best answers it.

Authority signals draw on the link graph, the publishing domain’s history, the topical authority the domain has accumulated, and the credibility markers present on the page itself. The link graph contribution is what most operators think of as authority, but it is one input among several. A page on a domain with strong topical authority on the query subject can outrank pages on higher-authority domains that lack the topical specialization. The interaction between domain-level and topic-level authority is one of the dimensions that makes ranking calculation more complex than the simple “high DA wins” model some operators carry.

User experience signals include page speed, mobile usability, layout stability, intrusive interstitial behavior, and the broader Core Web Vitals framework. The signals do not directly determine ranking on their own but contribute to the overall score and can act as tiebreakers when other signals are roughly equivalent. Operators who treat user experience as separate from SEO miss that the experience signals feed directly into the ranking calculation.

Personalization is the final layer that adjusts the calculation for the specific searcher. Location signals shift results based on geographic relevance. Search history can adjust results based on previously visited domains or topics the searcher has shown interest in. Device type can affect the calculation when results vary in usefulness across desktop and mobile. The personalization layer means that two searchers entering the same query at the same moment can see different ranked results, which is why ranking position checks should use neutral browsers, location-spoofed sessions, or specialized rank-tracking tools rather than personalized searches.

The ranking stage produces an ordered list of URLs that the serving stage transforms into the visible results page. The ranking stage does not decide what the results page looks like, which features appear, or how the rendered results are formatted. Those decisions happen at the serving stage, which uses the ranked list as input but applies its own logic on top.

For the deeper mechanics of how ranking signals interact, the tier article on How Google Ranks Search Results covers the operational discipline.

The Serving Stage

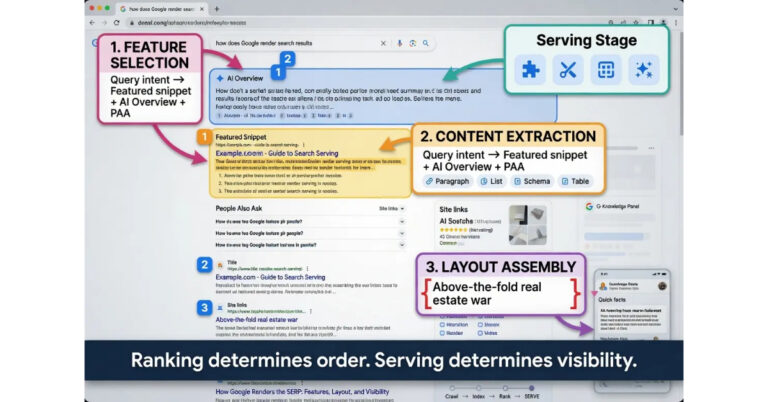

The serving stage is where the ranked list of URLs gets transformed into the actual search results page that users see. The stage is often invisible to operators because it happens between the ranking calculation and the rendered page, but it has its own logic, its own decisions, and its own failure modes that affect whether ranked pages actually appear the way operators expect.

The first function at the serving stage is feature selection. Google decides which SERP features to show for the query: featured snippets, People Also Ask boxes, image packs, video carousels, knowledge panels, local packs, AI Overviews, and dozens of other formats. The feature selection draws on query intent classification, the indexed content available to populate the features, and ongoing experimentation with which features produce the best user outcomes for the query type. A page that ranks number three organically can still appear above the organic results if it gets pulled into a featured snippet at the serving stage.

The second function is content extraction. When a page appears in a SERP feature, the serving system extracts specific content elements from the page to populate the feature. The extracted elements come from the indexed page content, the structured data markup, and sometimes the page’s metadata. Featured snippets pull a specific paragraph, list, or table. Rich results pull structured data. People Also Ask answers pull short content blocks that match the question intent. The extraction logic is what determines whether a page benefits from feature eligibility or just gets the basic blue link.

The third function is layout assembly. The serving system assembles the selected features and ranked organic results into the final page layout. Layout decisions include which features appear above the organic results, which appear interspersed with them, and how many organic results appear above the fold versus below. The layout is not fixed; it varies by query, by device, by user signals, and by ongoing experimentation with what produces the best user experience for the specific query.

The fourth function is personalization. The serving system applies personalization signals at the final rendering layer. Location signals can shift which businesses appear in local packs, which news sources appear for current events queries, and which content formats get prioritized for the searcher’s region. Search history can subtly adjust which results get visual prominence even when the underlying ranking order does not change. Device type affects layout decisions because mobile and desktop SERPs have different real estate constraints.

The serving stage is where SERP features become the leverage point that pure ranking optimization misses. A page ranking number five organically can drive more clicks than a page ranking number two if the number five page gets pulled into a featured snippet while the number two page does not. The serving stage decisions about feature eligibility are partly driven by the ranking stage but include their own evaluation that pages can fail or pass independently.

The honest read on serving is that operators who understand the stage can target SERP features deliberately rather than treating them as accidental wins. Structured data investment becomes meaningful at the serving stage because it determines rich result eligibility. Content structure becomes meaningful because featured snippet extraction prefers specific structural patterns. The same content optimized for the ranking stage but not the serving stage produces ranking without visibility, while content optimized for both produces ranking with feature placement that compounds the visibility.

For the deeper mechanics of how the SERP gets rendered, the tier article on How Google Renders the SERP covers the operational discipline.

Where Each Stage Fails

Each stage of the pipeline has distinct failure modes, and operators who diagnose at the wrong stage waste effort on interventions that cannot fix the actual problem. Naming the failure modes by stage clarifies what to investigate when ranking outcomes do not match expectations.

Crawling-stage failures break across two categories. Discovery failures happen when Googlebot cannot find URLs to crawl. The symptoms include new pages that never get crawled, sitemap URLs that show in Google Search Console as “Discovered – currently not indexed,” and pages buried deep in site architecture without inbound internal links. Retrieval failures happen when Googlebot finds the URL but cannot fetch the content. The symptoms include server errors during crawl attempts, robots.txt directives blocking the URL, and pages that crawl successfully but with incomplete content because the server response was slow or partial.
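The robots.txt symptom is the easiest of these to check programmatically. The sketch below uses Python's standard `urllib.robotparser` against rules passed in as text, so it runs without network access. The function name and sample rules are illustrative, and the standard-library parser follows the original robots exclusion conventions, so its matching may differ from Googlebot's in edge cases such as wildcards.

```python
from urllib.robotparser import RobotFileParser


def googlebot_can_fetch(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt rules allow user_agent to fetch url.

    robots_txt is the file's content as a string. This approximates, and does
    not reproduce, how Googlebot itself interprets the file.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # Hypothetical rules, for illustration only.
    rules = "User-agent: *\nDisallow: /private/\n"
    for candidate in ("https://example.com/blog/post",
                      "https://example.com/private/draft"):
        print(candidate, googlebot_can_fetch(rules, candidate))
```

Running a check like this over the URLs in a sitemap can surface pages that are submitted for discovery but blocked from retrieval.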

Indexing-stage failures break across several categories. Quality threshold failures appear in Google Search Console as “Crawled – currently not indexed” status, meaning the page reached evaluation but did not score above the inclusion threshold. Duplicate content failures appear as “Duplicate without user-selected canonical” or similar canonical confusion statuses, meaning the page was deduplicated against another URL the system selected as canonical. Technical directive failures appear when robots meta tags or X-Robots-Tag headers tell the indexing system to exclude the page even though crawling succeeded.

Ranking-stage failures happen when pages are indexed but do not appear in competitive ranking positions for their target queries. The failures break across several patterns. Insufficient relevance signals produce pages that rank for unrelated queries but not the queries the operator wants. Insufficient authority signals produce pages that match the query intent but get outranked by higher-authority competitors. Topical authority gaps produce pages on domains that lack established credibility in the query’s subject area. User experience failures produce pages that have all the relevance and authority signals but lose ranking position because of poor Core Web Vitals or mobile usability issues.

Serving-stage failures happen when pages rank well but do not appear with the visibility operators expect. The failures include featured snippet exclusion despite ranking well organically, rich result eligibility loss despite valid structured data, AI Overview source omission despite topical relevance, and local pack exclusion despite location relevance. Each of these is a serving-stage decision that operates on top of the ranking output and applies its own evaluation logic.

The diagnostic discipline that operators need is to identify the failure stage before designing the intervention. A page not appearing in search has a different fix depending on whether the failure is at crawling (improve discoverability and server response), indexing (improve content quality, fix canonical issues, audit directives), ranking (build relevance, authority, and user experience signals), or serving (target SERP features, structured data, and feature-specific optimization). Operators who skip the diagnostic step and apply generic SEO interventions often spend months on the wrong fix.
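The diagnostic order can be written down as a small routine that walks the stages in sequence and stops at the first one that failed. This is a sketch of the article's framework only: the `PageStatus` fields are hypothetical flags an operator would fill in from their own checks, and the intervention text is taken from the paragraph above.

```python
from dataclasses import dataclass
from typing import Optional

# Stage order follows the four-stage pipeline described in this guide.
STAGES = ("crawling", "indexing", "ranking", "serving")

INTERVENTIONS = {
    "crawling": "improve discoverability and server response",
    "indexing": "improve content quality, fix canonical issues, audit directives",
    "ranking": "build relevance, authority, and user experience signals",
    "serving": "target SERP features, structured data, and feature-specific optimization",
}


@dataclass
class PageStatus:
    """Hypothetical per-page flags gathered by the operator."""
    crawled: bool
    indexed: bool
    ranks_competitively: bool
    has_expected_visibility: bool


def first_failing_stage(status: PageStatus) -> Optional[str]:
    """Return the earliest stage that failed, or None if all four passed."""
    checks = (
        status.crawled,
        status.indexed,
        status.ranks_competitively,
        status.has_expected_visibility,
    )
    for stage, passed in zip(STAGES, checks):
        if not passed:
            # Later stages cannot run for this page, so stop here.
            return stage
    return None


if __name__ == "__main__":
    page = PageStatus(crawled=True, indexed=False,
                      ranks_competitively=False, has_expected_visibility=False)
    stage = first_failing_stage(page)
    print(stage, "->", INTERVENTIONS[stage])
```

Stopping at the first failure reflects the dependency chain: fixing a later stage has no effect while an earlier one is still blocking the page.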

Operator Leverage Points by Stage

The leverage points for SEO operators differ across the four stages, and matching interventions to stages is what produces results that diffuse SEO effort cannot match. Each stage has interventions that act on it directly and other interventions that affect it indirectly through the upstream stages.

Crawling-stage leverage points include sitemap maintenance, internal link architecture, server response optimization, robots.txt configuration, and crawl budget management for larger sites. Sitemap maintenance ensures Googlebot has the URL list it needs for discovery. Internal linking creates the discovery pathways that produce sustained crawl coverage without manual submission. Server response optimization improves the retrieval function and protects crawl budget from being consumed by slow responses. Robots.txt configuration prevents crawl waste on URLs that should not be retrieved at all.

Indexing-stage leverage points include content quality investment, structured data implementation, canonical management, and indexability directive auditing. Content quality is the dominant lever because the quality threshold is what most “Crawled – currently not indexed” failures hit. Structured data implementation determines rich result eligibility at the serving stage but operates at the indexing stage where the markup gets processed. Canonical management protects the index slot for the operator’s preferred URL when duplicate or similar content exists. Indexability directive auditing catches the meta robots tags and X-Robots-Tag headers that block indexing even when content quality would otherwise pass.

Ranking-stage leverage points include on-page optimization, internal linking for topical authority, backlink architecture, Core Web Vitals optimization, and topical depth investment. On-page optimization is what produces the relevance signals the ranking calculation evaluates. Internal linking for topical authority concentrates equity on the pages that need to rank for competitive queries. Backlink architecture produces the external authority signals that the ranking calculation weights heavily. Core Web Vitals optimization addresses the user experience signals that affect ranking calculation directly. Topical depth investment is the long-horizon lever that builds the domain-level credibility that lets specific pages rank in their topic clusters.

Serving-stage leverage points include structured data for rich result targeting, content structure optimization for featured snippet extraction, schema-driven entity association for knowledge graph eligibility, and click-through rate optimization through title and meta description tuning. Structured data targeting produces rich result eligibility that compounds organic ranking visibility. Content structure optimization makes pages eligible for featured snippet extraction. Schema-driven entity association strengthens knowledge graph connections that affect AI Overview source selection. CTR optimization affects the click-through patterns that feed back into ranking calculations over time.

The operator who works across all four stages produces results that operators working at one or two stages cannot match. The pipeline framework is what enables this multi-stage approach. Operators who think of SEO as one undifferentiated activity miss the precision that the four-stage decomposition enables. The framework is the intervention design discipline that separates effective SEO programs from generic ones.

For more on the operational discipline that ties the four stages together, the Content discipline covers the content-side architecture and the Credibility discipline covers the authority-side architecture.

Verdict

Google Search runs on a four-stage pipeline: crawling, indexing, ranking, and serving. The stages run in sequence, each depending on the output of the previous stage, and each performing a distinct function with distinct failure modes. Operators who understand the pipeline can diagnose ranking problems precisely and apply interventions at the stage where they actually act. Operators who treat search as one undifferentiated activity waste effort on interventions that cannot fix the actual failure point.

The crawling stage is discovery and retrieval. Googlebot finds URLs and fetches their content. Discovery happens through linking, sitemaps, and direct submission. Retrieval depends on server health and crawl budget allocation. Crawl-stage failures produce pages that never make it into Google’s pipeline at all, and the fix is technical and architectural rather than content-focused.

The indexing stage is parsing, evaluation, structured data processing, deduplication, and the inclusion decision. Most “Crawled – currently not indexed” failures hit at the quality threshold, and content quality is the dominant lever. Canonical management, structured data, and indexability directives all act at this stage. Indexing-stage failures produce pages that cannot rank because they are not in the index to begin with.

The ranking stage is the real-time calculation that scores indexed pages against queries. Hundreds of signals contribute, weighted dynamically based on query type and topical landscape. Content relevance, authority signals, user experience signals, and personalization all feed into the calculation. Ranking-stage failures produce pages that are indexed but cannot compete for the ranking positions operators want.

The serving stage is feature selection, content extraction, layout assembly, and final personalization. SERP features, rich results, AI Overviews, and local packs all live at this stage. Serving-stage decisions can elevate or suppress visibility independent of organic ranking position. Operators who target the serving stage deliberately produce visibility that pure ranking optimization misses.

The diagnostic discipline is to identify the failure stage before designing the intervention. The leverage discipline is to match interventions to stages and work across all four stages simultaneously. Operations that integrate the four-stage framework into their SEO workflow produce results that operations working at one or two stages cannot match.

For more on the operational disciplines that tie the four stages together, the Content discipline covers the content-side architecture and the Credibility discipline covers the authority-side architecture. The tier articles on How Google Crawls the Web, How Google Indexes Pages, How Google Ranks Search Results, and How Google Renders the SERP cover each stage in operational depth.

Frequently Asked Questions

What are the four stages of Google Search?

Crawling, indexing, ranking, and serving. Crawling discovers URLs and fetches their content. Indexing analyzes the content and stores it in Google’s database. Ranking calculates which indexed pages best answer a given query. Serving renders the final search results page that users see. Every page that appears in Google Search has passed through all four stages.

How does Google find new pages?

Google discovers new URLs primarily through three pathways: links from pages it already knows about, sitemaps submitted through Google Search Console, and direct URL submission via the URL Inspection tool. Internal linking from existing indexed content remains the most reliable discovery mechanism for new pages on an established site because it produces sustained crawl coverage without manual submission for every page.

Does crawling guarantee indexing?

No. Crawling and indexing are separate stages. Google crawls many more pages than it indexes. A page can be crawled, evaluated, and excluded from the index for reasons including low quality signals, duplicate content, technical directives blocking indexing, or the page failing to meet Google’s threshold for inclusion. Crawl status and index status must be checked separately in Google Search Console.

How long does it take for a new page to rank?

On an established site with consistent crawl frequency, indexing often occurs within 24 to 72 hours of publication, though Google does not guarantee a timeline. Initial ranking position depends on the competitive density of the target query, the authority of the publishing domain, and the freshness evaluation period during which Google tests the page on relevant queries. Most new pages reach their stable ranking position between 4 and 12 weeks after indexing.

What is the difference between ranking and serving?

Ranking is the calculation of which indexed pages should appear for a given query and in what order. Serving is the rendering of that ranked list into the actual search results page, including the layout decisions about which features to show, which result formats to use, and which content elements to extract from each ranked page. Two users searching the same query at the same moment can see different served results based on personalization signals applied at the serving layer.

How does Google decide which pages to index?

Google evaluates each crawled page against quality signals including content uniqueness, depth of coverage, technical health, structured data validity, and the perceived value of adding the page to the index relative to similar content already indexed. Pages that fail this evaluation are crawled but not indexed, which appears in Google Search Console as the “Crawled – currently not indexed” status. The threshold shifts based on topical landscape and ongoing algorithm updates.

What is the difference between Googlebot and the indexing system?

Googlebot is the crawler that fetches web pages. The indexing system is a separate set of processes that analyze, parse, evaluate, and store content from crawled pages. Googlebot’s job ends when content is delivered to the indexing pipeline. Confusion between these two systems is common because both are casually referred to as Google, but they are distinct components with separate functions and separate failure modes.

How often does Google update its index?

The Google index updates continuously. Different segments update at different frequencies based on content freshness signals, source authority, and update patterns of the domain. News content can appear in the index within minutes of publication. Static informational content may go weeks or months between re-evaluations. Operators see this as the staggered refresh pattern in Google Search Console performance reports.

Can I influence which stage of the pipeline affects my pages?

Yes. Different SEO interventions act on different stages. Technical SEO and crawl budget management primarily affect the crawling stage. Content quality, structured data, and indexability directives affect the indexing stage. On-page optimization, internal linking, and backlink architecture affect the ranking stage. Click-through optimization, structured data for rich results, and search appearance configuration affect the serving stage. Effective SEO programs operate across all four stages simultaneously.