How Google Renders the SERP: Features, Layout, and Visibility

AI Summary

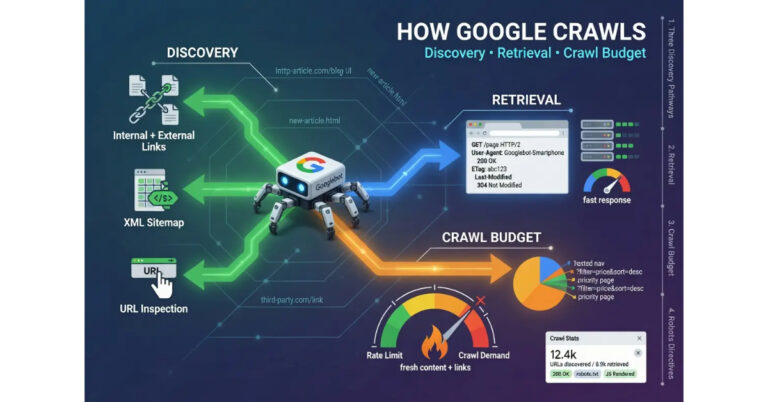

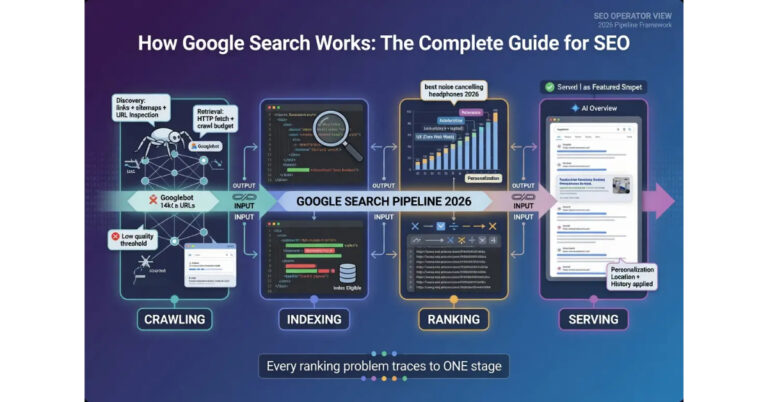

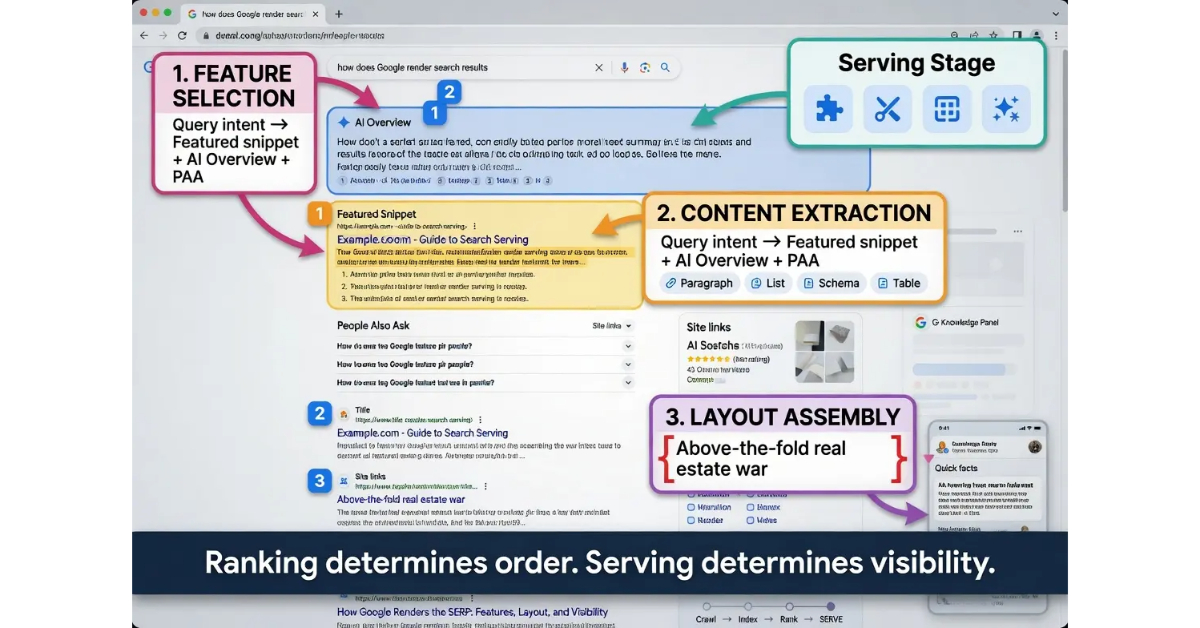

What is the serving stage? The serving stage is where Google transforms the ranked list of URLs from the ranking calculation into the actual search results page that users see. The stage runs four distinct functions: feature selection, content extraction, layout assembly, and final personalization. Each function has its own logic and its own decisions that operate on top of the ranking output.

What it is and who it is for: The serving stage matters for any operator who has pages that rank but do not produce the visibility expected from the ranking position. The stage is the fourth and final stage of the Google Search pipeline, and it is where SERP features, rich results, AI Overviews, and the click-through patterns that drive actual traffic are decided.

The rule: Ranking determines order. Serving determines visibility. A page ranking number five with a featured snippet often outperforms a page ranking number two without one. Operators who target the serving stage deliberately produce visibility that pure ranking optimization misses.

Feature Selection and Query Intent

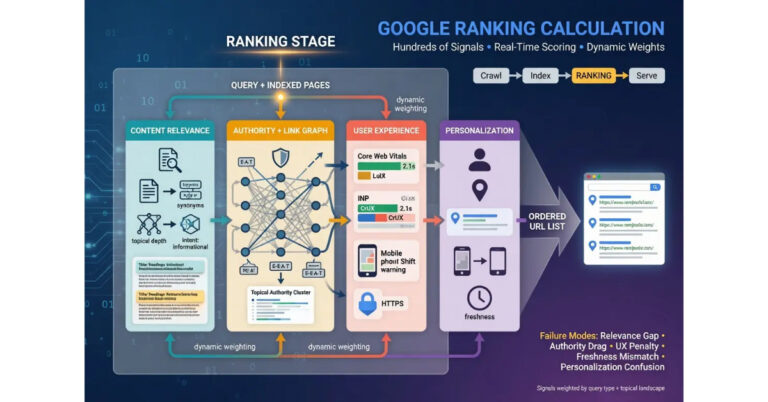

Feature selection is the first function at the serving stage. The system decides which SERP features to show for a specific query, drawing on query intent classification, the indexed content available to populate the features, and ongoing experimentation with which features produce the best user outcomes for the query type. The decision is independent of the ranking calculation in important ways. A page can rank number three organically and still appear above the organic results if the system selects it for a featured snippet, knowledge panel, or AI Overview citation.

Query intent classification drives most feature selection decisions. Informational queries tend to surface featured snippets, People Also Ask boxes, and AI Overviews because the user is seeking knowledge and the features deliver that knowledge directly. Navigational queries tend to surface knowledge panels and sitelink expansions because the user is seeking a specific site. Transactional queries tend to surface product carousels, local packs, and rich shopping results because the user is seeking to complete an action. Commercial investigation queries tend to surface comparison features, review summaries, and structured data-driven rich results because the user is evaluating options.

The feature ecosystem includes dozens of distinct formats. Featured snippets extract a paragraph, list, or table from a top-ranking page and display it above the organic results. People Also Ask shows related questions with expandable answers pulled from various indexed pages. Knowledge panels display structured information about entities, businesses, or concepts. Image packs surface relevant images from the index. Video carousels display video results from YouTube and other indexed video sources. Local packs surface nearby businesses for location-relevant queries. Top stories surface recent news content. Sitelinks display navigation shortcuts under top-ranking results for navigational queries. Each feature has its own selection criteria and its own content extraction logic.

The selection function evaluates which features to show based on whether high-quality content exists to populate them. A query that would semantically warrant a featured snippet may not produce one if no indexed page has the structural pattern the snippet extraction needs. A query that would warrant a local pack may not produce one if no nearby businesses are indexed in the relevant category. The feature ecosystem is opportunity-driven, surfacing features when the content layer can support them rather than forcing features into every query.

The competitive dynamics at the feature selection function differ from organic ranking. The competition for featured snippets is among pages that have the structural pattern the extraction needs. The competition for AI Overview citations is among pages that produce the kind of definitive answer the generative system can use. The competition for People Also Ask answers is among pages with question-answer content patterns. Pages that win at organic ranking can lose at feature selection, and pages that win at feature selection can come from outside the top organic positions.

The honest read on feature selection is that it operates as a parallel competition to organic ranking. Operators who only optimize for organic position miss the feature layer entirely. Operators who target feature selection deliberately produce visibility that pure ranking optimization cannot match. The discipline is to identify which features are likely to appear for the operator’s target queries and design content to compete in those specific feature competitions, not just in the organic ranking competition.

For more on the upstream stages that produce the ranked URLs feature selection operates on, the tier article on How Google Ranks Search Results covers the ranking calculation. The pillar guide on How Google Search Works covers the four-stage pipeline.

Content Extraction for SERP Features

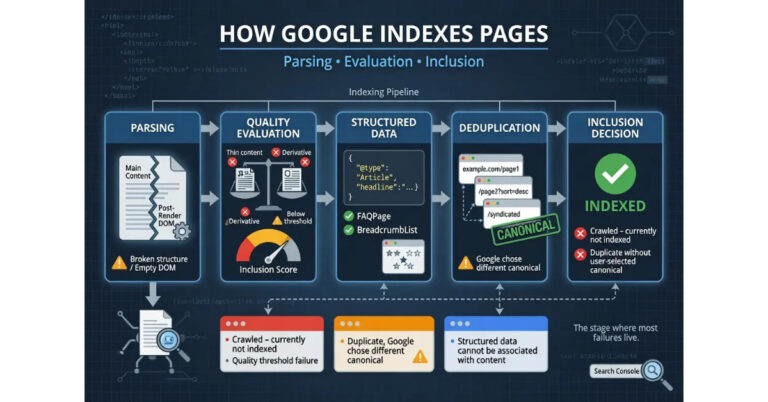

Content extraction is the function that pulls specific elements from indexed pages to populate selected features. When a page is chosen for a featured snippet, the system extracts a specific paragraph, list, or table from the page’s content. When a page contributes to People Also Ask, the system extracts a short answer block that matches the question intent. When a page provides a rich result, the system extracts the structured data and displays the relevant fields. The extraction logic determines whether a page benefits substantively from feature eligibility or just gets surface-level visibility.

Featured snippet extraction follows recognizable patterns. Paragraph snippets pull self-contained paragraphs that directly answer the query, usually 40-60 words long. List snippets pull ordered or unordered lists that match the query intent for procedural or enumerative content. Table snippets pull structured data tables for comparison or specification queries. The extraction prefers content that is self-contained and clearly delineated from the surrounding page rather than content that requires context from elsewhere on the page to make sense.
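
The length guideline above can be checked mechanically during editing. The following is a minimal sketch, assuming the 40-60 word range quoted above is a working heuristic rather than a documented limit; the function names are this example's own.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words in a candidate answer paragraph."""
    return len(text.split())


def in_snippet_range(text: str, low: int = 40, high: int = 60) -> bool:
    """True when the paragraph length falls inside the assumed word range.

    The 40-60 default mirrors the heuristic in the text; it is an
    assumption of this sketch, not a published Google threshold.
    """
    return low <= word_count(text) <= high


if __name__ == "__main__":
    candidate = " ".join(["word"] * 45)  # stand-in for a 45-word answer
    print(word_count(candidate), in_snippet_range(candidate))
```

A check like this only flags length. Whether the paragraph is self-contained still needs a human read.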

The structural patterns that support featured snippet extraction include clear question-answer formats, defined list structures with descriptive lead-in sentences, and tables with explicit headers. Content that buries the answer inside paragraphs without clear delineation may not extract cleanly. Content that uses unconventional formatting like custom CSS-styled lists may not be recognized as the structure type the extraction expects.

People Also Ask extraction operates on a different pattern. The function looks for content that answers specific question-format queries with concise, definitive responses. The structure that performs best is an explicit question framed in the page (often as a heading) followed by a direct answer in the first sentence or two of the section. Pages that have FAQ sections with question headings and short answer paragraphs are well-positioned for People Also Ask placement.
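
The question-heading-plus-direct-answer structure can be audited on a page's HTML. This is a rough sketch using only the Python standard library; treating `h2` to `h4` as the heading levels and a trailing question mark as the question test are assumptions of the example, not rules Google publishes.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple

HEADING_TAGS = {"h2", "h3", "h4"}  # assumed heading levels for this sketch


class QuestionAnswerFinder(HTMLParser):
    """Collect (question heading, first following paragraph) pairs."""

    def __init__(self) -> None:
        super().__init__()
        self.pairs: List[Tuple[str, str]] = []
        self._tag: Optional[str] = None  # heading or paragraph being read
        self._buffer: List[str] = []
        self._pending_question: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS or tag == "p":
            self._tag = tag
            self._buffer = []

    def handle_data(self, data):
        if self._tag is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag != self._tag:
            return  # ignore inline tags such as <b> inside the block
        text = " ".join("".join(self._buffer).split())
        if tag in HEADING_TAGS:
            # Only headings phrased as questions start a pair.
            self._pending_question = text if text.endswith("?") else None
        elif self._pending_question and text:
            self.pairs.append((self._pending_question, text))
            self._pending_question = None
        self._tag = None


def find_question_answers(html: str) -> List[Tuple[str, str]]:
    finder = QuestionAnswerFinder()
    finder.feed(html)
    return finder.pairs
```

Running `find_question_answers` over a page lists the question headings that already have an answer paragraph directly beneath them, which makes sections lacking that pattern easy to spot.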

Rich result extraction depends on structured data markup. Article rich results pull headline, author, publication date, and image fields from Article schema. Product rich results pull price, availability, rating, and review count from Product schema. FAQ rich results pull question-answer pairs from FAQPage schema, although Google has limited that display mainly to well-known, authoritative government and health websites since 2023. Recipe rich results pull cooking time, ingredients, and instructions from Recipe schema. The extraction is direct: the structured data fields populate the rich result fields, and missing or invalid fields produce missing or degraded rich result displays.
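
To make the field-to-display relationship concrete, here is a small sketch that builds Product markup as JSON-LD. The schema.org type and property names are real; the function name, argument list, and sample values are illustrative assumptions, and the fields Google actually requires should be checked against its current documentation.

```python
import json


def product_jsonld(name: str, price: float, currency: str,
                   in_stock: bool, rating: float, review_count: int) -> str:
    """Build a schema.org Product object as a JSON-LD string."""
    availability = "InStock" if in_stock else "OutOfStock"
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Hypothetical product used purely for illustration.
    print(product_jsonld("Example Kettle", 39.5, "USD", True, 4.4, 128))
```

The output would be embedded in the page inside a `script` element of type `application/ld+json`, and the values should match what the visible page shows.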

AI Overview extraction operates on the most complex logic. The generative system synthesizes information from multiple indexed pages into a single answer, with citations to the source pages. The extraction is not pulling specific elements; it is using the indexed content as input to generate new text that summarizes what multiple sources say about the query. Pages that contribute to AI Overviews are pages that the generative system finds useful as substantive sources for the topic, which often correlates with topical authority and content depth more than with structural patterns.

The honest read on content extraction is that it rewards content designed for extraction. Pages with clear structural patterns, well-formed structured data, and self-contained answer blocks compete better at the extraction layer than pages with the same information delivered in less extractable forms. Operators who design content for the extraction layer produce feature placements that operators with the same information but worse structure cannot match.

Layout Assembly and Real Estate

Layout assembly is the function that combines the selected features and ranked organic results into the final page layout. The function makes decisions about which features appear above the organic results, which appear interspersed with them, and how many organic results appear above the fold versus below. The layout is not fixed; it varies by query, by device, by user signals, and by ongoing experimentation with what produces the best user experience for the specific query.

The above-the-fold real estate is the most contested layout space because it determines what users see without scrolling. SERP features routinely consume the top of the layout for queries where they appear, pushing organic results below the fold. A query with a featured snippet, People Also Ask, and AI Overview can push the first organic result far enough down the page that ranking number one organically produces less visibility than ranking number five with feature placement.

The mobile layout has different real estate constraints than desktop. Mobile screens are narrower, which means each layout element consumes more vertical space relative to the visible viewport. Features that fit comfortably on desktop above the fold can push organic results entirely below the fold on mobile. The layout decisions account for this by adjusting which features appear and how they are sized, but the fundamental constraint is that mobile users see fewer results without scrolling than desktop users.

The layout varies by query type in patterns operators can recognize. Local intent queries put the local pack high in the layout, often above any organic results. News queries put top stories prominently. Shopping queries put product carousels above organic results. Reference queries often put featured snippets and AI Overviews at the top. The variation means that a single domain’s organic ranking position has different visibility depending on which features the query type triggers.

The interspersed layout pattern places features between organic results rather than above them. People Also Ask boxes commonly appear after the second or third organic result. Image packs sometimes appear in the middle of the organic results. Sitelinks can expand under top-ranking results, pushing the next organic result down the page. Each interspersed feature consumes vertical space that affects the visibility of the organic results around it.

The dynamic nature of layout assembly is what makes pure ranking optimization insufficient. A page can hold its organic ranking position while its actual visibility on the SERP shifts dramatically based on which features the layout includes. Operators who track only ranking position miss the visibility variation that the layout layer produces. Operators who track click-through rate alongside ranking position see the layout effect because CTR responds to actual visibility rather than just position number.
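
Tracking CTR alongside position can be reduced to a simple comparison between two reporting periods. The sketch below assumes clicks, impressions, and average position have already been exported for each period; the thresholds (half a position, a 25% relative CTR drop) are arbitrary illustrative defaults, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    clicks: int
    impressions: int
    avg_position: float

    @property
    def ctr(self) -> float:
        """Click-through rate: clicks divided by impressions."""
        return self.clicks / self.impressions if self.impressions else 0.0


def layout_compression_suspected(before: PeriodStats, after: PeriodStats,
                                 max_position_shift: float = 0.5,
                                 min_ctr_drop: float = 0.25) -> bool:
    """Flag a page whose position held steady while CTR fell sharply."""
    if before.ctr == 0:
        return False  # no baseline to compare against
    position_stable = (
        abs(after.avg_position - before.avg_position) <= max_position_shift
    )
    relative_drop = (before.ctr - after.ctr) / before.ctr
    return position_stable and relative_drop >= min_ctr_drop
```

A flagged page is a prompt for a manual SERP check, since a CTR drop at stable position can also come from seasonality or a changed title and description.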

The honest read on layout assembly is that it operates as a competition for above-the-fold real estate that includes but exceeds the organic ranking competition. Operators who target feature placement aggressively produce visibility that page-1 organic ranking alone cannot match. Operators who do not engage with the feature layer often see flat traffic despite holding ranking positions, because the layout has been redesigned around them in ways that compress organic visibility.

AI Overviews and Generative Results

AI Overviews are the generative result format that synthesizes information from multiple indexed sources into a single answer at the top of the SERP. The function operates differently from any earlier SERP feature because it does not pull a single page’s content for display; it generates new text that draws on multiple sources, with citations to the contributing pages. The format has become an increasingly prominent layout element since its introduction, and the operational dynamics around it differ from traditional ranking and feature competition.

The selection criteria for which queries produce AI Overviews are still evolving. The format appears most often on informational queries where the system can produce a substantive synthesis from multiple sources. Queries with strong commercial intent, queries with definitive single answers, and queries where the synthesis would not improve on existing SERP features tend not to trigger AI Overviews. The pattern is that AI Overviews appear when generative synthesis adds value beyond what existing features provide.

The contribution model for AI Overviews differs from other features. Pages that contribute to the synthesis are cited as sources, often with multiple sources contributing to a single AI Overview. The citations appear as small references that users can expand to see the contributing pages. The traffic implications are mixed: cited sources gain visibility through inclusion but may lose click-through traffic because the AI Overview answers the query directly.

The pages that get selected as AI Overview sources tend to share characteristics. Topical authority on the query subject is the dominant factor. Content depth and substantive coverage matter because the generative system needs substantive material to synthesize. Structural clarity helps because the system can extract definitive statements more reliably from well-structured content. Authoritative sourcing within the content matters because the system tends to weight sources that cite their own evidence over sources that make unsupported claims.

The traffic effects of AI Overviews on the broader SERP are still being measured by the industry. Some queries see significant click-through compression because the AI Overview answers the query and users do not click through to source pages. Other queries see expanded traffic to cited sources because the citations function as endorsements that drive clicks specifically to the cited pages. The variation depends on query type, user intent, and how complete the AI Overview’s answer is.

The competitive dynamics for AI Overview citation are different from organic ranking competition. The system does not just select the top-ranking page. It selects pages that contribute substantively to the synthesis, which can include pages outside the top-ranking organic positions. Pages with strong topical authority on niche subjects often appear in AI Overviews for those subjects even when their organic ranking is not dominant.

The honest read on AI Overviews is that they represent a structural shift in how some queries surface content. Operators who treat AI Overviews as just another SERP feature miss the generative dynamics that produce them. Operators who understand that AI Overview citation is a different competition from organic ranking can design content that competes effectively in both. The pattern most likely to produce AI Overview citations is substantive topical authority combined with well-structured definitive content that the generative system can use as source material.

Where Serving Actually Fails

Serving failures break across recognizable patterns, and operators who can identify the specific failure mode can apply the targeted intervention. The diagnosis matters because pages that rank well but produce disappointing visibility often look superficially similar across different failure types, but the fixes differ depending on which serving function actually broke.

The first failure pattern is featured snippet exclusion despite ranking. The page ranks in the top organic positions but does not get pulled into the featured snippet for the query, while a lower-ranking page does. The pattern shows up as queries where another page captures the snippet position despite the operator’s page having stronger overall signals. The root causes include content structure that does not match the snippet extraction patterns, missing definitive answer blocks, and burying the answer inside surrounding context that the extractor cannot parse cleanly. The fix is content-structure work: add explicit question-answer blocks, format definitive answers as self-contained paragraphs, use clear list structures for procedural content.

The second failure pattern is rich result eligibility loss. The page has structured data markup but does not appear with rich result formatting in the SERP. The pattern shows up in Search Console as eligible for rich results but not appearing, or as rich result errors that prevent eligibility. The root causes include structured data validation errors, markup that contradicts visible page content, missing required fields for the rich result type, and structured data that does not match the page’s actual content type. The fix is structured data hygiene: validate through the Rich Results Test, fix any errors that surface, ensure markup matches visible content, and add any required fields the markup is missing.
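
A pre-check for missing fields can run before markup ever reaches the Rich Results Test. The sketch below is deliberately simplified: the `REQUIRED_FIELDS` table is a hypothetical placeholder, and the authoritative required-field lists are the ones in Google's documentation for each rich result type.

```python
import json
from typing import Dict, List

# Hypothetical required-field lists for illustration only. Replace them
# with the lists from the official documentation for each type.
REQUIRED_FIELDS: Dict[str, List[str]] = {
    "Product": ["name", "offers"],
    "Recipe": ["name", "image"],
}


def missing_fields(jsonld_text: str) -> List[str]:
    """Return required fields that are absent or empty in a JSON-LD object."""
    data = json.loads(jsonld_text)
    required = REQUIRED_FIELDS.get(data.get("@type", ""), [])
    return [field for field in required if not data.get(field)]


if __name__ == "__main__":
    markup = '{"@context": "https://schema.org", "@type": "Recipe", "name": "Soup"}'
    print(missing_fields(markup))
```

This does not replace the Rich Results Test, which also catches invalid values and markup that conflicts with the page. It only surfaces the cheapest class of error early.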

The third failure pattern is AI Overview source omission. The page is topically authoritative on the query subject but does not appear as an AI Overview source. The pattern shows up as AI Overviews on the operator’s target queries that cite competing sources without including the operator’s content. The root causes include weak topical authority signals on the specific query subject, content structure that makes definitive statements harder to extract, and absence of the citation patterns the generative system uses as quality signals. The fix is topical authority development combined with content structure work: build cluster depth on the topical area, structure content with definitive statements clearly delineated, cite primary sources within the content.

The fourth failure pattern is local pack exclusion despite location relevance. The business is location-relevant for the query but does not appear in the local pack while competing businesses do. The pattern shows up on Google Maps and local SERP queries where the business is geographically relevant but missing from the surfaced results. The root causes include missing or incomplete Google Business Profile, weak local citation signals, missing reviews, or geographic targeting issues. The fix is local SEO work: complete and verify the Google Business Profile, build local citations consistently, encourage and respond to reviews, ensure NAP (name, address, phone) consistency across the web.
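
NAP consistency is easy to check once listings are collected in one place. The following sketch compares each listing against a reference record after light normalization; the source names and sample data are hypothetical, and the normalization does not expand abbreviations such as "St" versus "Street".

```python
import re
from typing import Dict, List, Tuple

Nap = Tuple[str, str, str]  # (name, address, phone)


def _clean(text: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace."""
    return " ".join(re.sub(r"[^\w\s]", "", text).lower().split())


def normalize_nap(name: str, address: str, phone: str) -> Nap:
    """Reduce a listing to a comparable (name, address, digits) tuple."""
    return _clean(name), _clean(address), re.sub(r"\D", "", phone)


def inconsistent_listings(reference: Nap, listings: Dict[str, Nap]) -> List[str]:
    """Return the sources whose NAP differs from the reference listing."""
    ref = normalize_nap(*reference)
    return [src for src, nap in listings.items() if normalize_nap(*nap) != ref]


if __name__ == "__main__":
    reference = ("Acme Bakery", "12 Main St.", "(555) 010-0199")
    listings = {
        "directory_a": ("ACME Bakery", "12 Main St", "555-010-0199"),
        "directory_b": ("Acme Bakery", "12 Main Street", "555 010 0199"),
    }
    print(inconsistent_listings(reference, listings))
```

Because abbreviations are left alone, a flagged listing may be a formatting difference rather than a real conflict, so the output is a review list rather than a verdict.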

The fifth failure pattern is People Also Ask absence. The page comprehensively covers a topic but does not contribute answers to People Also Ask boxes for related queries. The pattern shows up as queries with PAA boxes where the operator’s content is topically relevant but not cited. The root causes include lack of explicit question-format content, missing FAQ structure, and content that addresses topics descriptively rather than answering questions directly. The fix is content structure: add FAQ sections with question headings and direct answers, structure content around explicit questions users actually ask, frame topical coverage in question-answer patterns where appropriate.

The sixth failure pattern is cannibalization. Multiple pages on the same site compete for the same queries and split the SERP feature opportunities between them, with none of them winning the feature. The pattern shows up as multiple URLs from the operator’s site appearing in the organic results without any of them capturing features. The root causes include unintended topical overlap between pages, weak canonical signals between similar pages, and content structure that prevents Google from identifying the canonical authority on the topic. The fix is consolidation: merge overlapping pages, redirect duplicates onto the canonical URL, strengthen the topical authority signal of the consolidated page.
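
The cannibalization signature can be surfaced from exported performance data. This sketch assumes rows of (query, page URL, impressions) have already been exported, for example from a Search Console performance report; the minimum-impressions cutoff is an arbitrary illustrative default.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def cannibalized_queries(rows: Iterable[Tuple[str, str, int]],
                         min_impressions: int = 50) -> Dict[str, List[str]]:
    """Map each query to its competing pages when more than one page
    from the same site receives meaningful impressions for it."""
    pages_by_query = defaultdict(set)
    for query, page, impressions in rows:
        if impressions >= min_impressions:  # ignore incidental appearances
            pages_by_query[query].add(page)
    return {
        query: sorted(pages)
        for query, pages in pages_by_query.items()
        if len(pages) > 1
    }


if __name__ == "__main__":
    sample = [
        ("serp features", "/guide-a", 300),
        ("serp features", "/guide-b", 120),
        ("ai overviews", "/overview", 500),
        ("ai overviews", "/old-post", 5),
    ]
    print(cannibalized_queries(sample))
```

Two pages sharing a query is not always a problem, so each flagged query still needs a judgment on whether the pages serve the same intent before any merge or redirect.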

The honest read on serving failures is that the diagnosis determines the intervention. Operators who confuse featured snippet exclusion with rich result eligibility loss spend effort on the wrong layer. Operators who treat AI Overview omission as a ranking problem rather than a topical authority and content structure problem produce no improvement. The Search Console performance reports filtered by search appearance, the Rich Results Test, and the manual SERP audit are the diagnostic instruments that enable precision.

Operator Leverage Points at the Serving Stage

The leverage points for operators at the serving stage break across the major functions: feature targeting, structured data implementation, content structure for extraction, and topical authority for AI Overview eligibility. Each function has interventions that act on it directly and other interventions that affect it indirectly through related signals.

Feature targeting leverage points include identifying which features appear for target queries, designing content to compete in those specific feature competitions, and tracking feature placement separately from organic ranking. Operators who manually search their target queries and document which features appear can build a feature opportunity map that identifies which content investments produce the highest visibility. The targeting discipline is what separates operators who win at feature placement from operators who optimize blindly for ranking.
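
A feature opportunity map can be as simple as a nested dictionary filled in during manual audits. The sketch below assumes the operator records, per query, which domain holds each observed feature; the domains and queries shown are placeholders.

```python
from typing import Dict, List, Tuple

# audit[query][feature] = domain observed holding that feature
Audit = Dict[str, Dict[str, str]]


def feature_opportunities(audit: Audit, own_domain: str) -> List[Tuple[str, str]]:
    """List (query, feature) pairs where a feature appears on the SERP
    but is held by a domain other than the operator's own."""
    gaps = []
    for query, features in audit.items():
        for feature, holder in features.items():
            if holder != own_domain:
                gaps.append((query, feature))
    return sorted(gaps)


if __name__ == "__main__":
    audit = {
        "how google serves results": {
            "featured snippet": "competitor.example",
            "People Also Ask": "mysite.example",
        },
        "serp layout": {"AI Overview": "competitor.example"},
    }
    for query, feature in feature_opportunities(audit, "mysite.example"):
        print(f"{query}: {feature}")
```

Keeping the audit as plain data means it can be re-run after each content change to see which gaps have closed.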

Structured data leverage points include systematic implementation of relevant schema types across the site, validation discipline through the Rich Results Test, and feature-specific markup that targets the rich result formats most likely to appear for the operator’s content type. The implementation work is technical but produces compounding visibility benefits as the structured data unlocks rich result eligibility across the indexed page base.

Content structure leverage points include explicit question-answer blocks for People Also Ask eligibility, well-formed list and table structures for featured snippet extraction, FAQ sections for FAQ rich results, and clear definitive statements for AI Overview citation. The structural work makes the same information more extractable, which converts the underlying content quality into surface-level SERP visibility.

Topical authority leverage points apply to AI Overview eligibility specifically. The interventions are the same as topical authority development for ranking but produce serving-stage benefits that extend beyond ranking position. Cluster depth, comprehensive coverage of subtopics, citation patterns within content, and substantive original analysis all contribute to the topical authority signal that AI Overviews use as input.

The diagnostic discipline ties the leverage points together. Operators who can identify which serving function is failing for their target queries apply the right intervention the first time. Operators who cannot diagnose specifically often spend months on generic SEO improvements that do not address the specific feature placement issue. The Search Console performance report’s search appearance filter is the diagnostic instrument that segments performance by feature type, which makes the failure modes visible.
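
The same segmentation is available programmatically. The sketch below builds a request body for the Search Console API's Search Analytics query, grouped by the `searchAppearance` dimension, and summarizes response rows. It assumes the documented body fields (`startDate`, `endDate`, `dimensions`, `rowLimit`) and row shape (`keys`, `clicks`, `impressions`); authentication and the actual API call are omitted, and the sample rows are invented placeholders.

```python
from typing import Dict, List


def search_appearance_request(start_date: str, end_date: str,
                              row_limit: int = 100) -> dict:
    """Request body that groups performance data by search appearance."""
    return {
        "startDate": start_date,  # YYYY-MM-DD
        "endDate": end_date,
        "dimensions": ["searchAppearance"],
        "rowLimit": row_limit,
    }


def ctr_by_appearance(rows: List[dict]) -> Dict[str, float]:
    """Map each search appearance key to clicks divided by impressions."""
    result = {}
    for row in rows:
        appearance = row["keys"][0]
        impressions = row.get("impressions", 0)
        clicks = row.get("clicks", 0)
        result[appearance] = clicks / impressions if impressions else 0.0
    return result


if __name__ == "__main__":
    print(search_appearance_request("2024-01-01", "2024-01-31"))
    # Placeholder rows shaped like an API response, not real data.
    fake_rows = [{"keys": ["SAMPLE_FEATURE"], "clicks": 30, "impressions": 600}]
    print(ctr_by_appearance(fake_rows))
```

The appearance keys returned depend on which features the property has actually earned, so an empty or short response is itself a diagnostic signal.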

For more on the operational disciplines that support serving-stage work, the Content discipline covers the content-side architecture and the Credibility discipline covers the authority-side architecture.

Verdict

The serving stage is the rendering layer of the Google Search pipeline. The function transforms the ranked URLs from the ranking calculation into the actual search results page that users see, running four distinct sub-functions: feature selection, content extraction, layout assembly, and final personalization. Operators who understand the stage can target feature placement deliberately. Operators who treat ranking as the final stage often see flat traffic despite holding ranking positions because the layout has been redesigned around them in ways that compress organic visibility.

Feature selection decides which SERP features to show for a query, drawing on query intent classification and the indexed content available to populate features. The function operates as a parallel competition to organic ranking. Pages that win at organic ranking can lose at feature selection, and pages that win at feature selection can come from outside the top organic positions. Operators who target features deliberately produce visibility that pure ranking optimization cannot match.

Content extraction pulls specific elements from indexed pages to populate selected features. Featured snippets pull paragraphs, lists, and tables. People Also Ask pulls short answer blocks. Rich results pull structured data fields. AI Overviews synthesize new text from multiple sources. The extraction logic determines whether feature eligibility produces substantive visibility or just surface-level inclusion. Content designed for extraction competes better than content with the same information delivered in less extractable forms.

Layout assembly combines features and ranked results into the final page. The above-the-fold real estate is contested heavily, and feature placement routinely pushes organic results below the fold even on top organic positions. The layout varies by query type, device, and ongoing experimentation. Mobile layouts have different real estate constraints than desktop. The dynamic nature of layout assembly is what makes pure ranking optimization insufficient for sustained visibility.

AI Overviews represent a structural shift in how some queries surface content. The generative system synthesizes information from multiple sources and cites the contributing pages. The selection criteria favor topical authority, content depth, structural clarity, and authoritative sourcing within the content. AI Overview citation is a different competition than organic ranking, and operators who understand the difference design content that competes in both.

The failure patterns at the serving stage are diagnosable. Featured snippet exclusion, rich result eligibility loss, AI Overview source omission, local pack exclusion, People Also Ask absence, and cannibalization each have specific signatures and specific fixes. Operators who diagnose specifically apply the right intervention. Operators who do not often spend months on generic SEO improvements that cannot move serving outcomes because the changes target the wrong function.

The leverage points include feature targeting, structured data implementation, content structure for extraction, topical authority development, and the diagnostic discipline that ties them together. The interventions are specific to the serving stage rather than generic SEO improvements, which is what produces results that broad SEO effort does not reliably produce.

For the broader pipeline framework, the pillar guide on How Google Search Works covers all four stages. The sibling articles on How Google Crawls the Web, How Google Indexes Pages, and How Google Ranks Search Results cover the upstream stages that feed the serving stage with ranked URLs and indexed content.

Frequently Asked Questions

What is the difference between ranking and serving?

Ranking is the calculation of which indexed pages should appear for a given query and in what order. Serving is the rendering of that ranked list into the actual search results page, including decisions about which features to show, which result formats to use, and which content elements to extract. A page can rank well organically and still produce limited visibility if the serving stage selects features that push organic results below the fold. Operators who target serving outcomes deliberately produce visibility that pure ranking optimization cannot match.

How do I get my page into a featured snippet?

Featured snippets pull self-contained content blocks that directly answer the query. The structural patterns that support extraction include clear question-answer formats, defined list structures with descriptive lead-in sentences, and tables with explicit headers. Content that buries the answer inside paragraphs without clear delineation often does not extract cleanly. The page also usually needs to rank on the first page of organic results for the query, because featured snippet selection draws mostly from those positions, though not always from the very top. Strong content structure combined with strong ranking signals is what produces featured snippet placement.

What are AI Overviews and how do they affect my traffic?

AI Overviews are the generative result format that synthesizes information from multiple indexed sources into a single answer at the top of the SERP, with citations to contributing pages. The traffic effects are mixed: cited sources gain visibility through inclusion but may lose click-through traffic because the AI Overview answers the query directly. The variation depends on query type, user intent, and how complete the AI Overview’s answer is. Pages with strong topical authority, content depth, and well-structured definitive content tend to be selected as AI Overview sources.

Why does my page have valid structured data but no rich result?

Rich result eligibility requires more than valid structured data. The system also evaluates whether the markup matches the visible page content, whether all required fields are present, whether the page meets quality thresholds for the rich result type, and whether the query type is one that produces rich results for that content type. Use the Rich Results Test to validate the markup, and check the rich result status reports in Search Console (listed under Enhancements) for eligibility status. Eligibility loss often comes from validation errors, missing required fields, or markup that does not match what users see on the page.

How do I optimize for People Also Ask placement?

People Also Ask boxes pull short answer blocks that address specific question-format queries with concise responses. The structure that performs best is an explicit question framed in the page (often as a heading) followed by a direct answer in the first sentence or two of the section. Pages with FAQ sections containing question headings and short answer paragraphs are well-positioned for People Also Ask placement. The answer should be self-contained and definitive enough that the system can extract it without needing surrounding context.

Why do I see different SERP layouts on mobile and desktop?

Layout assembly accounts for the different real estate constraints of mobile and desktop viewports. Mobile screens are narrower, which means each layout element consumes more vertical space relative to the visible viewport. Features that fit comfortably on desktop above the fold can push organic results entirely below the fold on mobile. The layout decisions adjust which features appear and how they are sized for each device type. Operators who only check desktop SERPs miss the mobile visibility patterns where their target users actually search.

Can I rank well organically but still lose visibility?

Yes. The serving stage operates on top of the ranking calculation and can compress organic visibility through feature placement that pushes organic results below the fold. A page ranking number two on a query with a featured snippet, People Also Ask, and AI Overview can produce less visibility than a page ranking number five with strong feature placement. Tracking ranking position alone misses the visibility variation that the layout layer produces. Click-through rate combined with ranking position reveals the actual visibility because CTR responds to where the page appears on the rendered SERP rather than just its ranking number.

How do I track which SERP features appear for my target queries?

Manual SERP audits combined with Search Console’s Search Appearance filter are the diagnostic instruments. Manual audits mean searching the target queries in a controlled environment (an incognito browser window with a fixed or spoofed location) and documenting which features appear at the top of the SERP, between organic results, and in the right-side panel. The Search Appearance filter in Search Console segments performance data by feature type, which shows whether your pages are getting feature placements and which features are producing the most clicks. Combining the two methods produces the feature opportunity map that targeted optimization needs.

What kills SERP feature eligibility?

Common eligibility killers include structured data validation errors, markup that does not match visible content, missing required fields for the rich result type, content structure that does not match featured snippet extraction patterns, weak topical authority for AI Overview consideration, and aggressive interstitials or layout patterns that signal poor user experience. Each feature type has its own eligibility criteria, and pages can be eligible for some features while excluded from others on the same domain. The diagnostic discipline is to identify which feature is producing the eligibility loss and address that specific failure mode.